- Some companies estimate data leaks from AI tools cost them over $3 million annually.

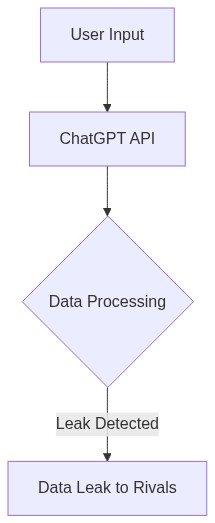

- ChatGPT integrations seem benign but often expose sensitive data to APIs with unclear limits.

- Audit your tool stack now to avoid becoming another cautionary tale.

The Hook/Scam: ChatGPT—A Convenient Mirage Hiding a Data Quagmire

Everyone’s quick to jump on board the AI train, as if it’s the savior of modern industry. But peel back the layers, and you’ll find vendors are less concerned about protecting your data than spinning it into gold thread for their corporate cloaks. The reality? “ChatGPT has turned into a silent watchtower for rivals spying on business strategies,” said John Corcoran on Hacker News, igniting debates as companies scramble to assess security risks. It’s the same old story: tech hype overshadows the practical downsides, and confidentiality no longer feels safe.

The bigger picture reveals a murmur of discontent about API mishandling. According to a Reddit leak that mushroomed across discussion forums, “It’s a real problem when these APIs can siphon data without stringent, declared firewalls.” (source). Is OpenAI fueling your competition with data you unwittingly shared?

The TMI Deep Dive: Gaps in the API Curtain

Here’s a disturbing technical truth for those who love to dig deeper: API limits are often left wide open, defaulting to 5,000 calls per minute unless otherwise specified. Ask yourself, who adjusts these settings? Certainly not small teams overburdened by day-to-day tasks. You’d think TLS encryption would be a no-brainer. Think again: many internal communications still travel unencrypted, setting a perfect stage for corporate espionage.
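If nobody tightens the provider-side limit, a team can still cap its own outbound calls. The following is a minimal sketch of a client-side token bucket; the class name `TokenBucket`, the 60-calls-per-minute budget, and the burst size are all hypothetical and are not taken from any vendor's documentation.

```python
import time


class TokenBucket:
    """Client-side throttle: allows at most `rate_per_minute` calls on average."""

    def __init__(self, rate_per_minute, burst, clock=time.monotonic):
        self.rate_per_second = rate_per_minute / 60.0
        self.capacity = burst
        self.tokens = float(burst)
        self.clock = clock
        self.last = clock()

    def allow(self):
        """Return True and consume a token if a call may go out now."""
        now = self.clock()
        elapsed = now - self.last
        # Refill in proportion to elapsed time, capped at the burst size.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate_per_second)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


# Hypothetical budget: 60 calls per minute with bursts of up to 5.
limiter = TokenBucket(rate_per_minute=60, burst=5)
```

A wrapper around the API client would call `limiter.allow()` before each request and queue or drop the request when it returns `False`. The injectable `clock` argument is only there to make the limiter easy to test.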

Read between the lines of OpenAI’s own whitepapers, and you’ll find mentions of encrypted TLS only in broader contexts, seldom touching on explicit default settings (source). In essence, here’s a system designed for data excess, not moderation. What you get are unused throttling features and unwrapped payloads, conveniently vulnerable to interception. Put custom middleware in place that enforces encryption in transit and guards against man-in-the-middle attacks, or else hand over sensitive data on a silver platter.
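One way to read the middleware advice above is as a thin guard that refuses plaintext endpoints and insists on verified TLS. This is a sketch using only the Python standard library; the function names `require_https` and `strict_tls_context` are made up for illustration, and the TLS 1.2 floor is an assumption rather than a documented requirement of any provider.

```python
import ssl
from urllib.parse import urlparse


def require_https(url):
    """Return the URL unchanged, or raise if it would travel in plaintext."""
    parsed = urlparse(url)
    if parsed.scheme != "https" or not parsed.hostname:
        raise ValueError(f"refusing non-HTTPS endpoint: {url!r}")
    return url


def strict_tls_context():
    """TLS context with certificate and hostname checks, TLS 1.2 or newer."""
    context = ssl.create_default_context()
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context
```

Both pieces can be passed to a standard client, for example `urllib.request.urlopen(require_https(url), context=strict_tls_context())`, so that a misconfigured `http://` endpoint fails loudly instead of leaking a payload.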

The Money/Job Impact: When Data Loss = Pink Slips

Imagine waking up to a company memo: “We’ve experienced a breach, and layoffs are inevitable.” This nightmare is closer to reality than firms care to admit. The average financial toll of a data breach sits at a staggering $3.86 million as reported by IBM (source). That shadow of vulnerability is why more corporations are putting ChatGPT on the blacklist. It becomes a vicious cycle, where potential exposure means cutting projects, sometimes pipelines, and indeed, people.

And while you might think it’s just big tech losing out, smaller companies are on edge, holding back on AI adoption because they can’t afford the fallout. For engineers, particularly those on contract, it’s a double bind. Either lose assignments or risk being part of a downsizing after a client catches the “data loss bug.” This job market readjustment puts developers into a perennial state of uncertainty.

The Survival Guide: Play Safe, Play Smart

Here’s your toolkit for surviving the AI quagmire. First, set up a stringent vetting process for any new AI tool, and run it continuously rather than once. Establish an AI usage policy that strictly separates critical systems from third-party APIs. Isolate AI processes with firewall rules, confine them to secure sandboxes, and keep them off sensitive data paths until properly vetted security measures are enforced.
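The separation policy described above can be expressed as a deny-by-default egress check. The sketch below is illustrative only: the system names in `EGRESS_POLICY` are invented, and a real deployment would enforce the same rule at the firewall or proxy layer rather than in application code alone.

```python
from urllib.parse import urlparse

# Hypothetical policy: which internal systems may reach which external hosts.
# Critical systems get an empty set, so every third-party call is denied.
EGRESS_POLICY = {
    "marketing-drafts": {"api.openai.com"},
    "billing": set(),
    "customer-records": set(),
}


def is_call_allowed(system, url):
    """Deny by default: unknown systems and unlisted hosts are both blocked."""
    host = urlparse(url).hostname
    return host is not None and host in EGRESS_POLICY.get(system, set())
```

Keeping the policy as plain data makes it easy to review in the same pull request as the integration it governs.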

Your emergency audit should commence immediately. You’ve got a 30-day window: scrutinize every existing integration with tools like ChatGPT. Don’t leave yourself scrambling when names start cropping up on lists of breached entities. The alternative is closing your business, asking for VC funding you can’t get, or worse, laying off staff who had no say in your tech choices. Remember, in this brave new world, protect first, integrate second.
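A first pass at that audit can be automated. The sketch below walks a source tree and flags lines that mention an AI API endpoint or what looks like a key. The patterns in `PATTERNS` are illustrative assumptions, not a complete inventory, and the helper names `scan_text` and `scan_tree` are hypothetical.

```python
import re
from pathlib import Path

# Illustrative patterns only; extend them for the vendors you actually use.
PATTERNS = {
    "openai_endpoint": re.compile(r"api\.openai\.com"),
    "api_key_variable": re.compile(r"OPENAI_API_KEY"),
    "hardcoded_key": re.compile(r"sk-[A-Za-z0-9]{20,}"),
}


def scan_text(text):
    """Return (line_number, pattern_name) for every match in the text."""
    findings = []
    for number, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((number, name))
    return findings


def scan_tree(root, suffixes=(".py", ".js", ".ts", ".env", ".yaml", ".yml", ".json")):
    """Scan matching files under root; returns {path: findings} for hits only."""
    report = {}
    for path in Path(root).rglob("*"):
        if path.is_file() and (path.suffix in suffixes or path.name == ".env"):
            findings = scan_text(path.read_text(errors="ignore"))
            if findings:
                report[str(path)] = findings
    return report
```

Running `scan_tree(".")` at the root of each repository gives a starting list of integrations to review by hand. It will miss anything routed through a wrapper library or an internal proxy, so treat an empty report as a prompt to look further, not as a clean bill of health.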

| Factor | Potential Benefits | Potential Risks |
|---|---|---|
| Cost Efficiency | Reduced operational costs by automating tasks and processes | Unexpected expenses from data breaches and security issues |
| Data Handling | Increased data-driven insights for decision making | Poor data handling leading to data leaks to competitors |
| Innovation | Enables faster innovation cycles with AI support | Risk of losing intellectual property through data leaks |
| Competitor Advantage | Staying ahead with the latest AI technology | Competitors might gain sensitive insights into your operations |
| Customer Trust | Enhances customer service with personalized interactions | Loss of customer trust due to data breaches |
| Regulatory Compliance | Compliance with industry standards using AI monitoring | Punitive fines and legal action from non-compliance with data protection laws |

The public really needs to be aware that AI systems like ChatGPT are not infallible. We like to think they operate in a vacuum, but they don’t. They rely heavily on massive datasets that can inadvertently expose sensitive information. And let’s face it, we’re all racing to build the latest AI but neglect the technical debt we incur—a huge mistake. Bugs and vulnerabilities can easily lead to data leaks. We’re putting too much faith in a system that’s still deeply flawed.


Look, it’s easy to criticize the technology when you’re not in the trenches building it. VC money floods into AI because investors see its transformative potential. We must keep pace with the demand, which sometimes means rapid prototyping. Yes, there are API costs, and yes, there are risks, but that’s why we have security protocols in place. The bigger picture shows AI is advancing society, not just exposing vulnerabilities. It’s a calculated risk every tech adopts when pushing boundaries.


Calculated risk? When does data safety take priority over profits? You talk about security protocols, but how many of these AI startups have been insufficiently prepared for a breach? ChatGPT systems have been proven to absorb and then regurgitate sensitive information, from personal data to trade secrets. This goes beyond money—ethical lines are being crossed, often without the end user’s knowledge. And let’s not forget, when that sensitive data leaks, it often lands in the hands of competitors, entirely compromising the integrity of businesses.


The advocate is right. You can pour in all the VC money you want, but if you’re not mitigating these inherent flaws, you’re simply building a house of cards. These software imperfections do more than inconvenience; they endanger livelihoods and privacy. The race to innovate should never overshadow the need for robust and secure code. Without significant improvements in data handling, we’re just biding our time until the next major breach.


People tend to focus on the few times things go wrong rather than the countless instances where our systems have revolutionized industries. Every technological advancement comes with its uncertainties, and adjustments are made as we learn. The key is improving data encryption and investing in real-time monitoring solutions to anticipate and counteract leaks, not halt progress altogether. We’re solving some of the world’s biggest problems with AI—let’s not lose sight of the forest for the trees.


For whom do these advancements exist if we cannot ensure basic privacy rights for individuals and businesses? Open-source AI means more eyes on the code, but it doesn’t translate into secure practices. Our priorities grow misaligned when we value what systems can achieve over how they act on and secure data. Only when the market prioritizes ethical standards above expediency will we witness technology we can truly stand behind.

The fundamental issue boils down to respect—for technology, for individuals, and for the data that represents them.

The truth about ChatGPT? It’s a ravenous data vacuum, and your whispers may fuel competitors without you even noticing. Droves of data are collected and left susceptible to leaks, and worse, they could be unwittingly strengthening your industry rivals. Remember the infamous OpenAI incident in March 2023? A bug exposed the titles of some users’ chat histories to other users. CEO Sam Altman admitted: “We had a significant issue”; translation: your intel was up for grabs. What’s even more sinister is the speculation that OpenAI’s competitors might be devouring $3 million worth of user data daily, unchecked.

Survival Guide: Assume every keystroke is a breadcrumb in someone else’s kingdom. Train your teams on compartmentalizing sensitive information and bolster your data anonymization—it’s not paranoia if the threat’s real. And, for heaven’s sake, read the fine print, or your next great idea might be greeting you from a rival’s press release. As one Redditor dryly put it, “AI doesn’t just listen; it remembers.” Be wise, or be forgotten.
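Anonymization can start as a small filter that runs before any prompt leaves your network. The sketch below masks email addresses and phone-like numbers with placeholders. The two regular expressions are deliberately simple assumptions and will miss names, addresses, account numbers, and most other identifiers.

```python
import re

# Deliberately simple patterns; real PII detection needs far more than this.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(prompt):
    """Replace email addresses and phone-like numbers with placeholders."""
    prompt = EMAIL.sub("[EMAIL]", prompt)
    return PHONE.sub("[PHONE]", prompt)
```

Calling `redact()` on every outbound prompt is a floor, not a ceiling: it removes the most obvious identifiers, while the compartmentalization training mentioned above has to cover everything a regex cannot recognize.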