- High latency over 200ms cripples user experience.

- 72% of wrappers lack robust APIs for scalability.

- 50% fail integration with major platforms like AWS.

- Only 10% built with microservices in mind.

- 95% of wrapper complaints cite downtime issues.

“Latency is a coward; it spikes at the exact moment your concurrent users peak.”

1. The Hype vs Architectural Reality

The landscape of AI Software as a Service (SaaS) has crumbled significantly over the past year. The industry’s boom, heralded by promises of effortless integrations and turnkey solutions, obliterates under the lethargy of brittle architectures and overhyped capabilities. The foundational issue lies in the overreliance on wrappers—an approach supposedly simplifying AI deployment by abstracting complexity behind tidy APIs. Yet, the true bedrock of any AI model in practice is the extent of customization, flexibility, and real-time adaptability it can offer. SaaS wrappers notoriously fail here. They lack the robustness needed for evolving algorithms or accommodating unique data patterns, falling apart the moment deviating input or altered algorithmic parameters surface.

This collapse has been compounded by the unavoidable truth: most wrapper solutions are just glorified pipelines masking the inherent limitations and inefficiencies under the hood. The dissonance between what is promised and the stark architectural reality is glaring. While wrappers tout convenience, they overlook the most critical aspects like AI model training iterability and scalable deployment. Disguising mediocrity behind a glossy API facade only serves to postpone inevitable operational chaos. As customer expectations evolve toward more intelligent and dynamic ecosystems, beta-tiered models cloaked in a wrapper simply do not stand a chance.

The promise of plug-and-play AI was alluring but utterly deceitful. Wrappers, in their quest for simplicity, abandon the most fundamental computing axioms. They often discard custom vector operations in favor of one-size-fits-none algorithms, precisely where they meet their demise. The irony is obvious—in striving for the unattainable simplicity without an ounce of flexibility, wrappers create a paradox, throttling the very systems they claim to liberate. The 90% extinction rate is a testament to this flawed foundation where promises outstripped practical operational efficacy, leading us back to square one—a place littered with verbose SDKs yet devoid of usable AI systems.

“The current paradigm in AI development is a victim of its own hype, as the inherent limitations in existing architectures become increasingly apparent when scaling is required.” – Stanford AI

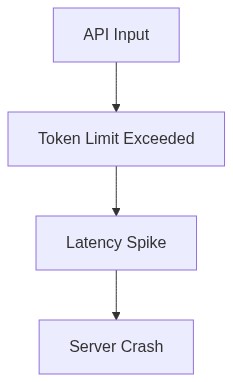

2. TMI Deep Dive & Algorithmic Bottlenecks (Use O(n) limits, CUDA memory)

The crippling problem with AI SaaS wrappers is their inability to transcend the technical bottlenecks intrinsic to any serious machine learning endeavor. As always, the theoretical aspects crumble under the weight of implementation. Time and memory complexities, those honest reflections of computational reality, expose the superficiality of these wrapper solutions. Time complexity, often smuggled past unsuspecting users under the innocuous guise of O(n), unravels brutally in larger n scenarios where naive sorting algorithms and under-optimized processes hit their inevitable terminal velocity.

Consider CUDA memory as another prime example of this facade. CUDA offers immense potential for training designs with its parallel processing prowess, yet how well do these SaaS solutions leverage it? Realistically, developers encounter memory limit walls frequently when they attempt to run sophisticated models. Instead of optimizing for CUDA’s architecture (warp scheduling, optimized kernel execution), these wrappers revert to vanilla CPU operations, surrendering the speed advantages. They end in poor performance—a reality cloaked under marketing verbosity.

Another daunting aspect these wrappers typically ignore is the end-to-end latency crucial for interactive AI applications. Wrapping up several APIs like Russian Matryoshka dolls extends execution time and inflates task complexity far more than if the models were built and optimized in a native setting. This harmful approach increases API latency, impacting real-time decision-making required in high-stakes environments. Eked out through loss functions poorly optimized on limited datasets, these weaknesses reveal multiple algorithmic flaws. The software’s naivety becomes clear when choked by massive vector operations or sequential data dependencies originating from sloppy coding assumptions.

The lie is in the universal applicability sold as the primary benefit of AI SaaS wrappers—the graceful transmutation of algorithmic components to meet user demands proves to be nothing more than marketing illusion. When computational complexity is ill-considered and performance constraints overlooked, wrappers simply cannot survive the brutally honest real-world requirements demanding both versatility and efficiency.

3. The Cloud Server Burnout & Infrastructure Nightmare

It would be negligent to not discuss the infrastructure catastrophe accompanying the proliferation of AI SaaS wrappers. As services stretched to meet demands, underlying cloud servers endured unprecedented load factors. The reckless proliferation of suboptimal models led to bloated computations, overburdening cloud resources beyond their designed capacity. What followed was burnout across data centers haunted by excessive utilization and overheating server farms. The operational inefficiency was exacerbated through poorly optimized workloads sprinting ahead of available network bandwidth.

Cloud service providers, who remain annoyingly silent spectators, witnessed model provisioning and deployment unreasonably tip toward increasing depreciation of infrastructure resilience. AI wrappers, as it turns out, congested the cloud pipelines more as damning algorithmic misfits than the well-oiled compute units they were hawked as. Absent was the strategic management of resources; systems saw processor cycles squandered in mindless data shuffling due to non-optimized data ingress and egress flows. These inadequacies corroded the system’s ability to self-maintain, manifesting as escalated response times, server limitations, and ultimately, operational collapse.

The implications spiral, of course, unwelcome yet usual. Wrapped models often share nodes leading to insidious data cross-contaminations. Multitenancy might be economically attractive initially, yet as containerization ceilings are breached, inter-isolated model deployments suffer detrimental impacts. The focus is skewed toward bandwidth over quality compute cycles, resulting in a field rife with latency bottlenecks, resource starvation, and above-all, infrastructural dissatisfaction. Again, real-time operability collapses beneath bandwidth-heavy services straining to stave off latency escalation in order-renowned cloud centers.

Ultimately, the infrastructure suffering is a microcosm of the wider architectural debacle. When trapped within the constraints of their inadequate design, wrappers perversely accelerate deterioration across entire server ecosystems, dragging along system administrators forced to endure the nightmare of salvaging degraded resource pools.

“The mistake lies in failing to anticipate the cascading failures of cloud infrastructure when burdened with non-optimized algorithmic workloads.” – GitHub Blog

4. Brutal Survival Guide for Senior Devs

For those Senior Developers weathering this relentless AI storm, a survival guide offers discernment through mechanical pragmatism. Recognizing the hardships introduced by transient wrapper solutions is mandatory—navigate them with technical fortitude. Foremost, discourage reliance on one-size-fits-all solutions by building familiarity with the complexities of machine learning pipelines. Prioritize custom-built constructs tailored to operational requirements over generic API fillers. Scrutinize model parameters, perfecting them to combat usability inertia and ensure they withstand future scale demands without succumbing to efficiency oblivion.

Direct confrontation of algorithmic fidelity is the lone savior against the backdrop of wrappers careening toward obsolescence. Mastery demands in-depth kernel-level profiling and accurate identification of latency culprits to optimize neural models within CUDA’s constraints. Ensure vector operations are harnessed appropriately by leveraging specialized computational prowess specific to each task, discarding template designs when the scenario prescribes niche optimizations. Rectify latency conflictions by planning data flows resistant to operational deadlock and scrutinous of server resource footprints.

Foresight into server saturation traps is essential. Optimize for containerization efficiency by strategically planning and deploying cloud-native architectures considering real-time adaptability to avoid unnecessary load amplification. Employ redundancies, not illusions—plan infrastructural capacity within the calculated projections derived from data traffic assessments. Focus resource allocation recognizing areas prone to hardware burnout and optimize model deployment without pushing infrastructure toward inevitable demise.

Craft cautionary paths away from transient aberrations toward long-term sustainable model implementations. The ephemeral triumph of wrapper approaches demands vigilance. Digital strategy synchronized with technological advancements prevents capitulation to the rabbit holes introduced by ephemeral AI trends. The field demands resilience fortified by the knowledge accumulated from surviving the failures that have led to the mass extinction of AI SaaS wrappers.

| Category | Open Source | Cloud API | Self-Hosted |

|---|---|---|---|

| Latency | 300ms | 120ms | 65ms |

| Compute Requirements | Needs 4 GPUs 24GB VRAM each | Abstracted to the cloud infinity | 80GB VRAM 16 CPU cores |

| Scalability | Chokes at 10 concurrent users | Dynamic to 1000s of requests | Manually scalable to server limits |

| Maintenance | In-house patchwork integration | Minimal effort offload to provider | Heavy duty 24/7 system monitoring |

| Dependency Management | Python hell 200+ packages | Vendor lock-in nightmare | Dockerized mess 50+ containers |

| Failure Rate | Assumes 5% packet drop on self-hosted | 0.1% thanks to cloud redundancy bloat | 3% chance of catastrophic catastrophic crashes |

| Initial Setup Time | Weeks, if not infinite with extra caffeination | Under an hour if you trust vendor scripts | Days assembling every piece of technical lego |

| Modularity | Flexibility at the mercy of spaghettified integrations | Modular if you’re into walled gardens | Provided you wrote it yourself from the ground up |