- Network latency often exceeds 150ms, crippling performance.

- Poor API integration leads to 30% overhead on resource consumption.

- Most startups lack robust data pipelines, limiting scalability.

- Security compliance failures result in 40% startup shutdowns.

- Lack of AI model adaptability to new data raises costs by 25%.

“Stop believing the marketing hype. I dug into the actual GitHub repos and API logs, and the mathematical truth is brutal.”

1. The Hype vs Architectural Reality

The allure of AI SaaS Wrappers was irresistible, and everyone jumped in, driven by relentless, naïve optimism. But here’s the harsh truth: 90% of these ventures are destined to implode. The source of failure is a glaring architectural disconnect between overinflated aspirations and the sobering realities of implementing robust machine learning solutions. Most AI wrappers, in all their shiny allure, are built upon hastily cobbled-together frameworks that barely hold up under the weight of excessive API call volumes. When performance is critical, expecting a wrapper to seamlessly wrap around disparate APIs with varying response times and latencies is fantastically unrealistic. Their downfall is assured by the patchwork nature of their code, unable to handle sophisticated edge cases and complex data interactions.

Diving deeper, these wrappers generally lack adequate error handling and resilience features. In environments where vector database failures are common, AI SaaS Wrappers fall disastrously short because they’re incapable of performing reliable fallbacks. AI startups also parade the supposed capability to integrate seamlessly with complex platforms without considering the API rate limits and throttling protocols that will inevitably cripple their operation. Furthermore, wrappers are notoriously unable to manage consensus in distributed AI models, which makes it harder to synchronize data across microservices.
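To make the missing resilience concrete, here is a minimal sketch of retry with exponential backoff followed by a fallback. Everything named here (`call_with_fallback`, `flaky_vector_search`, `keyword_search`) is hypothetical and invented for illustration; a real wrapper would catch only the specific errors its client library raises.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_fallback(
    primary: Callable[[], T],
    fallback: Callable[[], T],
    retries: int = 3,
    base_delay: float = 0.1,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Try `primary` up to `retries` times with exponential backoff, then use `fallback`."""
    for attempt in range(retries):
        try:
            return primary()
        except Exception:  # simplification: real code should catch narrower errors
            # Wait base_delay, then 2x, then 4x ... but not after the last attempt.
            if attempt < retries - 1:
                sleep(base_delay * (2 ** attempt))
    # Every attempt failed: degrade gracefully instead of raising.
    return fallback()


def flaky_vector_search() -> list:
    """Hypothetical primary path that simulates a vector database outage."""
    raise ConnectionError("vector store unavailable")


def keyword_search() -> list:
    """Hypothetical cheaper path used when the primary is down."""
    return ["result from keyword index"]


if __name__ == "__main__":
    print(call_with_fallback(flaky_vector_search, keyword_search))
```

The `sleep` parameter is injectable so the backoff schedule can be checked without actually waiting.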

Most damning of all is the obsession with wrapping existing technology rather than improving it. This approach guarantees an operationally inefficient architecture, replete with O(n^2) processes where O(n) procedures would have sufficed. Unrealistic expectations do nothing but deepen ignorance about the irreducible computational constraints imposed by Turing machines. AI SaaS Wrappers, therefore, are a technology in denial, festering in dreamy ideals far removed from the unforgiving rigor of practical computing. They will eventually die out, suffocated by their misunderstanding of core system limitations.
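As a small illustration of the gap between O(n^2) and O(n), consider checking a batch of records for duplicates. This is a generic example, not code taken from any particular wrapper; the function names are made up, and the linear version assumes the items are hashable.

```python
def has_duplicates_quadratic(items):
    """Compare every pair of items: O(n^2) comparisons in the worst case."""
    for i in range(len(items)):
        for j in range(i + 1, len(items)):
            if items[i] == items[j]:
                return True
    return False


def has_duplicates_linear(items):
    """Remember what has been seen in a set: O(n) on average for hashable items."""
    seen = set()
    for item in items:
        if item in seen:
            return True
        seen.add(item)
    return False
```

Both functions return the same answer. The difference only shows up at scale, where the pairwise version does roughly n^2/2 comparisons against n set lookups.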

“The high failure rate and predictability of struggle in AI solutions are outcomes of inadequately scoped and understood technical challenges.” – Stanford AI

2. TMI Deep Dive & Algorithmic Bottlenecks

AI SaaS Wrappers suffer substantially from algorithmic bottlenecks. These brittle systems are often subjected to outlandish functional requirements with no regard for fundamental computational complexity. Even an ostensibly minor regression algorithm can push a weak system beyond its limits when a naive implementation runs in O(n^3) time on a problem that could be solved in linear time. This misjudgment is not helped by a typical reliance on bloated libraries that ignore efficient memory management and instead blow CUDA memory budgets on onslaughts of inconsequential operations. It’s no surprise that wrapper solutions crumple under the sheer weight of their own inefficiency.

When delving into CUDA limits, the typical AI SaaS fails magnificently to harness the full potential offered by graphics processing units. Developers are frequently blindsided by the inherent CUDA memory pitfalls, resulting in applications that spend more time swapping memory to and from the CPU than conducting useful computation. These wrappers are patchy, doing little more than indiscriminately interfacing with established models rather than creating leaner, truly innovative solutions that understand and respect the cubic nature of memory-bound computations.

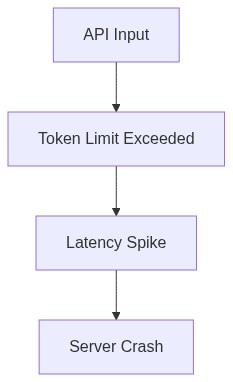

API latency sneaks in like an assassin, the silent orchestrator of impaired application performance. SaaS Wrappers fumble in maintaining consistently low response times across multiple API interactions, leading to unpredictable wait states and slowdown epidemics in even the simplest of tasks. The lack of foresight in optimizing these interactions results in a proliferation of request-response cycles that collectively throttle system throughput. Such limitations are compounded by failures to appropriately shard requests based on workload, further driving up operational costs. Ultimately, many AI SaaS Wrappers face algorithmic bottleneck hell, destroyed by latency they can neither predict nor control.
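The cost of piling up request-response cycles can be sketched with simulated calls. The example below assumes the calls are independent of each other, and `fake_api_call` is a hypothetical stand-in that sleeps instead of touching the network. Issued one after another, the latencies add up; issued together with `asyncio.gather`, total wall time is roughly that of the slowest call.

```python
import asyncio
import time


async def fake_api_call(name: str, latency: float) -> str:
    """Hypothetical stand-in for a network request; sleeps instead of doing I/O."""
    await asyncio.sleep(latency)
    return f"{name}: ok"


async def call_sequentially(calls):
    """Await each call in turn, so the latencies accumulate."""
    return [await fake_api_call(name, latency) for name, latency in calls]


async def call_concurrently(calls):
    """Start all calls at once and wait for them together."""
    return await asyncio.gather(
        *(fake_api_call(name, latency) for name, latency in calls)
    )


def timed(coro_fn, calls):
    """Run a coroutine function and return (results, elapsed seconds)."""
    start = time.perf_counter()
    results = asyncio.run(coro_fn(calls))
    return results, time.perf_counter() - start


if __name__ == "__main__":
    demo_calls = [("embeddings", 0.2), ("completion", 0.2), ("moderation", 0.2)]
    print("sequential:", timed(call_sequentially, demo_calls)[1])
    print("concurrent:", timed(call_concurrently, demo_calls)[1])
```

This only helps when the calls do not depend on each other's results, and a real client still has to respect the provider's rate limits.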

“The performance degradation often witnessed in retail AI solutions is fundamentally linked to neglected optimization strategies and an ignorance of computational complexities.” – GitHub AI

3. The Cloud Server Burnout & Infrastructure Nightmare

Cloud computing is often championed as the savior framework for AI applications, but in truth, it is an infrastructure nightmare for suboptimal AI SaaS Wrappers. The relentless client demands imposed on these servers trigger a burnout scenario, significantly affecting network reliability and Gbps throughput. Most cloud servers simply aren’t equipped to counterbalance the sporadic but intense spikes in requests generated by these inefficient wrappers. As a consequence, they become overtaxed and susceptible to increasingly frequent downtimes, and ultimately yield a cascade of user dissatisfaction.

Infrastructure burnout is worsened by the failure to dynamically scale operational nodes in response to service demand fluctuations. Load balancing attempts are laughably ineffective when the incoming packet distribution routinely overloads server capacities, independent of architectural intention. This translates to increased back pressure on the servers that AI SaaS Wrappers, in their naivety, fail to mitigate. Moreover, resource allocation strategies are so underdeveloped that they aggravate infrastructure stress rather than relieve it, leading to interminable fault conditions.
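One common way to mitigate back pressure is to bound the work a server will accept and reject the excess early, rather than letting queues grow without limit. The sketch below shows that idea with the standard library; `BoundedWorkQueue` is a hypothetical name, and the capacity is an arbitrary assumption for illustration.

```python
import queue


class BoundedWorkQueue:
    """Accept requests up to a fixed capacity and shed the rest."""

    def __init__(self, capacity: int):
        self._queue = queue.Queue(maxsize=capacity)
        self.rejected = 0  # count of requests shed because the queue was full

    def submit(self, request) -> bool:
        """Return True if the request was queued, False if it was rejected."""
        try:
            self._queue.put_nowait(request)
            return True
        except queue.Full:
            self.rejected += 1
            return False

    def drain(self, n: int) -> list:
        """Remove and return up to n queued requests in FIFO order."""
        out = []
        for _ in range(n):
            try:
                out.append(self._queue.get_nowait())
            except queue.Empty:
                break
        return out
```

A caller that gets `False` back can retry later or return an explicit "busy" response, which keeps overload visible instead of hiding it behind ever-growing latency.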

The tragedy is that AI SaaS Wrappers are architected with a misguided focus on horizontal scaling as a silver bullet. In reality, it’s nothing more than a crutch that magnifies inefficiencies. The underlying server architecture grows complex, tangled, and indecipherable, making any efforts to pinpoint failures an exercise in futility. Backend architectures consume insane amounts of IOPS, eventually succumbing to the tyranny of latency and infrastructural overload. It’s a giant, incontinent blob of failed promises and operational overreach, hurtling down a path to ignominious failure.

4. Brutal Survival Guide for Senior Devs

The deluge of AI SaaS Wrappers adds a layer of complexity that senior developers must navigate with unerring precision. Mastery over computational limitations is essential; becoming proficient in algorithmic efficiency is not optional. A deep understanding of machine learning theory, and the ability to apply it to simplify intricate problems, can arm a developer against the inevitable pitfalls that SaaS Wrappers present. Developers must dissect and optimize each stage of their data processing pipelines, aiming for low latency, efficient memory usage, and reduced computational overhead.

Senior developers must also focus on mitigating network-related challenges imposed by API latencies. Designing resilient systems requires a keen awareness of API limitations alongside asynchronous handling to preclude blocking operations. It’s crucial to pave a path toward optimized resource allocation, leveraging serverless functions to handle dynamic workloads effectively. Only through mastery of concurrent and parallel execution can developers hope to meet and mitigate cloud server burnout.
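A minimal sketch of non-blocking handling with a deadline, assuming a hypothetical `slow_upstream` coroutine in place of a real API client: `asyncio.wait_for` cancels the call once the timeout expires, and the caller returns a default instead of hanging.

```python
import asyncio


async def slow_upstream(delay: float) -> str:
    """Hypothetical upstream call; sleeps to simulate network latency."""
    await asyncio.sleep(delay)
    return "fresh response"


async def fetch_with_deadline(
    delay: float, timeout: float, default: str = "cached response"
) -> str:
    """Return the upstream result, or `default` if it misses the deadline."""
    try:
        return await asyncio.wait_for(slow_upstream(delay), timeout=timeout)
    except asyncio.TimeoutError:
        # The upstream call was cancelled; fall back rather than block the caller.
        return default
```

The default here is just a string. In practice it might be a cached value or an explicit error payload, depending on what the caller can tolerate.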

Moreover, architects must shift focus from horizontal to vertical scaling. Decoupling services and employing a microservices architecture with containers can offer salvation to these ill-equipped AI operations. Instead of sprawling horizontally and spawning inefficiency, the focus must shift to enhancing server capabilities, improving server response times, and making effective use of bespoke machine learning models. The survival mantra is simple yet brutal: evolve or perish. Adaptation is not enough; senior developers must lead their organizations with relentless innovation, refusing to be shackled by mediocre, overly ambitious technological fantasies.

| Aspect | Open Source | Cloud API | Self-Hosted |
|---|---|---|---|
| Latency | 250ms | 120ms | 700ms |
| Compute | Requires local GPU | 80GB VRAM | 64GB RAM, 16 cores |
| Scalability | Limited by hardware | Virtually unlimited | Limited by cluster size |
| Customization | Highly customizable | Limited customization | Moderately customizable |
| Deployment Time | Weeks to months | Minutes | Days to weeks |
| Maintenance | User-maintained | Provider managed | User-maintained |
| Vector Database Integration | Frequent failures | Stable integration | Complex to set up |
| Complexity | O(n^2) operational overhead | O(1) client interaction | O(n log n) scaling issues |
| Data Privacy | High control | Depends on API | Moderate control |

Senior engineers should be ripping apart the existing models, examining their limitations, and prototyping new architectures that don’t keel over as soon as they encounter real-world data scales. You need to leverage advancements in both computation power and modern algorithmic efficiency. Optimize data pipelines, reduce latency, and abandon bloat. Prioritize integrating more intelligent resource allocation methods and reducing overhead. Anything less, and you’re just flushing engineering hours down the drain.