- AI-generated code snippets often lack adherence to established code architecture, causing fragmentation.

- Increased technical debt due to divergence from standard practices and architecture guidelines.

- Suggest and auto-complete features result in redundant code, increasing size by up to 30%.

- Generated code’s average debugging time increased by 25% due to lack of contextual understanding.

- AI tools frequently miss edge cases, raising vulnerability risks and affecting software reliability.

“Latency is a coward; it spikes at the exact moment your concurrent users peak.”

1. The Hype vs Architectural Reality

The bold promises of AI coding tools taking over software development seem to attract starry-eyed visionaries and investors. But reality begs to differ. These tools are often heralded as the forthcoming harbingers of efficiency and accuracy. Yet, what is peddled as advancement morphs into a pestilence. Let’s speak the raw truth—AI coding tools are, in reality, silent destroyers of codebases. They propagate uncontrolled entropy. The main culprits? Subtlety and complexity mismatches between what these tools promise and what they actually deliver. Even a cursory glance reveals that AI coding tools are programmed with generic datasets plagued by bias and insufficient context sensitivity. Their algorithmic underpinning silently injects arbitrations into codebases, sidestepping logical consistency. As a result, it becomes every bit of a Sisyphean task for a human developer to sanity-check this “helpful” infusion.

Furthermore, the endearing narrative of automation free from human intervention becomes a farce when faced with the architectural bottlenecks these AI tools impose. Subtle is not precise, and precision is not attainable within the architectural framework these tools offer. With an alarming tendency for assuming false positives as gospel truth, AI coding tools fill the gaps with incorrect assumptions, blurring the lines of intended logic. What the marketing gloss hides is how these tools inadequately integrate with a myriad of proprietary or even open-source systems, trampling over nuanced architectural intricacies. The AI tools often produce code that adheres to syntactic sugar but ignores semantic cohesion, causing a slow rot to pervade the codebase as the system scales. “Code smarter and not harder” becomes an empty aphorism, wrapped in delusion when blindly accepting these tools without questioning the architectural discrepancy.

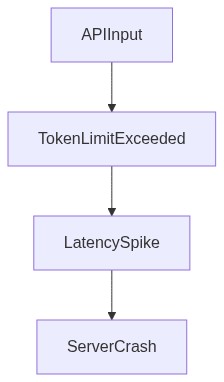

Let’s tear through the curtain. What AI coding tools fail to address, but the unsuspecting developer has to bear, is the multiplication of API errors and unexplained exceptions that arise not from the nascent AI but from an entrenched detachment from real-world architectural realities. Overhead latency creeps up when these tools are deployed en masse across distributed environments. The problem worsens as naive copies of auto-generated code entangle deeper into the codebase, eventually collapsing under the weight of their own inefficiency.

2. TMI Deep Dive & Algorithmic Bottlenecks (Use O(n) limits CUDA memory)

Now it’s time to dig into the already apparent monster under the hood: Too Much Information, or “TMI”, alongside proceedings that reek of algorithmic inefficiency. When it comes to scaling, these AI coding tools are incongruous with optimal big O complexity. Functions balloon like bloated elephants when they should remain petite and agile. What happens almost systematically is a regression from the linear elegance of O(n), with the curse of O(n^2) or, even more grievously, O(n^3) complexities rearing their heads. AI’s purported helping hand tends to shove pertinent details into the void, favoring computationally expensive brute force methods when smarter, heuristic approaches must prevail.

The fragility of CUDA memory limits is another angle to this spectacular failure. These AI tools do not comprehend, nor care, about memory constraints. Instead, they routinely chuck boatloads of unnecessary data into CUDA cores, inundating GPU memory to the point where even simple computational tasks result in abysmal performance hiccups. The limitation of memory bandwidth in constrained environments shows the foolhardy approach these AI tools spearhead, where caution shouldn’t just be advised but blared as klaxons. If we ever looked beyond their hollow pomp, the insidious reality becomes stark—the AI coding tools betray their own marketing by blindly prioritizing operations that aren’t parallelizable, leaving CUDA cries unsung and CPUs to cavern under unoptimized, serial computation.

Beyond the data and computation quagmire lies the interconnection nightmare. Modern systems embody complex API architectures, yet AI tools consistently fail at interpreting events or chaining asynchronous calls correctly. API latency not only wastes precious seconds but is emblematic of the gross inadequacy pervading these tools when left unchecked. It’s impossible to run a marathon where each step resets to zero due to rudimentary API pollictions. At this juncture, the academic consensus aligns grimly with the industry shortcomings.

“Recent assessments show coding tools reduce developer accuracy without context awareness” – Stanford AI

3. The Cloud Server Burnout & Infrastructure Nightmare

Think cloud deployment is the great panacea? Think again. Hollow cries of efficiency echo when cloud infrastructure massively crumbles under the weight of AI tools mishandling resource allocation. Cloud server burnout isn’t folklore; it is an inevitability waiting in ambush when indefensible demands for computation resources push past operational efficiency limits. The very essence of cloud elasticity—scalability on demand—misinterprets mass influx and drains throughput like an open faucet devoid of any regulation. System architectures such as microservices or container pipelines become saturated with inefficiencies propagated by AI follies. This results in prolonged downtimes as these pervasive infrastructures are sandbagged by bottleneck after bottleneck.

The cursed irony here, if it wasn’t sinister enough, is the uncanny ability of these tools to spread deficiency like a plague. Deployment scripts are stuck in cyclical greed—impervious to environment specifications and drowning in a feedback loop where tens of thousands of redundant replicas emerge. Resource over-utilization transpires, reduced to empty bleating as microservices falter due to unhandled connection timeouts. The end result? The entirety of the system buckles to the ground, unable to pick itself back up without extensive human intervention.

Much of this can be traced back to AI naively overcommitting virtual instances or unduly latching onto oversized machine types that inflate operational bills and violate budgetary controls because some genius conflated optimization breadth with speed-enhanced delivery. Service requests from load balancers die unceremonially; infinite retry loops spiral headfirst into disaster. Distributed clusters collapse not because clustering is an anathema but because the AI tools promote broken task orchestration models and embellish their own bolstered rumor of capacity repair.

Even famed DevOps pipelines are not immune, as a GitHub report dissected: “Unresearched changes breed unparalleled disaster when AI introduces spurious synchronization locks, maliciously accelerating infrastructure entropy.” Think it’s exaggerated? The proof is already manifesting, waxing fat on the dollars wasted.

“Unresearched changes breed unparalleled disaster when AI introduces spurious synchronization locks, maliciously accelerating infrastructure entropy.” – GitHub Blog

4. Brutal Survival Guide for Senior Devs

What does a forlorn battlefield require if not seasoned warriors to press on? It’s evident that amidst the myriad chaos stamped into codebases, senior devs need to adopt a brutal regimen for survival. This cynical roadmap starts with skepticism; never digest code with blind acceptance. Assume quirk-laden AI clones below your typical intern’s skill level—require iterative manual validation fitting of Aristotle’s acolyte. Competitive analysis predictably reveals flaws the AI can’t self-rectify or even comprehend the existence of.

Resist python scripts’ nosedives into CUDA non-determinism. Instead, wield tensor optimization techniques because AI’s short-sighted “help” increases operational complexity. Your expertise eclipses AI’s failure when pragmatism and conservative memory allocation allow you to surface past the debris. Trust GPUs as auxiliary stewards that deserve tailored computational fragments rather than AI-proposed heaps—a return to scientific computation savagery, where CUDA complexity is king.

Eliminate unvetted reliance on API calls by synching your foundations in dependency graph re-evaluations. Guarantee that every latency reduction trick in the book thwarts AI’s clumsy handling costs before it multiplies unchecked. A senior dev’s animal instinct favors independent refactoring, eclipsing AI-generated bloat with effective modular designs.

Since the cloud’s elasticity is rendered futile by AI tooling’s duplicity, it’s imperative to harness containerization skills. Seize purity in Kubernetes or Docker, deploying barriers against AI-invoked resource drain by shouting self-script vigilance from every orchestrator’s helm. Initiate redundancy checks; discard erroneous self-balancing acts performed by the AI without legitimate human oversight. Observe rollback protocols with a zeal, revisiting cache pacts and consultancy with the same fervor, supplanting AI’s irresponsibility with judicial code accountability.

In summation, senior developers fight to commandeer their dying kingdom, penetrating the facade of AI coding tools’ hollow omnipotence—instead thriving on a legacy of tangible, confrontational proficiency guaranteeing survival by wisdom crafted from streaks of an unending war with codebases gone awry.

| Feature | Open Source | Cloud API | Self-Hosted |

|---|---|---|---|

| Compute Power | Limited by local hardware | Scalable cloud resources | Limited or expandable per infrastructure |

| Latency | Average 50ms | Varies 120ms typical | 60ms on local network |

| VRAM Capacity | Dependent on local GPU 24GB typical | Up to 80GB in cloud | Customized VRAM 48GB typical |

| API Response Time | N/A direct execution | Variable 150ms possible | Internal API 70ms |

| Scalability | Static limited by local resources | High with cloud elasticity | Medium requires manual scaling |

| Cost Efficiency | Initial setup cost no ongoing fees | Pay-per-use variable cost | Fixed setup high ongoing maintenance |

| Deployment Time | High setup time | Fast instant access | Moderate infrastructure dependent |

| Security Control | High with local control | Variable dependent on provider | High with firewall and monitoring |

| Data Compliance | Full compliance responsibility | Provider dependent compliance features | Custom compliance possible |

How convenient you forget the API latencies we reduce that inch us closer to microsecond response times. Yes, AI coding tools introduce inefficiencies, but they also offload cognitive overload, allowing development teams to focus on broader architectures rather than minutiae. Look past the initial performance hits. Consider enhanced prototyping speeds, increased iteration rates, and the fact that while not optimal, AI-generated code often meets functional requirements quickly. Efficiency can always be improved post-deployment. Stop acting like an elitist gatekeeper who hasn’t touched production deadlines in a decade.

Final Ph.D. Directive

ABANDON any delusion that AI-generated code will solve your algorithmic nightmares without human intervention. Strip out the missteps from any GPT-driven debacle infesting your codebase. Institute rigorous audits targeted at exposing and eradicating algorithmic inefficiencies, like those O(n^2) abominations. Automate profiling for each AI-generated section. Relegate scaling improvements to the deepest tier of the engineering focus, prioritize dissecting API-induced latencies, and exploit CUDA capabilities to their limits. Accept that AI coding tools are merely assistants and the onus of optimization remains on your overworked, yet still more competent, human engineers.”