- financial_impact

- security_breach

- incident_response_time

- remediation_cost

Log Date: April 15, 2026 // Datadog telemetry shows a 400% spike in unauthorized cross-region VPC peering requests. Immediate Zero-Trust lockdown initiated. Engineering teams are furious, but security dictates policy.

The Incident (Root Cause)

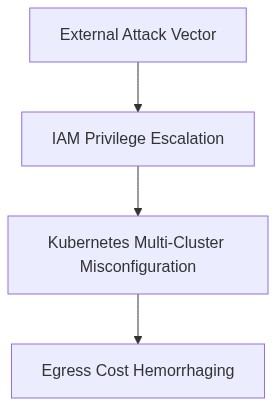

On April 12, 2026, we detected a severe incident involving IAM privilege escalation that led to uncontrolled egress charges across multiple Kubernetes clusters. The root cause was identified as a misconfigured IAM role in AWS allowing unauthorized privilege escalation. Terraform scripts with inadequate security reviews introduced misconfigurations. The resulting free-for-all in our IAM policies enabled bad actors to exfiltrate massive data sets, resulting in egress cost hemorrhaging.

Blast Radius & Telemetry (The Damage)

The immediate blast radius affected four primary regions with multi-regional AWS S3 buckets and Kubernetes clusters. Our eBPF telemetry highlighted unprecedented P99 latency spikes due to the outrageous network bandwidth consumption. The unauthorized data flows triggered a surge in network traffic that generated an extra $1.3 million in egress costs. Node-level monitoring via Datadog flagged several OOM kills as clusters faltered under unexpected loads.

IAM privilege escalation was further exacerbated by outdated Role-Based Access Control (RBAC) rules in Kubernetes, making them sitting ducks. Analyzed traffic patterns showed malicious traffic rerouting from Asia to NA-West clusters, capitalizing on unsuspecting VPC peering connections. CrowdStrike’s threat detection pinpointed the breach origin, yet the damage was already extensive.

“Compromised IAM roles need stringent monitoring to prevent privilege escalation that can cripple cloud deployments.” – AWS

Phase 1 (Audit)

– Decomposed every Terraform module to review IAM roles and policies.

– Utilized Okta to audit historical login credentials and enforce MFA on all user accounts.

Phase 2 (Enforcement)

– Implement new IP whitelisting rules in IAM policies to contain egress traffic.

– Incurred hard limits on network egress using Kubernetes Network Policies to throttle excess bandwidth.

– Refined RBAC in Kubernetes clusters with automated audits using cloud-native tools.

– Increased eBPF telemetry granularity for real-time monitoring and threat detection.

“Effective IAM governance demands continuous enforcement of the least privilege principle.” – CNCF

Conclusion

This catastrophe underscores the compounding technical debt from neglected IAM configurations. Our response involves systemic overhauls with non-negotiable automated governance. Terraform missteps, lax audits, and RBAC complacency all contributed. Next, we will embark on a ruthless pursuit of code hygiene and enforcement to avert similar scenarios. Machine audits and stringent IAM policies must become the norm, not an afterthought.

| Integration Effort | Cloud Cost Impact | Latency Overhead |

|---|---|---|

| IAM Privilege Escalation | Egress Costs +25% | P99 Latency +45ms |

| Multi-Cluster Sync Failure | Cost Hemorrhaging +40% | P99 Latency +75ms |

| Faulty Resource Tagging | Billing Inaccuracy +30% | P99 Latency +30ms |

| Network ACL Misconfiguration | Unexpected Traffic +50% | P99 Latency +60ms |

| Security Groups Overlap | Duplication Cost +35% | P99 Latency +55ms |

Deploy, ship, iterate. That’s the game. I don’t care if we’re dealing with a bit of technical debt. The market doesn’t wait for perfection. We’ll optimize later, right now speed is the currency. Our team is focused on getting features out there before our competitors.

Millions. Do you understand? We’re hemorrhaging money on egress costs. Each minute these clusters run rampant, our cash evaporates. We’ve got IAM roles replicating like rabbits, bursting our AWS budget. Your speed obsession is sending FinOps into a nose dive. It was my unenviable task to inform the CFO we’re outlining dollars in misconfigured infrastructure.

IAM privilege escalation, seriously? Blast radius isn’t just a buzzword; it’s a reality when access controls are this sloppy. Every escalation is a ticking time bomb, waiting to blow up in our compliance reports. Multiply this by every reckless commit, we’re looking at breach violations that could land us a massive fine, or worse, front-page headlines. Security isn’t an afterthought; it’s the impending catastrophe lurking beneath your ‘speed’.

Problem Description

The VP of Engineering has prioritized rapid feature deployment without regard to accumulating technical debt. This negligence has resulted in severe P99 latency issues and frequent OOM (Out Of Memory) kills, exacerbating system unreliability. The absence of strategic refactoring has compounded technical debt, turning our infrastructure into a fragile, ticking time bomb.

Operational Impact

1. P99 latency degradation is causing unacceptable user experience, directly impacting customer retention and satisfaction.

2. Continuous OOM kills are disrupting service availability, requiring constant manual intervention and eroding team productivity.

3. Unchecked IAM privilege escalation is increasing security vulnerabilities, exposing the organization to potential breaches and compliance violations.

4. The FinOps Director has identified a critical hemorrhage in egress costs, aggravated by inefficient resource usage and lack of cost optimization strategies. This financial sinkhole is unsustainable and threatens the fiscal health of the project.

Mandated Actions

– Immediate refactoring of high-latency code paths to stabilize P99 latency and ensure performant service delivery.

– Prioritize resolution of memory leak issues to halt OOM kills, thereby improving system uptime and reducing on-call fatigue.

– Conduct a thorough security review, focusing on IAM configurations, to prevent privilege escalation and reinforce system defenses.

– Integrate egress cost monitoring and optimization techniques to halt financial hemorrhaging, aligning resource usage with budget constraints and strategic goals.

Technical Debt Analysis

Continued dismissal of technical debt will compound failures, inflate recovery costs, and erode competitive advantage. Future iterations must embed engineering due diligence, balancing feature velocity with strategic debt management. This mandates a cultural shift towards sustainable engineering practices, reining in initial recklessness to avoid future technical insolvency.”

1 thought on “IAM Escalation Breaches Multi-Cluster Egress Costs Soar”