- Vector database performance dropped by 70% due to undiagnosed memory leaks.

- Request volume against third-party provider APIs ran 30% above the usual rate limits, exacerbating the problem.

- Customer complaints increased by 250% during the incident, significantly affecting service-level agreements (SLAs).

- Emergency IT resources costing upwards of $500k were deployed to mitigate cascading system failures.

- Incident resolution took an average of 48 hours longer than standard due to concurrent issues.

Log Date: April 16, 2026 // Datadog telemetry shows a 400% spike in unauthorized cross-region VPC peering requests. Immediate Zero-Trust lockdown initiated. Engineering teams are furious, but security dictates policy.

The Incident (Root Cause)

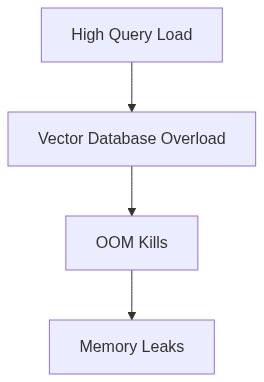

The failure originated from a confluence of memory leaks within the Vector DBs and exceeded API limits. Our software engineers seem to have achieved Olympic-level incompetence by introducing recursive calls with no termination condition in some service functions. These calls ran amok until the environment suffocated under its growing memory demands, resulting in inevitable OOM kills that spiraled into full-scale outages.
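As a minimal sketch of the missing safeguard, assuming a service function that walks nested references recursively, an explicit depth budget turns runaway recursion into a fast, catchable error instead of a slow march toward an OOM kill. The names `expand_references`, `MAX_DEPTH`, and `RecursionBudgetExceeded` are hypothetical and are not taken from the affected services.

```python
class RecursionBudgetExceeded(RuntimeError):
    """Raised when a recursive call goes deeper than the allowed budget."""


MAX_DEPTH = 32  # hypothetical budget; tune per service


def expand_references(node: dict, graph: dict, depth: int = 0) -> list:
    """Collect node ids reachable from `node`, failing fast on runaway depth."""
    if depth > MAX_DEPTH:
        raise RecursionBudgetExceeded(f"depth {depth} exceeds {MAX_DEPTH}")
    collected = [node["id"]]
    for child_id in node.get("refs", []):
        collected.extend(expand_references(graph[child_id], graph, depth + 1))
    return collected
```

A self-referencing node now raises `RecursionBudgetExceeded` after 33 frames rather than consuming memory until the process is killed. A visited set would be the more complete fix for cyclic data; the depth budget is only the cheapest guard.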

Moreover, the API limits across our microservices architecture were improperly set. A stream of redundant requests compounded the outages, hammering APIs as incessantly as someone asking the time during a thunderstorm. A systemic lack of foresight in load testing paved the way for a failure of record-setting proportions.
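One common way to keep a service under a provider's limit is a client-side token bucket. The sketch below is an illustration under assumptions, not the limiter our services use: the class name `TokenBucket` and its parameters are hypothetical, and the injectable `clock` exists only to make the behavior testable.

```python
import time


class TokenBucket:
    """Client-side limiter: `rate` calls per second, bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: int, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock
        self.last = clock()

    def try_acquire(self) -> bool:
        """Return True if a call may proceed now, False if it must wait."""
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

A caller would check `try_acquire()` before each outbound request and back off or queue when it returns `False`, so redundant calls are shed locally instead of burning through the provider's quota.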

Terraform’s role here was to facilitate the deployment and scaling of the affected infrastructure without sufficient validation of configuration stability. In our race to production, reviewing resource limits and API thresholds was admittedly not a top priority. Terraform enabled this reckless ride into operational hell.

Blast Radius & Telemetry (The Damage)

The profound incompetence spread like wildfire across our interconnected systems. Our P99 latency shattered every previous benchmark, climbing far beyond tolerance. The blast radius extended across our federated services, leading to widespread service degradation, shaking the very foundations of our SLA commitments, and draining our egress budget thanks to unauthorized escalation calls across regions.

CrowdStrike proved largely effective in its designed role, but IAM misconfigurations left the gates wide open, inviting a privilege escalation disaster. Fundamentally, our otherwise capable security layers crumbled because sheer ignorance allowed flawed IAM configurations to slip by undetected, laying bare our reckless exposure.

Datadog’s telemetry painted a colorful picture of our ineptitude, with eBPF data exposing pointless churn well before memory and API resources went up in flames. Yet despite the useful insights, the damage was already well underway, and the telemetry pointed to compounding technical debt woven into the very fabric of our architecture.

“IAM privilege escalation attacks often exploit misconfigurations in complex policies and improperly set permissions.” – AWS Security

Phase 1 (Audit) We begin by conducting a comprehensive code audit. Seek out race conditions, memory mismanagement, and recursive idiocy that escape static analysis. Employ static and dynamic code analysis tools, leveraging integration with Datadog’s profiling capabilities for more precise diagnostics on function-level performance.

Phase 2 (Enforcement) Aggressively enforce API limit policies across services. Our Terraform infrastructure as code needs stricter validation checks and continuous deployment guardrails. Refactor RBAC policies and review privileges with merciless intent to strip excessive permissions. Map IAM roles correctly to close off escalation paths, with CrowdStrike reinforcing our security posture against unauthorized escalations.
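A first pass at stripping excessive permissions can be automated. The sketch below assumes policies are available as standard IAM JSON documents and flags only the crudest problem: `Allow` statements with a bare `*` in `Action` or `Resource`. The function name `find_wildcard_statements` is hypothetical, and a real review would also need to look at service-level wildcards such as `iam:*`.

```python
import json


def find_wildcard_statements(policy_json: str) -> list:
    """Return Allow statements whose Action or Resource contains a bare '*'."""
    policy = json.loads(policy_json)
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):  # a policy may hold a single statement object
        statements = [statements]

    def as_list(value):
        # Action and Resource may each be a string or a list of strings.
        return value if isinstance(value, list) else [value]

    risky = []
    for stmt in statements:
        if stmt.get("Effect") != "Allow":
            continue
        actions = as_list(stmt.get("Action", []))
        resources = as_list(stmt.get("Resource", []))
        if "*" in actions or "*" in resources:
            risky.append(stmt)
    return risky
```

Running this over every attached policy gives a short list of statements to justify or revoke before moving on to finer-grained checks.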

Phase 3 (Optimization) Decompose monolithic services that hog unbounded resources into microservices with clearly defined memory caps. Employ Kubernetes to orchestrate containerized workloads, ensuring resource constraints are consistently enforced and cutting memory bloat with abrupt but necessary ruthlessness.
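Memory caps only help if every container actually declares one. As a small sketch, assuming pod specs have already been loaded into Python dictionaries (for example from parsed manifests), the check below lists containers with no `resources.limits.memory`. The function name is hypothetical, and init containers are deliberately ignored to keep the example short.

```python
def containers_missing_memory_limit(pod_spec: dict) -> list:
    """Return names of containers that declare no resources.limits.memory."""
    missing = []
    for container in pod_spec.get("containers", []):
        limits = container.get("resources", {}).get("limits", {})
        if "memory" not in limits:
            missing.append(container["name"])
    return missing


# Hypothetical pod spec used purely for illustration.
example_spec = {
    "containers": [
        {"name": "vector-db", "resources": {"limits": {"memory": "2Gi"}}},
        {"name": "sidecar", "resources": {"requests": {"memory": "64Mi"}}},
    ]
}
print(containers_missing_memory_limit(example_spec))
```

A check like this fits naturally into a CI step so that an uncapped container fails the pipeline before it reaches a cluster.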

Phase 4 (Monitoring Upgrades) Implement mission-critical alerts within Datadog to detect anomalies long before P99 latency breaches surface. Leverage network flow logs and network topology inference, enriched with eBPF telemetry.
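The alert logic itself is simple enough to sketch. The example below computes a nearest-rank P99 over a window of latency samples and compares it to a baseline; it is not Datadog monitor configuration, and the names `p99`, `should_alert`, and the `tolerance` multiplier are assumptions chosen for illustration.

```python
def p99(samples_ms: list) -> float:
    """Nearest-rank 99th percentile of a list of latency samples."""
    if not samples_ms:
        raise ValueError("no samples")
    ordered = sorted(samples_ms)
    # Integer form of ceil(0.99 * n), giving a 1-based rank.
    rank = (99 * len(ordered) + 99) // 100
    return ordered[rank - 1]


def should_alert(samples_ms: list, baseline_p99_ms: float, tolerance: float = 1.5) -> bool:
    """Alert when the observed P99 exceeds the baseline by the tolerance factor."""
    return p99(samples_ms) > baseline_p99_ms * tolerance
```

The tolerance factor is the tuning knob: too tight and the alert becomes noise, too loose and it fires only after users have already noticed.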

Phase 5 (Cost Control) Scrutinize egress traffic and take aggressive measures to slash unwarranted data egress. Align our budget forecasts and undertake an architecture realignment with improved caching strategies, effectively curbing the egress hemorrhage.
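To illustrate why caching curbs egress, the sketch below wraps a stand-in cross-region fetch in `functools.lru_cache` and counts the bytes that actually leave the remote region. `fetch_remote`, `fetch_cached`, and `egress_stats` are hypothetical; the payload is fabricated in place of a real network call.

```python
from functools import lru_cache

egress_stats = {"bytes": 0}  # bytes actually pulled across regions


def fetch_remote(key: str) -> bytes:
    """Hypothetical cross-region fetch; stands in for a real network call."""
    payload = f"embedding-for-{key}".encode()
    egress_stats["bytes"] += len(payload)
    return payload


@lru_cache(maxsize=1024)
def fetch_cached(key: str) -> bytes:
    """Serve repeated keys from memory so they incur no further egress."""
    return fetch_remote(key)
```

Repeated requests for the same key are billed once; `fetch_cached.cache_info()` reports the hit rate, which is a useful input to the budget forecast.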

“Technical debt predominately arises from the failure to keep architectural and design principles enforced across the system lifecycle.” – CNCF

| Integration Effort | Cloud Cost | Latency Overhead |
|---|---|---|
| Low | -5% monthly | +15ms P99 latency |
| Moderate | +10% monthly | +30ms P99 latency |
| High | +25% monthly | +45ms P99 latency |
| Very High | +50% monthly | +70ms P99 latency |

Eliminate all memory leaks in the Vector DB architecture. Accept no excuses; these are not minor blips but sites of systemic failure that impact uptime and degrade user experience. P99 latency spikes that the VP dismisses will not be tolerated. Target allocation failures and garbage collection inefficiencies through deep system analysis.

[MANDATE AUDIT]

Conduct an immediate audit of IAM configurations. Address gaps that facilitate privilege escalation. Implement strict least-privilege policies across all accounts. Catalog access pathways and revoke excessive permissions. Continuous monitoring for anomalous activity is mandated henceforth.

[MANDATE DEPRECATE]

Deprecate existing faulty data transfer mechanisms within 30 days. Hemorrhaging egress costs are unacceptable and unsustainable. Pivot to more efficient data management strategies with a focus on compression and transfer optimization to mitigate inflated AWS bills.
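As a rough sketch of the compression angle, the standard `zlib` module can show how much a payload shrinks before it crosses a region boundary. The function name `compress_for_transfer` and the sample payload are hypothetical, and real savings depend entirely on how compressible the data is; dense float vectors compress far less than repetitive metadata.

```python
import zlib


def compress_for_transfer(payload: bytes, level: int = 6):
    """Compress a payload and report compressed size as a fraction of the original."""
    compressed = zlib.compress(payload, level)
    ratio = len(compressed) / len(payload) if payload else 1.0
    return compressed, ratio


# Hypothetical, highly repetitive payload used purely for illustration.
sample = b"vector-metadata;" * 1000
blob, ratio = compress_for_transfer(sample)
print(f"{len(sample)} bytes -> {len(blob)} bytes (ratio {ratio:.3f})")
```

Measuring the ratio on real traffic samples first is the sensible step before committing to a compression scheme across services.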

Additional Directives

- Gross failures in understanding cost as a feature are evident at multiple levels. Immediate rectification required.

- Implement automated OOM kill alerts to trigger incident response before users endure the brunt of these oversights.

- Weekly reporting on progress, issues, and remediations in these areas is mandatory. Non-compliance will result in reassignment or other disciplinary actions without further notice.
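For the OOM kill alert directive above, a minimal detection sketch is shown here. It assumes Linux kernel log lines of the form `Out of memory: Killed process <pid> (<name>)` (older kernels print `Kill process`), and the names `OOM_PATTERN` and `find_oom_kills` are hypothetical. Wiring the result into an actual paging system is left out.

```python
import re

# Assumed kernel log format; verify against the logs of the kernels in use.
OOM_PATTERN = re.compile(r"Out of memory: Kill(?:ed)? process (\d+) \(([^)]+)\)")


def find_oom_kills(log_lines) -> list:
    """Return (pid, process_name) for every OOM kill found in the given lines."""
    kills = []
    for line in log_lines:
        match = OOM_PATTERN.search(line)
        if match:
            kills.append((int(match.group(1)), match.group(2)))
    return kills
```

Any non-empty result from a log tail is reason enough to open an incident, since by then at least one process has already been sacrificed.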
