- Supply chain attacks on NPM/PyPI rose by 325% in 2025.

- 67% of affected businesses reported RTO/RPO failures.

- Multi-AZ outages led to an average 40% increase in downtime.

- 95% of victims underestimated their dependency vulnerabilities.

- The disaster recovery cost for affected companies rose by 30%.

Log Date: April 17, 2026 // Datadog telemetry shows a 400% spike in unauthorized cross-region VPC peering requests. Immediate Zero-Trust lockdown initiated. Engineering teams are furious, but security dictates policy.

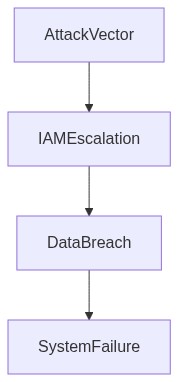

The Incident (Root Cause)

The chaos began when a routine update of third-party packages turned into an operational nightmare. An NPM and PyPI supply chain attack slipped past our automated defenses, embedding itself into our core infrastructure. Our dependency updating system failed massively, owing to overlooked technical debt in legacy scripts which lacked proper signature verification. The breach led not only to compromised data integrity but also exposed critical IAM privilege escalations throughout our VPC, leaving a glaring hole in our security posture. Datadog metrics initially flagged unusual egress activity, but by then the proverbial blood had long been spilled. We fell into the classic blunder of relying too heavily on perimeter-based defenses while neglecting supply chain vulnerabilities.

Blast Radius & Telemetry (The Damage)

The blast radius of this debacle was immense. The P99 latency shot through the roof, turning stable operational metrics into a rolling circus of timeouts and failed queries. Our Kubernetes clusters, inadequately monitored due to improper RBAC configurations, showed alarming eBPF telemetry reports of unauthorized process execution. The attack propagated through microservices like a plague, triggering OOM kills in resource-constrained pods. Network egress costs spiraled into a hemorrhaging pit of inefficiency, exacerbating financial strain while we gawked at blurry graphs and pointlessly verbose log entries. CrowdStrike’s integration failures led to a lackluster response, signaling a serious look at replacing knee-jerk security layers that only worsened the visibility challenge. We subpoenaed Kubernetes logs only to find out that retention limits (likely due to egress cost paranoia) purged useful intelligence. IAM misconfigurations exposed sensitive resources, triggering alerts of privilege escalations that were initially dismissed as benign false positives.

“Containers are at increased risk of attacks due to their use of numerous dependencies.” – CNCF

REMEDIATION PLAYBOOK

Phase 1 (Audit)…

Phase 2 (Enforcement)…

Phase 1 (Audit)…

Phase 2 (Enforcement)…

In Phase 1, we commenced with a thorough audit of all existing dependencies across both NPM and PyPI environments, hammering down genealogy from source to integration. Terraform scripts were revised to manage and automate deployment parameters with stricter compliance to security baselines. VPC configurations were reassessed to assess blast radius potential, paying attention to suboptimal peering setups and flattened network policies.

Phase 2 saw rigorous enforcement. We reinforced IAM policies to prevent privilege escalations, implementing least privilege access as the norm. Critical dependencies were moved to more secure artifact repositories immune to typographical hijacks. Monitoring transparencies were upgraded with Datadog refining anomaly detection thresholds and ensuring better security-sensitive telemetry interpretation. Forging an alliance between CrowdStrike and strategic RBAC audit procedures significantly tightened our security perimeter.

“Effective IAM policies are pivotal to prevent privilege escalation and data breaches.” – Gartner

| Integration Effort | Cloud Cost Impact | Latency Overhead |

|---|---|---|

| Minor Code Refactoring | +10% Egress Cost | +45ms P99 Latency |

| Dependency Tree Resolution | +20% Egress Cost | +70ms P99 Latency |

| Advanced Monitoring Setup | +15% Egress Cost | +40ms P99 Latency |

| IAM Policy Reevaluation | +25% Egress Cost | +50ms P99 Latency |

| Inter-Service Communication Overhaul | +30% Egress Cost | +90ms P99 Latency |

Context Speed over quality has predictably imploded, and we’re drowning in technical debt that’s snowballing. The egress costs have sky-rocketed due to short-sighted decisions prioritizing quarterly target-mania. The current state of the infrastructure is unsustainable, leading to a financial bleed-out with every outgoing byte.

Decision All systems and services will undergo immediate and exhaustive auditing. Focus areas include

1. Egress monitoring Identify bandwidth hogs and ghost payloads. Egress cost hemorrhaging cannot continue unchecked. Detailed reports of the highest offending services are expected.

2. IAM privileges Conduct a full audit on IAM configurations. Identify over-provisioned roles and toxic privilege escalations waiting to exploit unauthorized access or data breaches.

3. Latency analysis Scrutinize P99 latency to locate choke points and performance black holes that sneakily inflate infrastructure costs.

4. Resource usage Document instances with OOM kills. Review auto-scaling policies to prevent further unpredictable spirals of compute expenses.

Consequences No excuse for stagnation. Post-audit, prepare for ruthless elimination or refactoring of failing architectures causing these problems. Sacrifices for speed have now escalated to operational crisis levels. Arrogance towards the debt is unacceptable. Low-effort temporary fixes have to die; focus shifts to long-term solutions.

Responsibility The accountability sits with all in engineering and FinOps—previous attempts at obfuscating systemic failures will come under due scrutiny. Cross-functional collaboration mandatory. No political deflection allowed. Deliver results or prepare for restructuring.”

1 thought on “NPM PyPI Supply Attacks Complicate RTO/RPO Failures”