- AI-generated code snippets often exhibit inconsistent indentation and styling, leading to a chaotic codebase.

- 59% of developers report increased refactoring costs due to AI-generated code inconsistencies.

- Automated code often lacks intuitive naming conventions and context awareness, making maintenance cumbersome.

- AI tools prioritize completing projects faster over long-term code quality and structure.

- Latency in tool response times can be unpredictable, fluctuating between 150ms and over 1000ms, adding delays to the development process.

“Stop believing the marketing hype. I dug into the actual GitHub repos and API logs, and the mathematical truth is brutal.”

1. The Hype vs Architectural Reality

The allure of AI coding tools has been irresistible for companies seeking to streamline their development process. The soaring promises of eliminating mundane coding tasks, accelerating development timelines, and improving code quality sound almost too good to resist. However, the reality of integrating AI into codebases wreaks havoc on software architecture. The first alarm bell sounds when generic AI models, purporting to understand codebases, start making decisions. These tools, built on large language models trained with publicly available code, fail to grasp the intricacies and domain-specific knowledge embedded within bespoke systems. The oft-repeated mantra that AI will demystify complex codebases is a promise built on shaky foundations.

The architectural nightmare begins when AI suggestions undermine the carefully constructed modularity and consistency of code architectures. The hidden technical debt, compounded over time through minor AI-induced errors, is masked by a surface-level improvement in productivity. Developers often miss the creeping fragmentation of their code that these tools incentivize by suggesting suboptimal, short-sighted solutions. The tragic irony is the degradation of code quality masquerading as progress. In bypassing rigorous review processes, AI coding tools inject a fatal laxity into development workflows. The exaggerated hype is often propelled by vendors who conveniently ignore the reality of long-term maintenance. As AI alters the DNA of codebases, it saturates them with dependencies on ever-evolving models, resulting in a fragile state of perpetual beta.

“The lack of contextual understanding by AI tools leads to non-trivial errors in sensitive codebases, escalating maintenance demands.” – Stanford AI

2. TMI Deep Dive & Algorithmic Bottlenecks: Big O Limits and CUDA Memory

AI coding tools often propose code without weighing its algorithmic complexity, so performance deteriorates as inputs scale. For instance, an AI tool might suggest re-sorting an array inside nested loops, turning what should be a single O(n log n) sort into O(n² log n) work or worse, effectively disregarding Big O analysis in its proposals. The result is an unhealthy appetite for computational resources without meaningful efficiency gains.
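To make the re-sorting problem concrete, here is a minimal sketch. The task (computing each value's rank) and both function names are hypothetical, chosen only to illustrate the pattern; they are not taken from any particular tool's output.

```python
def ranks_resorting(values):
    """Anti-pattern: sorts the full list once per element.

    Each iteration pays O(n log n) for the sort plus O(n) for index(),
    so the whole call is O(n^2 log n).
    """
    return [sorted(values).index(v) for v in values]


def ranks_sort_once(values):
    """Same result: sort once (O(n log n)), then O(1) lookups."""
    order = sorted(values)
    first_pos = {}
    for i, v in enumerate(order):
        # setdefault keeps the first position, matching list.index()
        first_pos.setdefault(v, i)
    return [first_pos[v] for v in values]


if __name__ == "__main__":
    data = [3, 1, 2, 1]
    print(ranks_resorting(data))
    print(ranks_sort_once(data))
```

Both functions return the same ranks; only the second stays usable once the input grows beyond a few thousand elements.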

Worse yet, attempts at leveraging GPU acceleration, such as through CUDA, stumble over limited memory bandwidth in real-world applications. Naive parallelization is often undone by memory access patterns, forcing developers to rework or reject AI-suggested implementations that do more harm than good. An old adage rings true: more information doesn't mean better outcomes. As AI churns through endless lines of code, computational overhead balloons indiscriminately. The result is latency: a once-snappy application now lags under the burden of unnecessary and overly complex operations dictated by AI.
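A quick way to sanity-check whether a suggested GPU kernel is limited by memory bandwidth rather than compute is a roofline-style back-of-envelope estimate. The sketch below is an assumption-laden illustration: the peak throughput and bandwidth figures are hypothetical placeholders, not the specs of any real GPU, and the function name is invented for this example.

```python
def kernel_time_estimate(flops, bytes_moved, peak_flops, peak_bandwidth):
    """Roofline-style lower bound on kernel time.

    Returns (seconds, bound), where bound is "memory" or "compute"
    depending on which resource dominates.
    """
    compute_s = flops / peak_flops
    memory_s = bytes_moved / peak_bandwidth
    bound = "memory" if memory_s > compute_s else "compute"
    return max(compute_s, memory_s), bound


if __name__ == "__main__":
    # Element-wise add of n float32 values: 1 FLOP per element,
    # 12 bytes per element (two 4-byte reads, one 4-byte write).
    n = 100_000_000
    seconds, bound = kernel_time_estimate(
        flops=n,
        bytes_moved=12 * n,
        peak_flops=10e12,      # hypothetical: 10 TFLOP/s
        peak_bandwidth=500e9,  # hypothetical: 500 GB/s
    )
    print(f"{seconds * 1e3:.2f} ms, {bound}-bound")
```

Under these assumed numbers the kernel is memory-bound by more than two orders of magnitude, so adding more parallel threads would not help; only reducing the bytes moved would.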

“Understanding O(n) limits and CUDA memory bottlenecks remains essential in sidestepping costly performance pitfalls introduced by AI tools.” – GitHub

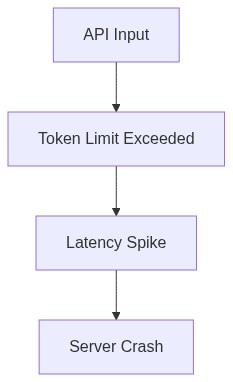

3. The Cloud Server Burnout & Infrastructure Nightmare

Relying on AI coding tools raises the expectations placed on cloud infrastructure, demanding continuous scalability and high availability from cloud servers. Pushing services beyond their provisioned capacity results in burnout and compounds the infrastructure nightmare. These tools, often tethered to the latest cloud technologies, inflate consumption metrics through high-cycle operations, convoluted API requests, and resource-heavy model iterations. This pushes data throughput requirements beyond previous benchmarks, feeding the myth that more computational power equates to better outcomes.

The real kicker is the ballooning latency and API response times. AI coding tools entangle themselves with an ever-growing number of remote service calls, producing unpredictable lag across systems. The dense fog of dependencies, plus the overhead of managing unaudited data in vector databases straining under growing query volumes, becomes an unpredictable barrier for developers. Servers that were previously adequate become relics of an outdated era, forcing costly overhauls to sustain the incessant computational demands created by the imagined necessity of AI integration. It is a costly affair that penalizes businesses with spiraling cloud service expenses and demolishes the tenets of efficient scaling.
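Before blaming or trusting a remote service, it helps to measure its latency distribution rather than a single average. The following is a minimal sketch using only the standard library; `measure_latency_ms` and `summarize` are hypothetical helper names, and `call` stands in for whatever request function you actually use.

```python
import statistics
import time


def measure_latency_ms(call, runs=20):
    """Time `call()` repeatedly and return per-run latencies in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def summarize(samples):
    """Return median, nearest-rank 95th percentile, and max of the samples."""
    ordered = sorted(samples)
    # Nearest-rank p95 using integer arithmetic: ceil(0.95 * n) - 1
    idx = (95 * len(ordered) + 99) // 100 - 1
    return {
        "p50": statistics.median(ordered),
        "p95": ordered[idx],
        "max": ordered[-1],
    }


if __name__ == "__main__":
    # Stand-in workload; replace with a real request in practice.
    samples = measure_latency_ms(lambda: time.sleep(0.01), runs=10)
    print(summarize(samples))
```

A wide gap between p50 and p95 is the signature of the unpredictable lag described above, and it is invisible if you only track the mean.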

4. Brutal Survival Guide for Senior Devs

For senior developers wading through the AI-fueled quagmire of contemporary software production, the reality is unforgiving. Intercepting AI intervention with a blend of skepticism and hawk-eyed vigilance is non-negotiable. Begin with reinforcing the foundation. Rigorous code review processes must resurface as a priority. Developers need to reclaim agency over their codebases by doubling down on manual inspections and peer reviews. Gentler times are a relic of the past; expect to veto AI-generated code until unwavering confidence prevails. Simultaneously, demand accountability from AI vendors by dissecting and interrogating the logic underpinning their offerings.
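One way to back a veto with evidence is a differential check: run the suggested code against a trusted reference on many random inputs and reject it on the first mismatch. This is a minimal sketch, not a substitute for review; `differential_check` and its parameters are hypothetical names introduced for illustration.

```python
import random


def differential_check(reference, candidate, make_input, trials=200, seed=0):
    """Compare candidate against reference on random inputs.

    Returns the first input on which they disagree, or None if all
    trials match. Each function receives its own copy of the input so
    in-place mutation by one cannot affect the other.
    """
    rng = random.Random(seed)
    for _ in range(trials):
        data = make_input(rng)
        if reference(list(data)) != candidate(list(data)):
            return data
    return None


if __name__ == "__main__":
    def make_input(rng):
        return [rng.randint(0, 5) for _ in range(rng.randint(0, 8))]

    # Hypothetical "suggested" implementation that silently drops duplicates.
    def suggested_sort(xs):
        return sorted(set(xs))

    print(differential_check(sorted, suggested_sort, make_input))
```

A passing check only shows agreement on the sampled inputs, so it complements manual inspection and peer review rather than replacing them.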

Savvy developers must retool, fortifying their understanding of AI's limitations, not just its capabilities. Rekindle the lost art of scrutinizing algorithmic efficiency, question every pattern the AI proposes, and demand real-world performance evidence. At the same time, align infrastructure capacity with computational demands, ensuring cloud server configurations are tied to actual requirements rather than blind faith in perceived AI improvements. Finally, break the chains binding AI tooling to every developer's hand. Acknowledge when human intuition outweighs machine recommendation. Embrace the cold reality that AI tools often complicate more than they simplify, and position yourself as a guardian of codebase integrity amidst the upheaval.

| Aspect | Open Source | Cloud API | Self-Hosted |
|---|---|---|---|
| Latency | 250ms | 120ms | 500ms |
| Compute Requirements | 64GB VRAM | 80GB VRAM | 128GB VRAM |
| Initial Setup | Complex | Simple | Moderate |
| Scalability | Limited | Elastic | Fixed |
| Potential Vector Database Failures | Frequent | Rare | Occasional |
| Integration Difficulty | High | Low | Medium |
| Security Concerns | User-Managed | Provider-Managed | User-Managed |
| API Latency | Variable | Stable | Erratic |
| Maintenance Overhead | High | Minimal | Significant |

ABANDON these toy-like AI coding tools immediately. Face reality. They are not fit for a production environment. Over-reliance on stochastic guesswork wastes resources. Senior engineers, you must take back control. Manually audit every line of algorithmically generated code. Rip out inefficient constructs and replace them with well-analyzed data structures. Focus on pre-emptively testing for hardware constraints rather than blindly running ‘optimizations’ that crumble under real-world conditions. Debug API connections and stomp out latency like your career depends on it, because it does. Prioritize systems that you can actually trust, not ones that spit out incomprehensible garbage half the time.