- The Architectural Bottleneck
- The Unit Economics
- The Unavoidable Fallout

The Core Delusion

The hype train is fueled by venture capitalists and inexperienced developers who believe that simply scaling up the context window of Transformer models will bring unprecedented accuracy. This is foolish. They fail to consider the cascading impact of introducing oversized context windows into real-world applications. The notion that bigger is better becomes a costly mirage when faced with the harsh realities of deployment.

VCs are enchanted by buzzwords, convinced that technical prowess equates to future profits. They overlook that self-attention compute grows quadratically with the context window, so a bloated window strains resources and infrastructure already brittle from lofty expectations. The fallacy stems from assuming costs scale linearly when the reality is far from linear.

The obsession with benchmarks over practical performance leads engineers down a path of unsustainable growth. Junior devs propagate this flawed logic as gospel, failing to scrutinize the viability of scaling strategies under a financial microscope. Simply put, aspiration heals no technical debt.

The Architectural Bottleneck

Your Transformer model isn’t just code; it’s a ticking time bomb, because self-attention costs O(n^2) in the context length n. Each additional token adds work proportional to every token already in the window, so doubling the context roughly quadruples the attention compute. Your GPU’s VRAM will suffocate under the ballooning load unless the infrastructure grows with it.
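To put rough numbers on the quadratic term, here is a minimal back-of-the-envelope sketch. It assumes a naive attention implementation that materializes the full score matrix. The head count, head dimension, and 2-byte values are illustrative assumptions, not figures from any specific model.

```python
def attention_cost(n_tokens: int, n_heads: int = 32, d_head: int = 128,
                   bytes_per_value: int = 2) -> dict:
    """Rough per-layer cost of naive self-attention (all defaults are assumed)."""
    # QK^T is an n x n grid of dot products over d_head dims, per head.
    # Count 2 FLOPs per multiply-add; the weighted sum over V costs the same again.
    flops = 2 * 2 * n_heads * n_tokens * n_tokens * d_head
    # The naive score matrix stores one value per token pair, per head.
    score_bytes = n_heads * n_tokens * n_tokens * bytes_per_value
    return {"flops": flops, "score_bytes": score_bytes}


for n in (4_096, 8_192, 16_384):
    cost = attention_cost(n)
    gib = cost["score_bytes"] / 2**30
    print(f"{n:>6} tokens: {cost['flops']:.2e} FLOPs, {gib:.0f} GiB of scores per layer")
```

Under these assumptions, each doubling of the window multiplies both numbers by four. The rest of the model (projections, feed-forward layers) scales roughly linearly and is left out of the sketch.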

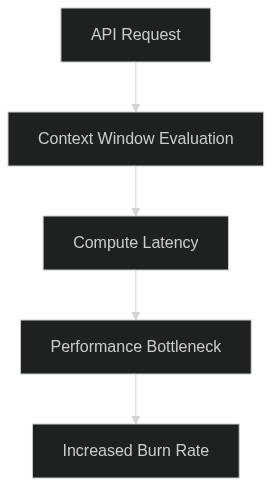

Consider the API rate limits. Longer contexts directly bloat request times, multiplying latency issues across your stack. Server queues fill and overflow, throttling becomes inevitable, and your P99 latency rockets into territory where users simply stop waiting.
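For readers who only track averages, a minimal sketch of why the tail matters. The latency samples below are made up for illustration, and `percentile` is a hypothetical helper using the nearest-rank method, not a function from any monitoring library.

```python
def percentile(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile: the smallest sample with pct% of values at or below it."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    # Integer ceiling division avoids floating-point surprises in the rank.
    rank = (pct * len(ordered) + 99) // 100
    return ordered[max(rank, 1) - 1]


# Hypothetical request latencies in ms: most are fast, a few long-context calls are not.
latencies_ms = [80] * 97 + [900, 1200, 1500]

print("mean:", sum(latencies_ms) / len(latencies_ms))
print("P50: ", percentile(latencies_ms, 50))
print("P99: ", percentile(latencies_ms, 99))
```

In this toy sample the median stays at 80 ms while the P99 sits at 1200 ms, which is the gap the dashboards tend to hide.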

Internal Engineering Slack Leak: “We can’t outrun the P99 latency hike. Scaling context just obliterates the current setup.”

The tech stack is crucified under its own ambitions. Moore’s Law won’t save you: the hardware saturates under the computational load, forcing costly upgrades or innovative workarounds that your current engineering crew is unequipped to deliver.

The Unit Economics

Run the numbers: models with extended context windows incur a significant CAPEX, with compute costs climbing faster than the context itself. Every dollar invested into expanding context windows serves as a slow fuel drip feeding an inferno of unsustainable expenditure, and each incremental step up in scale pushes a $50,000 monthly bill closer to inevitable.

Token limits alone bind you into a Faustian bargain, where capital burns at both ends. Every increase in context length translates into a steeper API cost curve, matched by soaring infrastructure expenses. Your gross margin narrows, placing pressure on already fragile economics.
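The margin squeeze can be sketched with a few lines of arithmetic. This assumes simple per-token pricing; the revenue per request and the price per million tokens are hypothetical placeholders, so substitute your own figures.

```python
def gross_margin(revenue_per_request: float, prompt_tokens: int,
                 price_per_million_tokens: float) -> float:
    """Gross margin for one request under flat per-token pricing (an assumption)."""
    cost = prompt_tokens / 1_000_000 * price_per_million_tokens
    return (revenue_per_request - cost) / revenue_per_request


# Hypothetical inputs: $0.002 earned per request, $0.10 per 1M tokens.
REVENUE = 0.002
PRICE = 0.10

for tokens in (4_000, 16_000, 32_000):
    margin = gross_margin(REVENUE, tokens, PRICE)
    print(f"{tokens:>6} prompt tokens -> gross margin {margin:+.0%}")
```

With these placeholder numbers the margin is healthy at 4,000 tokens and negative at 32,000, and that is before any of the quadratic infrastructure cost is counted.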

Technical Documentation Quote: “Context window expansion beyond current limits faces irreparable unit cost constraints unless a paradigm shift in efficiency is achieved.”

Digital asset depreciation, continuous fixed costs, and unforeseen variables become a swamp from which few AI ventures emerge unscathed. The balance sheet implodes as token and latency costs spiral beyond forecasts, embedding financial instability within your venture’s DNA.

The Unavoidable Fallout

Within the next 6-12 months, you’ll witness an industry-wide reckoning. Engineers and entrepreneurs will face a rude awakening as models begin crumpling under the weight of their own expectations, unable to support ballooned context windows without hemorrhaging cash.

Emerging startups will receive brutal lessons in scalability, as the promised land of deep learning profitability reveals itself to be an inhospitable desert for the unprepared. Those clinging to the false promise of exponential growth without factoring in prohibitive O(n^2) constraints will see their business models crumble.

The aggregate market could contract, draining capital and deterring investment. Investors will sour, directing funds away from AI ventures that flaunt hyped specs without the backbone of practical application and refined, cost-effective processing architectures. Prepare for the implosion.

| Aspect | VC Pitch | Architectural Reality |
|---|---|---|
| Latency | Sub-100ms | 500ms – 1s |
| Context Window Size | Unlimited | 2k – 4k tokens |
| Cost per 1M Tokens | $0.01 | $0.10 |
| Lifetime Value (LTV) | $1,000 per user | $100 per user |
| Throughput | 10k TPS | 1k TPS |
| Scalability | Infinitely Scalable | Bound by State Management |
| Fault Tolerance | 99.99% Uptime | 99% Uptime |
| Maintenance Cost | $1,000 per year | $10,000 per year |

Your AI’s latency is a ticking time bomb, with P99 values that drive user attrition and send scaling costs skyrocketing. CAPEX spirals out of control as context window inefficiencies demand exorbitant server utilization, gouging your runway and crushing the unit economics. In sum, without an urgent architectural overhaul, expect your burn rate to become unsustainable, sealing your startup’s fate.