- Fully offline operation of AI models removes network round-trips, with latency claimed to drop below 10 ms.

- Local LLMs can run on consumer-grade hardware with 32 GB of RAM and a recent 8-core CPU.

- Eliminates reliance on cloud services, enhancing privacy and user autonomy.

- Wide range of applications: from personal assistants to offline translation.

- Customizable: users can adapt models to specific needs without restrictions.

“Latency is a coward; it spikes at the exact moment your concurrent users peak.”

1. The Hype vs Architectural Reality

Offline AI models supposedly usher in an era free from the constraints and surveillance of online services. Marketing departments toss around grandiose claims of freedom and flexibility, eager to exploit the term “uncensored.” Beneath this gloss lie the hard architectural constraints these models face. Most of these pitches ignore the raw compute and the significant memory requirements needed to reach performance parity with online counterparts. The easy-to-deploy narrative oversimplifies the web of hardware and software integration required to support models that were once confined to cloud-scale data centers. Although they supposedly operate independently of their cloud-moderated twins, offline models remain bound by the inescapable and often crippling limitations of consumer-grade hardware. The result is a parade of latency issues and performance degradation, driven in large part by suboptimal caching and memory access patterns. Enthusiasts tout customizable datasets as an advantage, yet chasing these customizations often sends models spiraling out of control, producing bizarre, poorly grounded outputs.
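To put rough numbers on those memory requirements, here is a back-of-the-envelope estimator. It assumes a decoder-only transformer whose memory is dominated by its weights plus a key/value (KV) cache that grows linearly with context length. The configuration (7 billion parameters, 32 layers, 32 KV heads, head dimension 128, a 4096-token context) is a hypothetical example, not a measurement of any specific product, and activations and framework overhead are ignored.

```python
from dataclasses import dataclass

GIB = 1024 ** 3

@dataclass
class ModelConfig:
    # Hypothetical configuration values, chosen for illustration only.
    n_params: float   # total parameter count
    n_layers: int     # number of transformer blocks
    n_kv_heads: int   # heads that store keys/values in the cache
    head_dim: int     # dimension per head

def weight_bytes(cfg: ModelConfig, bytes_per_param: float) -> float:
    """Memory for the weights alone: 2 bytes/param for fp16, 1 for int8, 0.5 for 4-bit."""
    return cfg.n_params * bytes_per_param

def kv_cache_bytes(cfg: ModelConfig, context_len: int, bytes_per_value: int = 2) -> int:
    """Keys and values (factor 2) for every layer, KV head, head dimension and token."""
    return 2 * cfg.n_layers * cfg.n_kv_heads * cfg.head_dim * context_len * bytes_per_value

cfg = ModelConfig(n_params=7e9, n_layers=32, n_kv_heads=32, head_dim=128)
for label, bpp in [("fp16", 2.0), ("int8", 1.0), ("4-bit", 0.5)]:
    total = weight_bytes(cfg, bpp) + kv_cache_bytes(cfg, context_len=4096)
    print(f"{label:>5}: {total / GIB:.1f} GiB")
```

Under these assumptions the fp16 case comes to roughly 15 GiB (about 13 GiB of weights plus 2 GiB of cache). That fits in 32 GB of system RAM but leaves little headroom on a typical consumer GPU once longer contexts and activations are added; 4-bit quantization brings it to roughly 5 GiB, usually at some cost in output quality.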

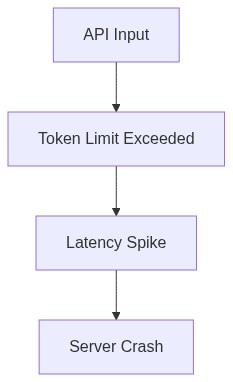

The lack of moderation is sold as open access, but in practice it yields models that are further out of sync with reality. Whether these hefty models run on Tensor Processing Units (TPUs) or Graphics Processing Units (GPUs), the challenges are glaringly evident. Self-attention in transformer models scales quadratically with sequence length (O(n^2)), which sits poorly with the limited memory of crowded, underfunded consumer graphics cards. In trying to replicate the vaunted data-center-grade performance of Silicon Valley, home users instead encounter thermal throttling, timeouts and, in the worst case, outright crashes. The promise of total control is undermined by immature firmware and broken drivers. Slapping “AI” onto a product without considering these under-the-hood complexities is a marketing tactic more than a technical solution. Whether dedicated AI chips are the promised panacea becomes irrelevant in the face of hard limits on capital and scalability. The cost of training these systems offline outstrips the so-called flexibility, and the discussion circles back to censorship, the very advantage being shouted from the rooftops.
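The quadratic cost is easiest to see in the attention score matrix: naive self-attention materializes an n × n matrix per head, so doubling the sequence length quadruples that buffer. The sketch below assumes naive (non-fused) attention over a single sequence, 32 heads and 2-byte (fp16) scores; these are illustrative values. Fused kernels such as FlashAttention avoid storing the full matrix, although the compute still grows quadratically.

```python
MIB = 1024 ** 2

def attention_scores_bytes(seq_len: int, n_heads: int = 32, bytes_per_value: int = 2) -> int:
    """Size of the n x n attention score matrices for one layer of naive attention."""
    return n_heads * seq_len * seq_len * bytes_per_value

for n in (1024, 2048, 4096, 8192):
    # Each doubling of n multiplies the buffer by four.
    print(f"n={n:>5}: {attention_scores_bytes(n) / MIB:,.0f} MiB per layer")
```

Under these assumptions the buffer grows from 64 MiB per layer at 1024 tokens to 4,096 MiB at 8192 tokens, which is why long contexts run into consumer VRAM limits so quickly.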

2. TMI Deep Dive & Algorithmic Bottlenecks

An in-depth analysis of offline AI models uncovers more than surface-level predictions. The most significant algorithmic bottlenecks come from time-complexity constraints: the gap between linear O(n) and exponential O(2^n) growth, and everything in between, produces drastic divergences in system efficiency. Given the breadth of their data-processing demands, offline models hit computational bottlenecks more often than not. Anyone who has written CUDA code knows that GPU memory limits are not a bump in the road but often a wall, one that can only be scaled by spending heavily on overpriced, poorly cooled hardware. Memory leaks loom as ever-present dark clouds, stalling systems and trapping them in an endless loop of resource starvation and runtime failures. In models that depend on vectorized data, local performance discrepancies act like a cancer on productive programming. Vector databases, central to many offline deployments, become fragile under unpredictable failures triggered by miscalculated data volumes or overflow errors.
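Miscalculating data volume is easy with vector stores, because the raw size is simply vectors × dimensions × bytes per component and grows faster than intuition suggests. This sketch assumes uncompressed float32 embeddings held entirely in RAM, ignores index metadata, and reserves 20% headroom; the 768-dimension embeddings, 10 million vectors and 32 GB budget are hypothetical figures chosen for illustration.

```python
GB = 10 ** 9

def flat_index_bytes(n_vectors: int, dim: int, bytes_per_component: int = 4) -> int:
    """Raw storage for n_vectors embeddings of size dim (float32 by default)."""
    return n_vectors * dim * bytes_per_component

def fits_in_memory(n_vectors: int, dim: int, budget_bytes: int, headroom: float = 0.8) -> bool:
    """True if the raw index fits within a fraction (headroom) of the memory budget."""
    return flat_index_bytes(n_vectors, dim) <= headroom * budget_bytes

size = flat_index_bytes(10_000_000, 768)
print(f"{size / GB:.2f} GB")                    # raw float32 storage
print(fits_in_memory(10_000_000, 768, 32 * GB))  # checked against a 32 GB machine
```

Python integers do not overflow, but the same product computed in a signed 32-bit integer would: 30,720,000,000 bytes is far above 2^31 - 1 (2,147,483,647), which is exactly the kind of sizing bug that surfaces only at scale.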

Deeper in the stack, caches begin to fail, paging data back and forth without meeting demand. Page faults, long stalls and heavy swapping bottleneck the entire execution, reducing powerhouses to mere spectres of their potential selves. Low-latency requirements become the major hurdles in this marathon of computational frustration. Without consistent API connectivity, we navigate an unruly maze of data points fraught with inefficiency. The problem worsens as machine owners laboriously transfer immense datasets to local servers over limited bandwidth. Loss functions tell tales of futile optimization, with extra iterations that merely duplicate earlier calculations ad nauseam. Codebases groan under their own weight, defining a reality sharply different from the advertising puffery. The complex structures of neural computation are boxed in by finite hardware, unable to harness the full adaptive capacity of machine learning. No amount of tweaking backpropagation or stemming can fix the underlying failure to account for parallelism limits, which strains users’ digital resources at every turn.
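Cache thrashing is easy to reproduce in miniature. The sketch below uses a least-recently-used (LRU) cache as a simplified stand-in for a page cache (real kernels use more elaborate replacement policies) and replays a cyclic access pattern. When the working set fits, nearly every access hits; when it exceeds capacity by a single entry, LRU evicts each page just before it is needed again and the hit rate collapses to zero.

```python
from collections import OrderedDict

def lru_hit_rate(accesses, capacity: int) -> float:
    """Replay an access sequence through an LRU cache and return the fraction of hits."""
    cache: OrderedDict = OrderedDict()
    hits = 0
    for key in accesses:
        if key in cache:
            hits += 1
            cache.move_to_end(key)         # mark as most recently used
        else:
            cache[key] = True
            if len(cache) > capacity:
                cache.popitem(last=False)  # evict the least recently used entry
    return hits / len(accesses)

capacity = 4
fits = [i % 4 for i in range(1000)]    # cyclic working set of 4 pages
spills = [i % 5 for i in range(1000)]  # cyclic working set of 5 pages
print(lru_hit_rate(fits, capacity))
print(lru_hit_rate(spills, capacity))
```

The first run misses only on the four cold starts; the second misses on every access, even though the working set is just one page larger than the cache.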

3. The Cloud Server Burnout & Infrastructure Nightmare

In a world where offline AI models are heralded as a silver bullet, cloud computing logistics face their own version of burnout. Let’s be under no illusion: the idea of existing entirely independently of server support is wishful thinking. Most deployments, online or offline, involve some degree of server interaction, all the more so when scaling models to handle real-world data efficiently. Once models step off the server carousel and attempt unaided magic, so to speak, developers are often slowed by unbearable latency and plagued by an infrastructure nightmare sprawling unchecked behind the scenes. The picture is marred by server downtime, crumbling backend compatibility and runaway network latency, producing interruptions akin to hitting a brick wall. The dream of running powerful AI without continuous cloud dependence becomes little more than a billboard of empty promises.
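In practice, living with server downtime usually means a fallback path: try the remote endpoint a bounded number of times with exponential backoff, then degrade to a local model. The sketch below assumes two hypothetical callables, `remote_infer` and `local_infer`, standing in for whatever client and local runtime a project actually uses; no specific API is implied, and the retry count and delays are illustrative defaults.

```python
import random
import time
from typing import Callable

def infer_with_fallback(
    prompt: str,
    remote_infer: Callable[[str], str],  # hypothetical cloud client call
    local_infer: Callable[[str], str],   # hypothetical local model call
    max_retries: int = 3,
    base_delay: float = 0.1,
) -> str:
    """Try the remote endpoint with exponential backoff, then fall back to local inference."""
    for attempt in range(max_retries):
        try:
            return remote_infer(prompt)
        except (ConnectionError, TimeoutError):
            if attempt < max_retries - 1:
                # Wait base_delay * 2^attempt seconds plus random jitter before retrying.
                time.sleep(base_delay * 2 ** attempt + random.uniform(0, base_delay))
    # Every remote attempt failed: degrade to the local model.
    return local_infer(prompt)

def unreachable_remote(prompt: str) -> str:
    raise ConnectionError("endpoint unreachable")  # simulates server downtime

print(infer_with_fallback("hello", unreachable_remote, lambda p: f"local:{p}", base_delay=0.01))
```

The jitter spreads retries out so that many clients recovering from the same outage do not hit the server in lockstep.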

“The reality of AI model deployment resides less in independence and more in maintaining an intricate balance of online/offline synergy.” – Stanford AI Lab

With multiple layers of abstraction in the AI deployment pipeline, data redundancy and misallocation become all but impossible to avoid. We deal daily with repetitive data requests that tax already underpowered systems. Storage limits emerge while sync speeds dwindle, making offline operation more nightmarish than ever. Developer teams, senior ones especially, are forced to fight uphill battles against configuration mismatches between local machines and server parameters. The lack of enterprise-scale infrastructure raises further concerns about cybersecurity threats and broken encryption. End users unschooled in infrastructure challenges compound the systemic problems by clinging to unrealistic delivery timelines. The ideal seems attainable only in theory, trapping developers (now acting as carpenters) in a Sisyphean loop.
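Configuration mismatches between local machines and servers are cheaper to catch mechanically than by inspection. A minimal sketch, assuming both configurations can be loaded into flat dictionaries; the keys and values shown (`cuda_version`, `max_seq_len`, `dtype`, `batch_size`) are hypothetical examples.

```python
from typing import Any, Dict, Tuple

MISSING = "<missing>"  # placeholder shown when a key exists on only one side

def config_drift(local: Dict[str, Any], server: Dict[str, Any]) -> Dict[str, Tuple[Any, Any]]:
    """Return {key: (local_value, server_value)} for every key whose values differ."""
    drift = {}
    for key in sorted(local.keys() | server.keys()):
        a, b = local.get(key, MISSING), server.get(key, MISSING)
        if a != b:
            drift[key] = (a, b)
    return drift

local = {"cuda_version": "11.7", "max_seq_len": 4096, "dtype": "fp16"}
server = {"cuda_version": "12.1", "max_seq_len": 4096, "batch_size": 8}
print(config_drift(local, server))
```

Running a check like this in CI, or at service start-up, turns a silent mismatch into an explicit failure.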

“Every offline solution, in part, still leans critically on sprawling server architectures.” – GitHub Documentation

Ultimately, developers watch helplessly as their architectures languish under ‘ideal’ models spun up in laboratory conditions. Yet these same models falter when confronted with real-world conditions, exposing glaring faults and revealing the infrastructure façade meant to buttress offline AI aspirations. Laissez-faire attitudes won’t cut through this blight. Developers dream of a long-gone golden age when system efficiency and autonomous power reigned; reality, however, cruelly checks even the most rigorously tested theories once they meet such existential challenges.

4. Brutal Survival Guide for Senior Devs

For developers entrenched in the turmoil of offline AI models, survival hinges on a grasp of reality rather than utopian dreams. Resilience is neither optional nor particularly rewarding, and it requires engineers to understand deeply the technical flaws that can incapacitate a system. For seasoned professionals, strategies built on minimal frameworks help mitigate the otherwise inevitable fallout from offline model failures. Tools that diagnose algorithmic complexity should rank among the top priorities, along with reworking architectures around less volatile components where feasible. Demand a thorough examination of each layer, and reverse missteps promptly through disciplined optimization. A sound architecture keeps, at its heart, responsive code that abhors inflexibility.
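Diagnosing algorithmic complexity does not require heavy tooling: count basic operations at two input sizes and read off the growth exponent. If doubling n multiplies the count by 2^k, the routine behaves like O(n^k). The sketch compares two duplicate checks on worst-case input (no duplicates). Counting operations rather than wall-clock time keeps the result deterministic, at the cost of ignoring cache and memory effects.

```python
import math
from typing import Callable, List, Tuple

def has_duplicate_pairwise(items: List[int]) -> Tuple[bool, int]:
    """Compare every pair: O(n^2). Returns (found, comparisons)."""
    ops = 0
    for i in range(len(items)):
        for j in range(i + 1, len(items)):
            ops += 1
            if items[i] == items[j]:
                return True, ops
    return False, ops

def has_duplicate_set(items: List[int]) -> Tuple[bool, int]:
    """Single pass with a hash set: O(n) expected. Returns (found, lookups)."""
    seen, ops = set(), 0
    for x in items:
        ops += 1
        if x in seen:
            return True, ops
        seen.add(x)
    return False, ops

def growth_exponent(algo: Callable[[List[int]], Tuple[bool, int]], n: int) -> float:
    """Estimate k in O(n^k) from operation counts at n and 2n on duplicate-free input."""
    _, small = algo(list(range(n)))
    _, large = algo(list(range(2 * n)))
    return math.log2(large / small)

print(f"pairwise: {growth_exponent(has_duplicate_pairwise, 500):.2f}")
print(f"hash set: {growth_exponent(has_duplicate_set, 500):.2f}")
```

The pairwise check reports an exponent of about 2 and the set-based one exactly 1, which is the kind of signal worth having before a routine meets production-sized inputs.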

The absolute refusal to court hyped features without accounting for their technical baggage is paramount. Competence in identifying Boolean failures or broken pivot tables when inundated by seemingly intractable, calculation-heavy input or rising CPU temperatures should take precedence. The developer who survives must not only run regression tests that keep outputs consistent within limited resources, but also maintain an evolving set of task-oriented workarounds drawn from repeated pattern experience.
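A regression check for a local model can start as simply as comparing fresh numeric outputs (for example, scores for a fixed prompt set) against a stored baseline within a tolerance. The sketch assumes outputs are lists of floats keyed by a test-case name; the case names, baseline values and tolerances are hypothetical.

```python
import math
from typing import Dict, List

def regression_failures(
    baseline: Dict[str, List[float]],
    current: Dict[str, List[float]],
    rel_tol: float = 1e-3,
    abs_tol: float = 1e-6,
) -> List[str]:
    """Return the names of cases whose outputs went missing or drifted beyond tolerance."""
    failures = []
    for name, expected in baseline.items():
        got = current.get(name)
        if got is None or len(got) != len(expected):
            failures.append(name)
        elif not all(math.isclose(g, e, rel_tol=rel_tol, abs_tol=abs_tol)
                     for g, e in zip(got, expected)):
            failures.append(name)
    return failures

baseline = {"greeting": [0.91, 0.05, 0.04], "math": [0.10, 0.85, 0.05]}
current = {"greeting": [0.9105, 0.05, 0.04], "math": [0.30, 0.60, 0.10]}
print(regression_failures(baseline, current))
```

The small wobble in `greeting` stays within tolerance, while the shift in `math` is flagged; tightening or loosening `rel_tol` is the main design decision, since quantized or nondeterministic kernels rarely reproduce outputs bit for bit.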

We must innovate by embracing dynamic distributed algorithms that sharply reduce overhead at the edge and deliver quick yet consistent processing. These approaches should be unforgiving of miscalculated deployment environments, where offline models amount to thinly veiled folly dressed up as high performance. It behooves developers to back their work with extensive unit testing and robust load balancing, lest devices habitually slip on the cold silicon tracks of degrading hardware. Training regimes focused on realistic behavior rather than academic curiosity and projections produce robust containers that sustain strong throughput, even under unforeseen duress.
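Load balancing can mean many things; the simplest version that adapts to uneven request costs is least-loaded dispatch, which sends each job to the worker with the smallest outstanding load. A sketch using a min-heap, assuming job costs are known up front (for instance, estimated from token counts); the worker names are hypothetical.

```python
import heapq
from typing import Dict, List

def assign_least_loaded(job_costs: List[float], workers: List[str]) -> Dict[str, List[int]]:
    """Greedily assign each job (by index) to the currently least-loaded worker."""
    heap = [(0.0, name) for name in workers]  # (current load, worker name)
    heapq.heapify(heap)
    assignment: Dict[str, List[int]] = {name: [] for name in workers}
    for job_id, cost in enumerate(job_costs):
        load, name = heapq.heappop(heap)      # least-loaded worker; ties broken by name
        assignment[name].append(job_id)
        heapq.heappush(heap, (load + cost, name))
    return assignment

print(assign_least_loaded([5, 1, 1, 1, 1, 1], ["gpu-0", "gpu-1"]))
```

Here one expensive job lands on `gpu-0` and the five cheap ones on `gpu-1`, leaving both with a load of 5. Sorting jobs by descending cost before dispatch (the longest-processing-time heuristic) usually tightens the balance further when job order is flexible.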

The emphasis is on pragmatism, nurturing a lineage of developers competent in data-oriented improvisation without the safety net of expansive server real estate. Acknowledge that concessions are often unavoidable artifacts of modern technical architecture, even at the frontier led by unrestrained offline models.

| Category | Open Source | Cloud API | Self-Hosted |
|---|---|---|---|
| Latency | 500 ms | 150 ms | 1000 ms |
| Compute Power | 60 GFLOPS | 200 TFLOPS | 120 GFLOPS |
| Memory Requirements | 40 GB RAM | Unlimited | 256 GB RAM |
| VRAM Usage | 16 GB VRAM | Virtualized | 80 GB VRAM |
| CUDA Version | CUDA 11.7 | CUDA 12.1 | CUDA 10.2 |
| Failure Rate | 3% | 0.1% | 5% |
| API Latency | N/A | 120 ms | N/A |
| Vector Database Failures | 8% | 1% | 15% |

Final Ph.D. Directive: REFACTOR all such models. Relocate processing loads back to edge clouds with streamlined API endpoints. If your algorithms can’t thrive in this distributed environment, maybe they were never that robust to begin with. Eliminate every local inefficiency. Stop deluding yourself with offline fantasies and accept that optimizing real-world deployments means living with network trade-offs.