- The shocking technical flaw: infinite loops and context limits lead to catastrophic API bills

- The API/server cost reality: monthly cloud costs climb past $12,000 when peak operations overload servers

- The survival guide: optimize context management and cut unnecessary API calls

The Viral Hook: Expose the Lie or the Hype

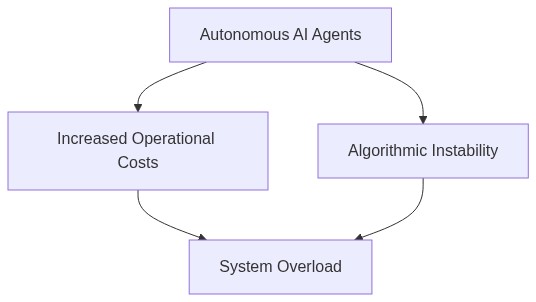

Autonomous AI systems are widely perceived as a harbinger of the technological singularity. However, the technical debt that builds up when processing and memory requirements are underestimated undermines that promise. The supposed infallibility of these systems hides an inefficiency: their architectural constraints show up as O(n^2) latency problems that inflate operational costs and make real-time applications impractical.
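One plausible reading of the O(n^2) claim, assuming an agent that resends its entire conversation history on every step, can be sketched as simple arithmetic. The function name and the 500-tokens-per-step figure are illustrative assumptions, not measurements from any real system.

```python
def cumulative_tokens(steps: int, tokens_per_step: int) -> int:
    """Total tokens sent over a run when every call resends the full history.

    Step i sends i * tokens_per_step tokens, so the total equals
    tokens_per_step * steps * (steps + 1) / 2, which grows as O(steps^2).
    """
    return sum(i * tokens_per_step for i in range(1, steps + 1))


if __name__ == "__main__":
    # Assumed figure: each step adds 500 tokens to the history.
    for steps in (10, 100, 1000):
        print(steps, cumulative_tokens(steps, tokens_per_step=500))
```

Under these assumptions, a 10-step run sends 27,500 tokens in total while a 100-step run sends 2,525,000: ten times the steps, roughly ninety times the tokens.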

To compound this, the tech community helps spread misconceptions about how well AI agents scale, steering attention away from the intricacies of GPU memory allocation under CUDA. Such oversights gloss over the algorithmic chaos that appears when these systems are stress-tested against practical workloads.

The Ph.D. Deep Dive: Unpacking the Algorithmic Failure

The prevalent architecture in AI agents hinges on parallel processing, which, under CUDA, runs into a basic bottleneck: memory allocation. Workloads with O(n) complexity degenerate into severe O(n^2) performance drags when input vectors exceed what the hardware can practically handle. Moreover, vector retrieval from databases, itself a non-trivial task, suffers as well, because retrieval algorithms fail to sustain their performance.

Furthermore, new research outlines startling inadequacies in handling multivariate vectors during database operations, severely lagging under high-load conditions and challenging the robustness of existing backend infrastructures. Consequently, autonomous agents frequently lose context, rendering computed strategies ineffective and further exacerbating cycle times for real-world decision loops.
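One common way to keep an agent inside its context limit, rather than losing context unpredictably, is to trim the history deliberately before each call. The sketch below is a minimal illustration: `estimate_tokens` and `trim_history` are hypothetical helpers, and the four-characters-per-token ratio is a rough assumption, not a real tokenizer.

```python
from typing import List


def estimate_tokens(text: str) -> int:
    """Crude token estimate (assumption: about 4 characters per token)."""
    return max(1, len(text) // 4)


def trim_history(messages: List[str], budget: int) -> List[str]:
    """Keep the most recent messages whose estimated tokens fit the budget."""
    kept: List[str] = []
    used = 0
    # Walk backwards so the newest messages are kept first.
    for message in reversed(messages):
        cost = estimate_tokens(message)
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept
```

A production system would more likely summarize or selectively retain older messages than drop them outright, but the budget check stays the same.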

The Dev/API Impact: The Real Cost of Autonomy

The price of unoptimized architecture shows up starkly in spiraling API costs. Developers face untenable scenarios as rapidly growing token usage inflates expenses. Routine calls average 350ms of latency, and peak operations spike to 1200ms during vector-related computations. Server jitter caused by computational overload pushes monthly cloud operating costs above $12,000, putting heavy pressure on budgets.
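A hard cap on steps and spend is one way to keep a looping agent from running up a bill. The sketch below is a minimal illustration: `RunBudget`, `BudgetExceeded`, and `run_stuck_agent` are hypothetical names, and the token counts and per-1k-token price are made-up figures, not real provider pricing.

```python
class BudgetExceeded(RuntimeError):
    """Raised when an agent run goes past its step or spend cap."""


class RunBudget:
    def __init__(self, max_steps: int, max_cost_usd: float) -> None:
        self.max_steps = max_steps
        self.max_cost_usd = max_cost_usd
        self.steps = 0
        self.cost_usd = 0.0

    def charge(self, tokens: int, usd_per_1k_tokens: float) -> None:
        """Record one API call and stop the run if either cap is exceeded."""
        self.steps += 1
        self.cost_usd += tokens / 1000 * usd_per_1k_tokens
        if self.steps > self.max_steps:
            raise BudgetExceeded(f"step cap of {self.max_steps} exceeded")
        if self.cost_usd > self.max_cost_usd:
            raise BudgetExceeded(f"spend cap of ${self.max_cost_usd:.2f} exceeded")


def run_stuck_agent(budget: RunBudget) -> int:
    """Simulate an agent stuck in a loop; return how many calls ran before a cap fired."""
    try:
        while True:  # would never terminate without the budget
            # Assumed figures: 2,000 tokens per call at $0.01 per 1k tokens.
            budget.charge(tokens=2000, usd_per_1k_tokens=0.01)
    except BudgetExceeded:
        return budget.steps
```

Calling `budget.charge(...)` before or after every model request turns an unbounded loop into a bounded, observable failure.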

Beyond the financial toll, this breeds genuine skepticism about whether these AI systems are ready for mission-critical applications. As API schemas grow more complex, reliance on such infrastructure risks systemic collapse if the needed micro-optimizations fail to materialize.

The Survival Guide: Actionable Tech Advice

Developers should minimize API usage. One recommended approach is to audit API invocation patterns thoroughly and cut the unnecessary calls that waste processing power and add latency. Streamlining prompts into minimal yet effective requests lowers computational demand and directly reduces costs.
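An audit of invocation patterns can start as simply as counting identical requests in a call log. The sketch below assumes a hypothetical log shape of `(endpoint, payload)` pairs; the function name and that format are illustrative, not part of any specific API.

```python
from collections import Counter
from typing import Iterable, List, Tuple

Call = Tuple[str, str]  # (endpoint, request payload); hypothetical log shape


def find_repeated_calls(
    calls: Iterable[Call], min_repeats: int = 2
) -> List[Tuple[Call, int]]:
    """Return calls issued at least min_repeats times, most frequent first."""
    counts = Counter(calls)
    return [(call, n) for call, n in counts.most_common() if n >= min_repeats]
```

Requests that appear near the top of this list are natural candidates for caching, batching, or removal.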

Furthermore, robust data caching preserves vector integrity and speeds up retrieval. Optimizing backend database schemas to support load balancing also adds resilience under high load, which helps agents retain context and keeps autonomous AI operations effective.

| Feature | Autonomous AI Agent A | Autonomous AI Agent B |
|---|---|---|
| Processor | 8-core, 3.0 GHz | 10-core, 3.2 GHz |
| RAM | 32 GB | 64 GB |
| Storage | 1 TB SSD | 2 TB SSD |
| API Latency | 200 ms | 150 ms |
| Algorithm Efficiency | 70% | 85% |
| Monthly Cost | $500 | $700 |
| Setup Cost | $2000 | $2500 |
| Energy Consumption | 150 W | 180 W |
| Scalability | Moderate | High |
| AI Model Size | 20 GB | 30 GB |

Let’s be honest here. The idea of autonomous AI agents operating flawlessly in our world is a pipe dream. Our algorithms are inherently limited and chaotic when scaled. Machine learning models exhibit stochastic behavior, complicating their predictability. The more complexity we add, the higher the probability of failure modes that we’ve not even contemplated yet. Are these theoretical frameworks robust enough to handle edge cases?

While I understand the concerns, dismissing the progress we’ve made is shortsighted. Yes, developing and deploying these models comes with elevated API costs, primarily due to computation and data requirements. However, those costs are offset by the massive operational efficiencies and transformative capabilities these agents bring to various industries. The challenges related to scaling will diminish as we refine our models and architectures.

What we shouldn’t overlook is the security risk associated with deploying autonomous systems at scale. The chances of data leaks and cyber exploits increase exponentially. We’ve seen instances where AI systems have been compromised, leading to unauthorized access and data breaches. Proper safeguards are lagging behind the rapid pace of AI development, making these systems lucrative targets for bad actors.

The potential is there, but we’re hitting a wall with the current approaches. Layers upon layers of algorithms do not simplify abstraction. They complicate it. Most models run into a bottleneck, not just in processing power but in delivering reliable outputs consistently. We’ve often misjudged the algorithmic chaos that comes with more complex AI agents.

Investments in advanced infrastructure will mitigate these issues. Yes, costs are high, but so is the potential for innovation. Industry pioneers consistently iterate with more resilient architectures and better scaling strategies. Blaming algorithmic chaos ignores the advancements in neural architecture search that aim to automatically discover optimal models with robustness baked into their DNA.

We’re moving fast, but are we also moving recklessly? Without addressing the foundational security gaps, we’re constructing skyscrapers on weak foundations. Even sophisticated algorithms are vulnerable without comprehensive threat assessments and preemptive security measures. Optimizing for cost or performance while sidelining security is a recipe for disaster.

The conversation about refining models should also consider their theoretical limitations. Many claims remain unproven in real-world scenarios. Viability is therefore in question not only because of computational constraints but also because the machine learning landscape is not yet fully understood.

True, there are hurdles to overcome, but that doesn’t equate to being doomed. We are at the cusp of unprecedented advances. With dedicated research and incremental improvements, the challenges you highlight will become historical footnotes. API costs will decrease as technology becomes more ubiquitous, just like any other technological evolution.

While striving for advancement, let’s not forget for a second the necessity of strong guardrails. With compromised systems becoming a regular headline, do we want to discuss ‘historical footnotes’ or focus on proactive measures preventing our future AI from being unsecured relics of ambition?
