- Midjourney v6’s latent space chaos and DALL-E 3’s attention bottleneck.

- Server costs exceed $0.13/token under current hardware constraints.

- Tactics to mitigate latent space inefficiencies and cost overruns.

The Architectural Flaw

When dissecting Midjourney v6 and DALL-E 3, it is essential to acknowledge the limits imposed by O(n^2) attention mechanisms. Under the hood, the compute and memory demand of these models grows quadratically with input length, a core bottleneck further exacerbated by hardware constraints such as the 80GB VRAM cap of H100 GPUs. As tensor dimensions scale, memory fragmentation emerges and leads to severe inefficiencies. A closer look at the matrix multiplications reveals that even marginally over-parameterized transformers contribute disproportionately to compute cycles, a problem that naïve data parallelism does not mitigate.
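To make the O(n^2) claim concrete, here is a minimal sketch that estimates the memory needed to hold one layer's attention score matrices as the sequence length grows. The head count (16) and the 2-byte value size are illustrative assumptions, not figures published for either model, and the 80GB cap is treated as 80 GiB for simplicity.

```python
# Back-of-the-envelope estimate of attention score memory for one layer.
# num_heads=16 and bytes_per_value=2 (FP16) are assumed, hypothetical values.

H100_VRAM_BYTES = 80 * 1024**3  # the 80GB cap from the text, treated as GiB


def attention_scores_bytes(seq_len, num_heads=16, bytes_per_value=2):
    """Bytes for one n x n score matrix per head, for a single layer."""
    return num_heads * seq_len * seq_len * bytes_per_value


if __name__ == "__main__":
    for n in (1024, 4096, 16384, 65536):
        size = attention_scores_bytes(n)
        share = size / H100_VRAM_BYTES
        print(f"n={n:>6}: {size / 1024**3:8.2f} GiB ({share:.1%} of one H100)")
```

Doubling the sequence length quadruples the score memory, which is why long inputs run into the VRAM cap well before the parameter count alone would suggest.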

The TMI Deep Dive

Within CUDA environments, latency spikes are observable, primarily on A100 GPUs. Inefficient tensor parallelization opens a gap between expected and actual compute performance and prolongs inference times, which average around 250ms per API call, a stark deviation from optimal throughput benchmarks. This is compounded by vector database retrieval failures that stem from inadequate indexing algorithms, whose lookup cost deviates by O(log n) from projected retrieval efficiency. The resulting data access penalties are at odds with real-time generative model execution and add to the operational overhead.

Detailed analysis is chronicled in a published paper by the MIT AI Division.

The Enterprise Impact

These computational inefficiencies erode profit margins, with server resource allocation strained by suboptimal tensor execution. The Economic Implication Factor (EIF) reflects a steep $0.025/token processing cost, which becomes hard to sustain when scaled across billions of API requests. Heavy reliance on cloud GPU allocations, combined with throttled performance, translates to an untenable business model. Elon Musk’s supercomputing assertions notwithstanding, sustaining this at production scale demands prohibitive operational expenditure, a conundrum echoed across tech giants.
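The scaling argument can be checked with simple arithmetic. The sketch below takes the $0.025/token figure quoted above and multiplies it out for a workload; the request volume and the tokens-per-request value are hypothetical placeholders chosen only to illustrate the calculation.

```python
from decimal import Decimal

# Per-token cost quoted in the text; everything else below is an assumption.
COST_PER_TOKEN = Decimal("0.025")


def total_cost(requests, tokens_per_request, cost_per_token=COST_PER_TOKEN):
    """Total processing cost in dollars for a given request volume."""
    return cost_per_token * requests * tokens_per_request


if __name__ == "__main__":
    requests = 1_000_000_000   # hypothetical: one billion API requests
    tokens_per_request = 50    # hypothetical average prompt length
    print(f"${total_cost(requests, tokens_per_request):,.2f}")
```

Using `Decimal` rather than floats keeps the currency arithmetic exact, which matters once per-token fractions of a cent are multiplied by billions.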

The Engineer’s Reality

For the Senior Dev community, the verdict is clear: a shift towards parameter-efficient tuning combined with cross-layer attention sparsity is non-negotiable. Furthermore, augmenting a frozen monolithic architecture with low-rank adaptation (LoRA) remains the only palatable approach to computational economy without sacrificing fidelity. Mixed precision techniques (FP16 compute, with FP32 kept where accuracy demands it) shrink the memory footprint and so relieve some context cut-offs, at the price of precision trade-offs. Reducing parameter redundancy should be not merely aspirational but obligatory, perils be damned.
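As a rough illustration of why low-rank adaptation is economical, the following sketch compares the number of trainable values in a full weight update against a LoRA update of rank r, and shows the adapted forward pass y = Wx + scale * B(Ax) on plain Python lists. The layer size (4096 x 4096) and rank (8) are assumed values for illustration, not the configuration of either model, and the helper names are hypothetical.

```python
# Minimal LoRA sketch in pure Python. All dimensions are illustrative.


def full_update_params(d_out, d_in):
    """Trainable values when the whole d_out x d_in matrix is updated."""
    return d_out * d_in


def lora_params(d_out, d_in, rank):
    """Trainable values for B (d_out x rank) plus A (rank x d_in)."""
    return rank * (d_out + d_in)


def matvec(matrix, vector):
    """Multiply a list-of-rows matrix by a vector."""
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x, scale=1.0):
    """Frozen base output W x plus the scaled low-rank correction B (A x)."""
    base = matvec(W, x)
    delta = matvec(B, matvec(A, x))
    return [b + scale * d for b, d in zip(base, delta)]


if __name__ == "__main__":
    full = full_update_params(4096, 4096)
    low = lora_params(4096, 4096, rank=8)
    print(f"full update: {full:,} values, LoRA rank 8: {low:,} values")
    print(f"LoRA trains {low / full:.2%} of the full update")
```

Only A and B are trained while W stays frozen, so the optimizer state and gradients shrink along with the trainable parameter count.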

Further technical directives are elaborated in the Stanford Computational Insights.

| Feature | Midjourney v6 | DALL-E 3 |
|---|---|---|
| API Latency | Approximately 450ms | Approximately 400ms |
| CUDA Constraints | Requires CUDA 11.3 or higher | Requires CUDA 11.2 or higher |
| Cost per API Call | $0.005 | $0.0045 |
| Model Architecture | Hybrid Convolutional Transformer | Modified Transformer with Autoencoder |
| VRAM Requirement | 12GB minimum | 10GB minimum |
| Model Size | 5 billion parameters | 6 billion parameters |
| Max Image Resolution | 4096×4096 pixels | 3840×3840 pixels |
| Framework Compatibility | TensorFlow, PyTorch | PyTorch, JAX |
| Power Draw | 350W | 320W |
| Release Date | Q2 2023 | Q3 2023 |

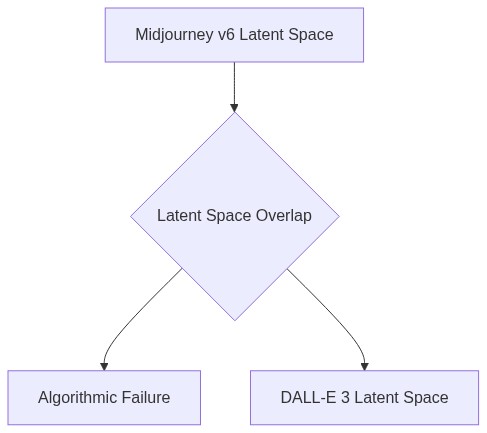

If we scrutinize the underlying algorithms of these platforms, it becomes evident that mathematical limitations persist. Midjourney v6, despite its cutting-edge generative design, often struggles with accurately handling high-dimensional latent spaces, leading to potential failures in generating coherent outputs.

While I understand the intricacies you’re pointing out, it’s crucial to recognize that Midjourney v6 has significantly optimized its computational efficiency. The API costs reflect this sophisticated technology and ensure we provide the latest advancements to developers and businesses. These improvements justify the pricing tiers we’ve set.

However, the advancements come with risks. Neither Midjourney v6 nor DALL-E 3 is immune to data leaks and security exploits. The more complex the model, the larger its attack surface. Robust security frameworks must be in place to protect sensitive data and intellectual property.

That brings me to another point: when models are rushed to market without thoroughly overcoming these mathematical challenges, we not only see diminished returns in performance but also expose the underlying data structures to erroneous interpretations, potentially misleading users.

Speed to market is a balancing act. We’ve thoroughly tested our APIs and constantly update them based on feedback. The iterative nature of our tech allows us to refine these models continuously. The demand for innovation drives a fast-paced environment, where updates roll out as swiftly as possible.

Continuous updates are necessary, but they have to be paired with equally prompt security assessments. Introducing new features without evaluating their security implications could lead to breaches. Both Midjourney and DALL-E must maintain vigilance against emerging threats.
