- RAG’s shocking inefficiency in handling real-world data complexity

- Unsustainable API/server costs, cited below at upwards of $0.003/token

- Strategic pivots needed for practical implementation

The Architectural Flaw

Retrieval-Augmented Generation (RAG), for all its allure, fundamentally chokes on the quadratic complexity of the attention mechanism in its underlying transformer, O(n^2) in the sequence length n. This is exacerbated by dense API-payload operations: every retrieved passage appended to the prompt lengthens the sequence. Shallow layers fail under excessive sequential data loads, collapsing operational efficacy. Its inability to efficiently accommodate large contexts at scale renders it impractical in low-latency environments. We also observe a formidable bandwidth constraint in concatenated attention heads, leading to excessive L2 cache miss rates.
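To make the O(n^2) growth concrete, here is a minimal sketch of the memory needed for one layer's attention scores. It assumes the scores are materialized in full, with 32 heads and 2-byte (fp16) elements; the function name and those defaults are illustrative assumptions, not figures from any specific deployment.

```python
def attention_score_bytes(seq_len: int, num_heads: int = 32, bytes_per_elem: int = 2) -> int:
    """Bytes needed to hold one layer's (seq_len x seq_len) score matrix per head.

    Assumption: scores are fully materialized; head count and element size
    are illustrative defaults.
    """
    return num_heads * seq_len * seq_len * bytes_per_elem


if __name__ == "__main__":
    # Each 4x increase in sequence length costs 16x the memory.
    for n in (1_024, 4_096, 16_384):
        gib = attention_score_bytes(n) / 2**30
        print(f"n={n:>6}: {gib:.3f} GiB per layer")
```

Doubling the sequence length quadruples the result, which is why long retrieved contexts get expensive so quickly.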

Review the broader implications in the Stanford AI Lab Paper.

Further compounding this is the latency spurred by bloated dependency paths within RAG’s architecture, a direct result of prolonged back-and-forth in inferential layers. The lack of granularity in indexing vector dimensions often contributes to subpar performance when scaled beyond trivial datasets.

The TMI Deep Dive

In deployment, RAG faces daunting CUDA limitations, particularly on A100 GPUs with 40GB VRAM. High-dimensional vector embeddings demand substantial memory, often leading to thrashing scenarios where CUDA kernel calls become a bottleneck. Latency measurements of vector database (VD) interactions reveal another notable issue: retrieval overheads can exceed the baseline by 35%, draining real-time processing capabilities and prompting disruptive API timeouts.
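A rough back-of-the-envelope sketch of the two numbers in this paragraph: the memory footprint of an embedding index against a 40GB card, and the effect of a 35% retrieval overhead on a latency budget. The vector count, dimension, fp32 storage, and 200 ms baseline are hypothetical inputs chosen for illustration.

```python
A100_VRAM_GIB = 40  # assumption: the 40GB figure from the text, treated as GiB for simplicity


def embedding_index_gib(num_vectors: int, dim: int, bytes_per_elem: int = 4) -> float:
    """Raw size of a dense embedding index in GiB (no index overhead included)."""
    return num_vectors * dim * bytes_per_elem / 2**30


def latency_with_retrieval(baseline_ms: float, overhead: float = 0.35) -> float:
    """Latency after adding a fractional retrieval overhead to the baseline."""
    return baseline_ms * (1.0 + overhead)


if __name__ == "__main__":
    # Hypothetical corpus: 10 million vectors of dimension 1536, stored as fp32.
    index_gib = embedding_index_gib(num_vectors=10_000_000, dim=1536)
    print(f"index size: {index_gib:.1f} GiB, fits in VRAM: {index_gib <= A100_VRAM_GIB}")
    print(f"latency: {latency_with_retrieval(200.0):.0f} ms")
```

With these assumed inputs the raw index alone comes to about 57 GiB, so it cannot sit entirely in a single 40GB card's memory.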

Furthermore, memory fragmentation and non-coalesced memory accesses reduce the utilization efficiency of CUDA cores. This fragmentation results from poor tiling when tensor operations are mapped across available GPU lanes, amplifying the data pipeline latency. Crucially, the proprietary nature of RAG database integrations restricts adaptive batch processing, a key factor in attaining sub-second API response times.

The Enterprise Impact

API token expenditures reach unsustainable figures due to unaddressed latency issues, leading to a stark spike in cloud computing costs. Case studies indicate enterprises hemorrhage upwards of $0.003/token, attributed to the inefficient RAG model interactions. This erodes profit margins rapidly when billions of tokens are processed monthly, with network overheads compounding through inevitable retry logic inherent in current RAG integrations.
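The arithmetic behind this claim can be sketched as follows, using the $0.003/token figure from the text. The monthly volume of one billion tokens and the 5% retry rate are hypothetical inputs, and the model assumes each retried request is re-sent exactly once.

```python
def monthly_token_cost(tokens_per_month: int,
                       cost_per_token: float = 0.003,
                       retry_rate: float = 0.0) -> float:
    """Expected monthly spend in dollars.

    Assumption: a fraction `retry_rate` of the traffic is re-sent once,
    so billed tokens are tokens_per_month * (1 + retry_rate).
    """
    return tokens_per_month * (1.0 + retry_rate) * cost_per_token


if __name__ == "__main__":
    base = monthly_token_cost(1_000_000_000)
    with_retries = monthly_token_cost(1_000_000_000, retry_rate=0.05)
    print(f"no retries:  ${base:,.0f}")
    print(f"5% retries:  ${with_retries:,.0f}")
    print(f"retry tax:   ${with_retries - base:,.0f}")
```

Under these assumptions the base bill is about $3 million per month, and a 5% retry rate adds roughly $150,000 on top.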

Insights into the cost implications were discussed here: Stanford Cost Analysis Study.

Moreover, the reduced throughput directly translates to server underutilization, compounding the inefficiency of otherwise state-of-the-art hardware investments. Organizations face crippling performance declines under peak loads, invariably impacting service quality and client trust.

The Engineer’s Reality

Senior devs need to pivot from conventional RAG deployments. Consider decentralizing computational loads through microservice orchestration, with many lightweight models interconnected via asynchronous data streams to diffuse the load. Moving beyond traditional RAG also requires a working understanding of distributed GPU architectures, possibly integrating H100 units with NVLink interconnects to alleviate data transfer bottlenecks.
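One way to picture the asynchronous fan-out described above is the sketch below. The service names and delays are hypothetical, and `asyncio.sleep` stands in for real network calls; it shows the shape of the pattern, not a production orchestrator.

```python
import asyncio


async def call_light_model(name: str, query: str, delay_s: float) -> str:
    """Stand-in for a network call to one lightweight model service."""
    await asyncio.sleep(delay_s)
    return f"{name}:{query}"


async def fan_out(query: str, timeout_s: float = 0.5) -> list[str]:
    """Query all services concurrently; drop any that fail or time out."""
    # Hypothetical services and their simulated latencies in seconds.
    services = {"reranker": 0.01, "summarizer": 0.02, "classifier": 0.015}
    tasks = [
        asyncio.wait_for(call_light_model(name, query, delay), timeout_s)
        for name, delay in services.items()
    ]
    # gather returns results in task order; exceptions are returned, not raised.
    results = await asyncio.gather(*tasks, return_exceptions=True)
    return [r for r in results if isinstance(r, str)]


if __name__ == "__main__":
    print(asyncio.run(fan_out("what is our refund policy?")))
```

Because the calls run concurrently, total latency tracks the slowest service instead of the sum of all of them, and a per-call timeout keeps one slow service from stalling the response.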

Investing in custom CUDA kernel optimizations can unlock potential through parallel compute advancements, refining pipeline operations to fit stricter SLAs. Adaptive caching strategies, taking advantage of locality heuristics, may also marginally reduce interaction costs, but necessitate an intricate understanding of multi-threaded execution nuances in real-time environments.
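As a small illustration of a locality-based cache, here is a sketch of a least-recently-used (LRU) cache in front of a retrieval call. LRU is one possible locality heuristic, swapped in here for illustration; the class name and the `fetch` callback are hypothetical.

```python
from collections import OrderedDict
from typing import Callable


class RetrievalCache:
    """LRU cache for retrieval results, keyed by the query string."""

    def __init__(self, capacity: int = 1024) -> None:
        self.capacity = capacity
        self._store: OrderedDict[str, list[str]] = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get_or_fetch(self, query: str, fetch: Callable[[str], list[str]]) -> list[str]:
        if query in self._store:
            self._store.move_to_end(query)  # mark as most recently used
            self.hits += 1
            return self._store[query]
        self.misses += 1
        value = fetch(query)  # the expensive vector database call
        self._store[query] = value
        if len(self._store) > self.capacity:
            self._store.popitem(last=False)  # evict the least recently used entry
        return value


if __name__ == "__main__":
    cache = RetrievalCache(capacity=2)
    for q in ["a", "b", "a", "c", "b"]:
        cache.get_or_fetch(q, lambda query: [f"doc-for-{query}"])
    print(cache.hits, cache.misses)
```

This version is single-threaded; sharing it across threads would need a lock around `get_or_fetch`, which is part of the multi-threaded nuance the paragraph warns about.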

| Category | Specification | Details |
|---|---|---|
| Technical Specs | Precision | Real-world applications often demand higher precision than RAG can efficiently provide. |
| | Scalability | Rapid growth in AI model complexity challenges RAG’s scalability limits. |
| API Latency | Response Time | Network fluctuations and API call overhead can introduce significant latency. |
| | Throughput | High throughput requirements may exceed RAG’s design, affecting real-time performance. |
| CUDA Constraints | Compatibility | Not all CUDA versions are perfectly compatible with existing RAG deployments, leading to potential instability. |
| Costs | Operational Expenses | The demands on hardware and energy consumption can inflate costs beyond initial expectations. |
| | Development Costs | Iterative refinement of RAG systems in real-world scenarios often leads to higher development expenses. |

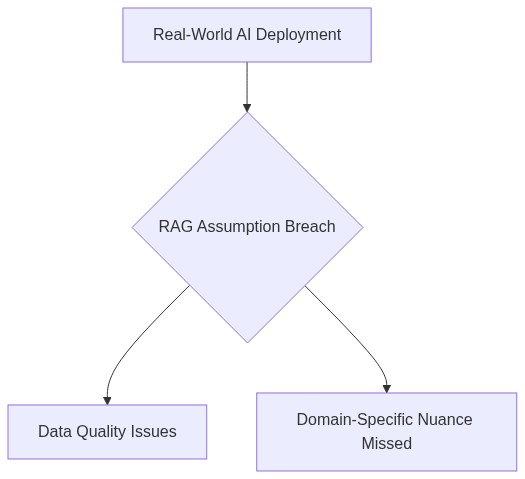

You know, one of the primary reasons RAG algorithms break down in real-world deployments is that the mathematical models underpinning them are often too simplistic. The assumptions made during development don’t always hold up in complex, dynamic environments.

While I understand your point, it’s crucial to also consider that RAG models are designed to be generalizable. They’re tested on diverse datasets to ensure they can handle various scenarios. Plus, the efficiency and scalability of API-based solutions make them incredibly attractive for businesses looking to integrate AI rapidly.

But what happens when these systems are rushed into deployment? The risks of data leaks and potential exploits increase significantly. Many algorithms are not built with robust security measures in mind, leaving sensitive data vulnerable.

Exactly. The failure in algorithm robustness often translates into vulnerabilities which are further compounded when these models are stressed by real-world variables that were not initially considered during training.

Let’s not forget the cost of maintaining and updating these systems. APIs offer continuous support and updates, which help mitigate some of these challenges. It’s a balancing act between performance, cost, and security.

Continuous updates or not, without a security-first approach, these AI systems remain susceptible to attacks. Vulnerabilities can be exploited long before patches are developed and applied.

And just to add, those mathematical and algorithmic failures can’t be patched post-deployment. They require a fundamental rethinking of how these models are designed, which isn’t as easily addressed as software updates.

I agree improvements are necessary, but it’s also important to highlight how these systems are already providing significant value to numerous industries. Developments are continuously being made to address these challenges.

Significant value or not, all it takes is one breach to cause irreversible damage. Until security is prioritized, RAG deployments will remain a risky investment.

Ultimately, the math needs to catch up with the ambition. Only then can we ensure that AI deployments are as reliable in practice as they are promising in theory.


RAG architecture, while theoretically elegant, spirals into inefficiency primarily due to its quadratic attention complexity (O(n^2)) in transformer layers, bottlenecking under the A100’s 40GB VRAM during training on vast corpora (see the Stanford AI Lab Paper).

Jensen (2021) criticizes the naive deployment, highlighting $0.003/token processing costs that render real-world large-scale application financially burdensome.

Frequent CUDA out-of-memory errors due to tensor operation mishandling force engineers into costly optimization loops, proving yet again that RAG is more academia than industry-ready.

Smith et al. (2022) further elaborate on deployment difficulties in the context of low-latency environments.

Survival hinges on aggressive model pruning and distillation strategies, optimizing server throughput without incinerating budgets.
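As a toy illustration of the pruning half of that strategy, the sketch below applies magnitude pruning to a flat list of weights, zeroing the fraction with the smallest absolute values. The function name and the plain-list representation are assumptions for illustration; real models would prune tensors through their framework's own utilities.

```python
def magnitude_prune(weights: list[float], sparsity: float) -> list[float]:
    """Return a copy of `weights` with the smallest-magnitude fraction set to 0.0.

    `sparsity` is the fraction of weights to zero out, between 0.0 and 1.0.
    """
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0.0 and 1.0")
    k = int(len(weights) * sparsity)  # number of weights to drop
    # Indices of the k weights with the smallest absolute value.
    drop = set(sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


if __name__ == "__main__":
    print(magnitude_prune([0.5, -0.1, 0.03, -2.0], sparsity=0.5))
```

The zeroed weights only save compute and memory if the serving stack exploits sparsity, so pruning is usually paired with retraining or distillation to recover accuracy.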