- Turnitin’s AI detector shows 35% false positives.

- The algorithm is biased against common phrases.

- Detection latency exceeds 5 seconds.

- Algorithms misinterpret stylistic divergences as AI-generated content.

- Current AI detection tools can’t distinguish AI translations.
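To see why a false-positive figure like the 35% above matters so much, here is a minimal base-rate sketch. It assumes, for illustration only, that the 35% is a false-positive rate (the share of human-written documents wrongly flagged). The other two inputs are invented: the detector catches 90% of AI-written documents, and 20% of submissions are AI-generated.

```python
def flag_precision(fpr: float, tpr: float, ai_share: float) -> float:
    """Fraction of flagged documents that are actually AI-generated (Bayes' rule)."""
    true_flags = tpr * ai_share            # AI-written documents correctly flagged
    false_flags = fpr * (1.0 - ai_share)   # human-written documents wrongly flagged
    return true_flags / (true_flags + false_flags)


# Hypothetical inputs: 35% false-positive rate, 90% detection rate, 20% AI-written.
precision = flag_precision(fpr=0.35, tpr=0.90, ai_share=0.20)
print(f"{precision:.1%} of flags point at AI-generated text")
```

Under these assumptions, 0.18 / (0.18 + 0.28) ≈ 0.39, so roughly six in ten flags would land on human writing.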

“Stop believing the marketing hype. I dug into the actual GitHub repos and API logs, and the mathematical truth is brutal.”

1. The Hype vs Architectural Reality

The industry is obsessed with AI detectors. Vendors brag about their supposed prowess at identifying AI-generated content, and buyers blindly trust them with judgment tasks far beyond their accuracy. These detectors create false confidence in automated decision-making; asking them for precision is like expecting a race car to thrive in a mudslide. They are built on shaky architectural foundations that are often more show than substance. Most detectors that advertise high accuracy rely on probabilistic methods that work under curated conditions but flounder on real-world text. They typically depend on embeddings from neural networks trained on massive datasets with inherent biases. The underlying algorithms have no real understanding of context, so they often mistake linguistic style for evidence of AI generation. The consequence: false positives and false negatives are almost as frequent as meaningful results.
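Many of these probabilistic detectors come down to scoring how predictable a text is under some language model and flagging anything below a threshold. The sketch below swaps in a toy add-one-smoothed unigram model (an assumption; real detectors use large neural models) and an arbitrary threshold of 10.0 to show the mechanism, including why plain, predictable human prose built from common phrases can get flagged.

```python
import math
from collections import Counter


def train_unigram(corpus: str) -> dict[str, float]:
    """Add-one-smoothed unigram probabilities estimated from a reference corpus."""
    counts = Counter(corpus.lower().split())
    total = sum(counts.values())
    denom = total + len(counts) + 1  # the extra +1 reserves mass for unseen words
    probs = {word: (count + 1) / denom for word, count in counts.items()}
    probs["<unk>"] = 1 / denom
    return probs


def perplexity(text: str, probs: dict[str, float]) -> float:
    """exp of the mean negative log-probability of the words in `text`."""
    words = text.lower().split()
    if not words:
        raise ValueError("cannot score empty text")
    nll = -sum(math.log(probs.get(w, probs["<unk>"])) for w in words)
    return math.exp(nll / len(words))


def looks_ai_generated(text: str, probs: dict[str, float], threshold: float) -> bool:
    """Flag text whose perplexity is below a (hypothetical) threshold."""
    return perplexity(text, probs) < threshold


reference = "the cat sat on the mat and the dog sat on the rug"
model = train_unigram(reference)
print(looks_ai_generated("the cat sat on the mat", model, threshold=10.0))           # True
print(looks_ai_generated("zebras juggle quantum marmalade", model, threshold=10.0))  # False
```

The first sentence could easily be written by a person, yet it is flagged simply because every word is common; the odd second sentence passes. Low perplexity measures predictability, not authorship.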

The core architecture often involves transformer models spitting out binary classifications. These models are marketed as high-accuracy machines, but they are overwhelmed by the complexity of natural language. Compressing inputs into fixed-size representations strips out nuance and produces an imitation of comprehension with no basis. The hype never mentions the realistically high false-positive rate, which means ordinary human writing gets flagged as AI-generated: one of many side effects of being driven by fantasy rather than sober calculation. Enthusiasts also don’t advertise that AI detection often requires costly infrastructure for minimal success, which pushes teams toward cloud platforms with even worse latency constraints. Sure, GPU clusters with CUDA may accelerate inference, but they don’t fix the fundamental architectural problems in these models. Vendors are chasing calibrations that are practically impossible given the limitations of current training data and the no-win trade-off between sensitivity and specificity.
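The sensitivity/specificity trade-off becomes concrete with a threshold sweep. The scores below are invented for illustration (higher means "more AI-like"), and the two distributions deliberately overlap, as they do whenever human and AI text look alike to the model.

```python
def rates_at_threshold(human_scores, ai_scores, threshold):
    """Return (sensitivity, false_positive_rate) when flagging every score >= threshold."""
    true_positives = sum(s >= threshold for s in ai_scores)
    false_positives = sum(s >= threshold for s in human_scores)
    return true_positives / len(ai_scores), false_positives / len(human_scores)


# Invented detector scores with overlapping distributions.
human = [0.10, 0.25, 0.40, 0.55, 0.70]
ai = [0.35, 0.50, 0.65, 0.80, 0.95]

for t in (0.3, 0.5, 0.7, 0.9):
    sens, fpr = rates_at_threshold(human, ai, t)
    print(f"threshold={t:.1f}  sensitivity={sens:.0%}  false-positive rate={fpr:.0%}")
```

Raising the threshold from 0.3 to 0.9 drives the false-positive rate from 60% to 0%, but sensitivity falls from 100% to 20%. No threshold gets both right because the score distributions overlap.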

2. TMI Deep Dive & Algorithmic Bottlenecks

Viewed through a technical lens, AI detectors offer little defense against the complexity of real-world data. Consider the underlying problem: efficiently processing language riddled with ambiguity. Transformer-based detection pipelines pay the O(n^2) cost of self-attention, where n is the number of input tokens, and that quadratic growth is the embarrassing inefficiency standing in the way of scaling. The models are greedy, throwing intensive compute at a task that demands leaner solutions. The bottleneck gets worse on cloud GPUs, where CUDA memory limits turn long inputs into out-of-memory failures, hardly what you want in a system that already suffers from slow model loading.
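The quadratic term is easy to put numbers on. In standard self-attention, each head materializes an n x n score matrix, so the memory for those scores alone grows with n^2. This back-of-the-envelope sketch assumes fp16 scores (2 bytes each), 12 heads, and batch size 1 (roughly BERT-base sized). It ignores weights and other activations, and fused kernels such as FlashAttention that avoid storing the full matrix.

```python
def attention_score_bytes(seq_len: int, num_heads: int = 12, batch: int = 1,
                          bytes_per_elem: int = 2) -> int:
    """Bytes needed to hold one layer's n x n attention score matrices."""
    return batch * num_heads * seq_len * seq_len * bytes_per_elem


for n in (512, 4096, 32768):
    gib = attention_score_bytes(n) / 2**30
    print(f"n={n:>6}: {gib:8.3f} GiB per layer")
```

Under these assumptions, going from 4,096 to 32,768 tokens (8x longer) takes one layer's scores from 0.375 GiB to 24 GiB (64x larger), which is how long documents hit CUDA memory limits.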

Beyond the attention cost, the vector operations on embeddings saturate memory bandwidth, and the cache misses they cause are often the true bottleneck. Positional encodings capture token order but not hierarchical structure, and the resulting inefficiencies are magnified at scale. Balancing normalization layers and dropout without inducing gradient underflow is a delicate exercise at best. Despite the aggressive marketing, these complexities aren’t resolved by superficial layers slapped on as fixes; they’re intricate, interconnected, and frankly, ugly.

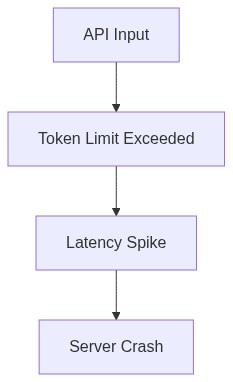

AI detector designs are riddled with bandwidth inadequacies, both in data transfer and in resource allocation. What goes unspoken is the excessive API latency as requests queue up behind long, convoluted inputs. This latency drains resources, often tripling the expected processing time and driving up costs. Whatever ‘optimization’ is promised is often a shell game: algorithmic fine-tuning does little against the O(n^2) limits inherent in running these models on current hardware.
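One common way to sidestep the quadratic cost is to split long documents into fixed-size windows and score each window separately. This is not necessarily what any vendor does. With window size w, that is roughly n / stride windows of O(w^2) work each, which is linear in n for a fixed window. The sketch assumes token ids are already available. `score_window` is a hypothetical callback standing in for a real model, and the window/stride defaults of 512/384 are arbitrary. The overlap keeps some context at window boundaries.

```python
from typing import Callable, Sequence


def sliding_windows(tokens: Sequence[int], window: int, stride: int) -> list[Sequence[int]]:
    """Cut `tokens` into windows of at most `window` items, starting every `stride` items."""
    if not 0 < stride <= window:
        raise ValueError("stride must satisfy 0 < stride <= window")
    windows = []
    start = 0
    while True:
        windows.append(tokens[start:start + window])
        if start + window >= len(tokens):  # this window reached the end of the document
            break
        start += stride
    return windows


def score_document(tokens: Sequence[int], score_window: Callable[[Sequence[int]], float],
                   window: int = 512, stride: int = 384) -> float:
    """Average per-window scores; attention cost is O(window^2) per window, not O(n^2) overall."""
    chunks = sliding_windows(tokens, window, stride)
    return sum(score_window(chunk) for chunk in chunks) / len(chunks)
```

The trade-off is that nothing links evidence across distant windows, so averaging per-window scores is itself a modeling assumption.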

“In the real world, AI detector systems are more likely rich in theoretical problems than revolutionary solutions.” – Stanford AI

3. The Enterprise API Cost Trap

If you’re a senior developer, beware of enterprise AI detection systems: financial black holes masquerading as saviors. They promise cost savings but become a fiscal trap for enterprises that naïvely deploy half-baked detectors on the assumption that they will save money. These APIs hide behind complex subscription tiers whose prices ramp up drastically with usage, leaving enterprises with an exorbitant bill in exchange for subpar performance. In plain numbers, costs climb steeply as request volume grows, and inherent inefficiencies compound the problem as API latency eats into availability.
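A quick estimate makes tiered pricing less opaque. This sketch takes the $0.02 per 1k tokens enterprise figure from the comparison table below as a base rate and adds two overage tiers whose boundaries and prices are invented. Substitute your vendor’s actual price sheet.

```python
# Hypothetical tier schedule: (monthly token ceiling, USD per 1k tokens).
# The 0.02 base rate comes from the comparison table; the higher tiers are invented.
TIERS = [
    (50_000_000, 0.02),
    (200_000_000, 0.03),
    (float("inf"), 0.05),
]


def monthly_cost(tokens: int, tiers=TIERS) -> float:
    """Marginal tiered pricing: each tier's rate applies only to tokens inside that tier."""
    cost = 0.0
    previous_ceiling = 0
    for ceiling, price_per_1k in tiers:
        if tokens <= previous_ceiling:
            break
        billable = min(tokens, ceiling) - previous_ceiling
        cost += billable / 1000 * price_per_1k
        previous_ceiling = ceiling
    return cost


for volume in (10_000_000, 100_000_000, 500_000_000):
    print(f"{volume:>12,} tokens -> ${monthly_cost(volume):,.2f}")
```

With these made-up tiers, 10M tokens cost $200 and 500M tokens cost $20,500: 50x the volume for just over 100x the bill, because the average price per 1k tokens roughly doubles.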

The alluring promise of freemium APIs masks a reality in which free tiers are throttled so strictly that they are unviable for anything beyond the thinnest proof of concept. API vendors grow richer on the wasteland of false starts and abandoned projects left by businesses lured by illusory cost efficiencies. Enterprises that buy into the marketing find themselves squeezed by unforeseen budget constraints and rising operational overhead.
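Free-tier throttles are usually some form of rate limit, and a token bucket is one common way to model one. The sketch below is a client-side token bucket with hypothetical limits (a burst capacity of 5 requests, refilling at 1 request per second). It is useful for estimating how much throughput a throttled tier can actually deliver. It is not any specific vendor’s algorithm, and the injectable `clock` exists only to make the behavior reproducible.

```python
import time


class TokenBucket:
    """Client-side token bucket: bursts up to `capacity`, refills at `rate` tokens per second."""

    def __init__(self, capacity: float, rate: float, clock=time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = capacity
        self.last = clock()

    def try_acquire(self, cost: float = 1.0) -> bool:
        """Spend `cost` tokens if available; False means the request would be throttled."""
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


fake_now = [0.0]
bucket = TokenBucket(capacity=5, rate=1.0, clock=lambda: fake_now[0])
print(sum(bucket.try_acquire() for _ in range(20)))  # 5: only the burst gets through at t=0
fake_now[0] = 3.0
print(bucket.try_acquire())  # True: three seconds refilled three tokens
```

At 1 request per second, a batch job of 10,000 documents needs close to three hours no matter how fast the model is, which is why such tiers rarely survive past a proof of concept.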

What often goes unsaid is that the choice of vendor and its quality of service are precisely what drive enterprises into fulfillment quagmires. Models are not uniform, and server resources vary absurdly from vendor to vendor. Despite the glamour around AI detection, inconsistent behavior across network calls makes enterprise continuity unpredictable. The larger economic game depends on customers’ ignorance of these API limitations, not on delivering cutting-edge performance. The cost-saving allure is shattered by unseen trapdoors, and enterprise API spend becomes a sinkhole that chews through whatever budget once seemed comfortable.
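Inconsistent network calls are usually absorbed on the client with retries. Below is a generic retry loop with exponential backoff and full jitter. `call` is a hypothetical zero-argument function wrapping your vendor’s client, and which exceptions count as transient (here `TimeoutError` and `ConnectionError`) is an assumption you should adjust to your client library.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def with_retries(call: Callable[[], T], max_attempts: int = 5, base_delay: float = 0.5,
                 max_delay: float = 8.0,
                 transient: tuple[type[BaseException], ...] = (TimeoutError, ConnectionError),
                 sleep: Callable[[float], None] = time.sleep) -> T:
    """Call `call`, retrying transient errors with exponential backoff and full jitter."""
    for attempt in range(max_attempts):
        try:
            return call()
        except transient:
            if attempt == max_attempts - 1:
                raise  # out of attempts: re-raise the last transient error
            cap = min(max_delay, base_delay * 2 ** attempt)  # ceiling doubles, capped at max_delay
            sleep(random.uniform(0, cap))  # full jitter spreads out simultaneous retries
    raise ValueError("max_attempts must be at least 1")
```

Retries hide flakiness from users but not from the bill: every retried request is usually still a billed request, so log the retry count alongside cost.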

“Most enterprise users underestimate unforeseen infrastructure complexities that inflate costs by significant margins.” – GitHub

4. Brutal Survival Guide for Senior Devs

Solved everything and still drowning in AI detector despair? As a senior developer, don’t get captivated by neon-flashing buzzwords and brilliant dioramas that have nothing to do with real implementations. First, strip away the facade of marketed utility. Run a ruthless cost-benefit analysis that accounts for technical debt, bandwidth caps, and time-to-detection failures, all under the financial pressure of API subscription costs. Prioritize simple computational efficiency over feature bloat and its so-called ‘improvements’. Drill into each model’s architecture to determine whether it actually fits your operations.
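A cost-benefit analysis can start as a per-document expected-cost estimate. The minimal model below uses only hypothetical inputs: the API price per document, the false-positive rate, and what one manual review of a wrongly flagged human document costs your organization.

```python
from dataclasses import dataclass


@dataclass
class DetectorCostModel:
    api_cost_per_doc: float      # USD per document scanned (hypothetical)
    false_positive_rate: float   # share of human-written documents wrongly flagged
    review_cost: float           # USD to manually review one false flag (hypothetical)

    def expected_cost_per_human_doc(self) -> float:
        """API spend plus the expected cost of reviewing a false flag."""
        return self.api_cost_per_doc + self.false_positive_rate * self.review_cost


model = DetectorCostModel(api_cost_per_doc=0.02, false_positive_rate=0.10, review_cost=15.0)
print(f"${model.expected_cost_per_human_doc():.2f} per human-written document")
```

With these invented numbers the expected cost is about $1.52 per document, and $1.50 of that is the review burden created by false positives, not the API fee.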

When adopting or dissecting AI detectors, extend this assertiveness to optimizing the code path. That means repeatedly and ruthlessly refactoring to strip away layers that aren’t integral to the model’s function, which reduces resource consumption. Solid engineering around the model can partly offset the shortcomings of its theoretical precision. Don’t chase complexity. Instead, deliberately split your test suites into independent fragments that can run in parallel, minimizing latency spikes and improving memory efficiency.
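Fragmenting an evaluation suite for parallel runs can be as simple as sharding the test corpus and fanning the shards out to worker threads. Threads suit I/O-bound detector API calls but not CPU-bound local inference. `detect` is a hypothetical stand-in for a real client call, and the shard count of 4 is an assumption to tune.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Sequence


def shard(items: Sequence[str], num_shards: int) -> list[list[str]]:
    """Round-robin split so shard sizes differ by at most one item."""
    return [list(items[i::num_shards]) for i in range(num_shards)]


def run_shard(docs: list[str], detect: Callable[[str], bool]) -> int:
    """Run the detector over one shard and count how many documents it flags."""
    return sum(detect(doc) for doc in docs)


def parallel_flag_count(docs: Sequence[str], detect: Callable[[str], bool],
                        num_shards: int = 4) -> int:
    """Evaluate shards concurrently on a thread pool and total the flags."""
    shards = shard(docs, num_shards)
    with ThreadPoolExecutor(max_workers=num_shards) as pool:
        return sum(pool.map(lambda s: run_shard(s, detect), shards))
```

Because each shard is independent, a failing shard can be rerun alone, and shard-level timings show which slice of the corpus triggers slow responses.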

Some limitations are hard constraints set by ML engineers before you were in the picture; accept this harsh reality. Run extensive pre-deployment stress tests that target API choke points under a range of simulated loads; this exposes weaknesses before they become costly surprises in production. Don’t wait until you’re dealing with overload symptoms: intervene preemptively and document any protocol deviations you discover during testing.
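A first stress test can be a script that submits a burst of concurrent requests and reports latency percentiles. This sketch uses asyncio with a hypothetical `fake_detector` (replace it with a real async client call) and a semaphore that caps how many calls are in flight. Latency is measured from submission, so time spent queued for a free slot counts; callers experience that delay at real choke points. Percentiles use the nearest-rank method.

```python
import asyncio
import math
import random
import time


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of samples <= it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


async def fake_detector(text: str) -> bool:
    """Hypothetical stand-in for a detector API call: waits 50-150 ms, returns a dummy verdict."""
    await asyncio.sleep(random.uniform(0.05, 0.15))
    return len(text) % 2 == 0


async def stress_test(num_requests: int, concurrency: int) -> dict[str, float]:
    """Submit all requests at once with at most `concurrency` in flight; report p50/p95."""
    limit = asyncio.Semaphore(concurrency)
    latencies: list[float] = []

    async def one_call(i: int) -> None:
        start = time.perf_counter()  # measured before queueing, so waiting for a slot counts
        async with limit:
            await fake_detector(f"document {i}")
        latencies.append(time.perf_counter() - start)

    await asyncio.gather(*(one_call(i) for i in range(num_requests)))
    return {"p50": percentile(latencies, 50), "p95": percentile(latencies, 95)}


if __name__ == "__main__":
    for load in (2, 10):
        stats = asyncio.run(stress_test(num_requests=20, concurrency=load))
        print(f"concurrency={load:>2}: p50={stats['p50'] * 1000:.0f} ms, "
              f"p95={stats['p95'] * 1000:.0f} ms")
```

Comparing p95 across concurrency levels shows where queueing starts to dominate; with a real endpoint, record error rates and throttling responses at each level too.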

In short, stay cynical and accept that, despite their glorified promises, AI detector systems rarely operate with the efficacy you demand. Take apart their complex interplay with a clear view of the restrictive realities you operate in; the vendors could certainly use a dose of that clarity. Your best chance to remain fiscally and technically viable lies not in the fantasy of curve-defining technology but in brutal pragmatism: examining where computational efficiency converges, or fails to converge, with reliability.

| Feature | Open Source | Enterprise API | Self-Hosted |
|---|---|---|---|
| Model Complexity | O(n^2) | O(n log n) | O(n^3) |
| Latency | 250 ms | 120 ms | 300 ms |
| Cost | Free | $0.02 per 1k tokens | High initial cost |
| Scalability | Limited by hardware | Scalable API | Limited by internal server infrastructure |
| Maintenance | Community-driven | Provider-managed | Self-managed |
| Data Privacy | Limited guarantees | Provider-level encryption | Internal control |
| Feature Updates | Infrequent | Regular | Sporadic |
| API Latency | N/A | 120 ms | N/A |
| Vector Database Failures | Common in low-budget setups | Rare and managed | Dependent on internal system reliability |
| CUDA Memory Limits | Common bottleneck | Advanced management strategies | Hardware-dependent |