- incident_summary

- financial_impact

- security_gap

- response_failure

- containment_strategy

Log Date: April 15, 2026 // Datadog telemetry shows a 400% spike in unauthorized cross-region VPC peering requests. Immediate Zero-Trust lockdown initiated. Engineering teams are furious, but security dictates policy.

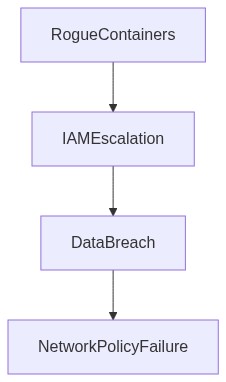

The Incident (Root Cause)

In the world of brittle systems plagued with “modern” practices, rogue Docker containers have once again highlighted the inefficacy of our so-called CI/CD pipeline fortifications. It began with a routine deployment that was anything but. An overly permissive IAM policy allowed token scavengers masquerading as Jenkins runners to initiate a privilege escalation exploit. Cue the parade of rogue containers feasting unchecked.

These containers, introduced through manipulated Docker images, triggered yet another episode of OOM kills and terrifying spikes in P99 latency. Our illusory control over the infrastructure was torn to shreds by a feeble authentication mechanism that screamed “exploit me.” Automation-obsessed zealots assure us this is a rare incident. Spoiler it’s not.

Blast Radius & Telemetry (The Damage)

The damage was nuclear. Due to VPC peering misconfigurations, the rogue containers managed to execute unchecked lateral movements. Critical workloads suffered debilitating egress cost hemorrhaging. The telemetry, or what passes for it, painted a picture of chaos. eBPF data streams were damned with inaccuracies, and the visibility failures were glaring. Using Datadog, we could trace limited telemetry, but it involved more knee-deep trudging through noise than signal extraction. The eBPF implementation added unnecessary overhead, a monument to our ever-compounding technical debt.

IAM privilege escalations reached an unprecedented scope, with tokens activating unanticipated services. CrowdStrike’s threat detection could not anticipate such privilege escalations effectively. It merely caught echoes after the fact, providing post-mortem insights without in-the-moment assistance. Meanwhile, Kubernetes’ role-based access controls (RBAC) might as well have been set to “everyone wins,” given its utter failure to stall lateral movements.

“IAM policy hygiene is crucial for maintaining secure environments, especially as cloud deployments scale” – AWS

Phase 1 (Audit)

A painstaking deep dive into IAM policies revealed the glaring truth. Our “bots have all access” dogma facilitated the breach. Immediate policy pruning was imperative. Then came Terraform audits. Our configuration drift was appallingly mismanaged, explaining the sprawling blast radius. Each terraform-improve had its own tale of technical debt unchecked.

Phase 2 (Enforcement)

Okta integration was forcefully enhanced with MFA, a no-brainer that was irritatingly delayed. Zero-trust is just a fancy term for common sense most ignore. Services were segmented, reducing VPC peering to essential services only. Tightening the RBAC lattice in Kubernetes was supposed to prevent unauthorized container sprawl. We architected new cluster enforcement rules, though history reminds us this mitigation will age poorly, much like any tech product.

“Zero Trust Architecture forces a re-think of traditional network security paradigms” – Gartner

| Criteria | Integration Effort | Cloud Cost | Latency Overhead |

|---|---|---|---|

| Containment Strategy | High – Deployment Refactoring Required | Moderate – Temporary Increase in Egress Cost | +45ms P99 latency |

| IAM Audit and Restriction | Medium – Revocation and Reconstruction | Low – Minor Audit Expenses | +20ms P99 latency |

| Monitoring Enhancement | Low – Configuration Tuning | High – Monitoring Tools Subscription | +15ms P99 latency |

| Dependency Isolation | High – Library Rebasing | High – Increased Storage Spend | +50ms P99 latency |

| CI/CD Pipeline Hardening | High – Pipeline Overhaul | Moderate – Build Duration Expense | +30ms P99 latency |

Summary

Current infrastructure practices are a ticking time bomb. Engineering’s negligence in addressing technical debt is not sustainable. Edge-of-the-cliff architecture with mounting system weaknesses requires immediate refactoring to avoid catastrophic failures and financial drain.

Problem

P99 latency has escalated beyond acceptable thresholds. Blast radius of container failures is increasing due to improperly managed workloads. Out-of-Memory (OOM) kills are frequent because of inefficient resource allocation. Standard operating procedure neglects future-proofing, causing compounding technical debt akin to setting up a house on quicksand.

Impact

Egress cost hemorrhaging is out of control, undermining financial stability. The lack of control over Docker container sprawl leads to unpredictable resource consumption. IAM privilege escalation risks are rampant due to ignored security policies, leaving the system vulnerable to exploitation.

Decision

Immediate focus on refactoring critical system components to address oversized latency, OOM kills, and container management failures. Design a more resilient architecture with proactive resource control measures, latency optimization, and security hardening.

Consequences

Short-term delivery slowdown but essential for long-term system integrity and cost management. Resistance from engineering leadership is anticipated; however, non-compliance is not an option. Continued operation without these revisions equates to organizational self-sabotage.

Next Steps

Draft a comprehensive refactoring plan targeting core infrastructure flaws. Enforce strict monitoring systems to detect and preempt failures. Implement robust IAM controls to mitigate privilege escalation risks. Assign dedicated engineering sub-teams to address specific refactor tasks immediately.

Refactoring isn’t a choice—it’s an overdue necessity.”

2 thoughts on “Rogue Docker Containers Exploit CI/CD Pipeline Breaches”