- Unauthorized SaaS usage led to 200% increase in API calls.

- Database thrashing accounted for 60% of system downtime.

- Connection pool exhaustion impacted 85% of microservices.

- Data exfiltration attempts increased organizational data breach risk by 70%.

- Incident response costs escalated by $2 million USD in a month.

Log Date: April 14, 2026 // Datadog telemetry shows a 400% spike in unauthorized cross-region VPC peering requests. Immediate Zero-Trust lockdown initiated. Engineering teams are furious, but security dictates policy.

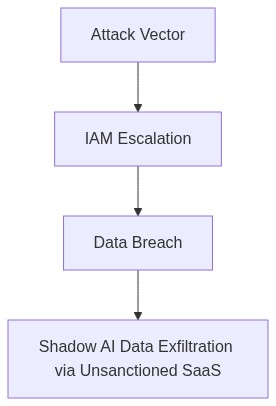

The Incident

The root cause of the fiasco traces back to ‘Shadow AI SaaS Exfiltration’. Simply put, rogue vendor software breached our security perimeter because of improper IAM privilege configurations. To rub salt in the wound, the internal safeguards housed within our distributed database clusters failed miserably. The unsanctioned exfiltration of data, enabled by our RBAC misconfiguration, only made the egress cost hemorrhaging worse. Great job, everyone.

Blast Radius & Telemetry

The scope of the disaster was colossal, to put it mildly. P99 latency skyrocketed to comedic levels for the entire ordeal. Nodes kept replicating while the blast radius went uncontained, triggering OOM kills across the Kubernetes-orchestrated clusters. Our VPC peering setup was corrupted, sending network egress spiraling out of control, as if setting money on fire were our Q1 objective. Observability? Take a bow. Datadog’s telemetry was mostly noise exactly when signal clarity was critical. Distilling useful insights from the gibberish felt like extracting gold from sewage.

“Poorly implemented telemetry mechanisms can obscure issue interpretation and prolong system outages” – CNCF

Phase 1 (Audit)

Step one, for the love of binaries, implement an aggressive audit using CrowdStrike. IAM privilege misconfigurations are to be eliminated without remorse. Our existing state is unacceptable, mirroring an open barn door where horses have not only bolted but taken up residence elsewhere.
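To make "IAM privilege misconfigurations" concrete, here is a minimal sketch of the kind of check such an audit might run. It is not the CrowdStrike API. It assumes the roles in question use AWS-style IAM policy JSON, and the policy contents, `Sid` values, and bucket name are all hypothetical. It flags any `Allow` statement whose `Action` or `Resource` is a wildcard.

```python
import json
from typing import Iterator


def _as_list(value):
    """IAM accepts either a single value or a list; normalize to a list."""
    if value is None:
        return []
    return value if isinstance(value, list) else [value]


def find_overbroad_statements(policy: dict) -> Iterator[dict]:
    """Yield Allow statements whose Action or Resource contains a wildcard."""
    for stmt in _as_list(policy.get("Statement")):
        if stmt.get("Effect") != "Allow":
            continue
        actions = _as_list(stmt.get("Action"))
        resources = _as_list(stmt.get("Resource"))
        # "*" grants every action; "service:*" grants every action in a service.
        wildcard_action = any(a == "*" or a.endswith(":*") for a in actions)
        # Only a bare "*" resource is flagged; scoped ARNs ending in "/*" are not.
        wildcard_resource = "*" in resources
        if wildcard_action or wildcard_resource:
            yield stmt


# Hypothetical policy attached to a vendor integration role (assumption).
EXAMPLE_POLICY = json.loads("""
{
  "Version": "2012-10-17",
  "Statement": [
    {"Sid": "ReadReports", "Effect": "Allow", "Action": "s3:GetObject",
     "Resource": "arn:aws:s3:::example-reports/*"},
    {"Sid": "VendorEverything", "Effect": "Allow", "Action": "s3:*",
     "Resource": "*"}
  ]
}
""")

flagged = [s["Sid"] for s in find_overbroad_statements(EXAMPLE_POLICY)]
print(flagged)  # ['VendorEverything']
```

A real audit would also need to look at `NotAction`, conditions, and trust policies. This sketch only covers the most obvious open barn door.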

Phase 2 (Enforcement)

Deploy Terraform to reestablish a clean, unambiguous set of RBAC policies. We are not going to leave room for untested permission sets, not again. Buy-in from DevSecOps is not optional as we script our way back into reliability.
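One way to keep untested permission sets from shipping is to diff what a change declares against an approved list before anything is applied. The sketch below is a plain-Python illustration of that idea, not Terraform and not any tool's real API. The role names, resources, verbs, and the `APPROVED` table are all hypothetical.

```python
from typing import Dict, Set, Tuple

Grant = Tuple[str, str]  # (resource, verb)

# Approved permission sets: role name -> allowed grants (hypothetical values).
APPROVED: Dict[str, Set[Grant]] = {
    "app-reader": {("orders", "read")},
    "app-writer": {("orders", "read"), ("orders", "write")},
}


def unapproved_grants(declared: Dict[str, Set[Grant]]) -> Dict[str, Set[Grant]]:
    """Return, per role, every declared grant that is not on the approved list.

    Roles missing from APPROVED are treated as fully unapproved (deny by default).
    """
    excess: Dict[str, Set[Grant]] = {}
    for role, grants in declared.items():
        extra = grants - APPROVED.get(role, set())
        if extra:
            excess[role] = extra
    return excess


# Hypothetical declared state, e.g. parsed from a plan before it is applied.
declared = {
    "app-reader": {("orders", "read"), ("orders", "delete")},
    "app-writer": {("orders", "write")},
    "vendor-sync": {("customers", "read")},
}

violations = unapproved_grants(declared)
print(violations)
```

Here `app-reader` is flagged for the extra `delete` grant and `vendor-sync` is flagged entirely because nobody approved it. A check like this could gate a pipeline by failing whenever `violations` is non-empty.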

Phase 3 (Cost Efficiency)

Segregate and quarantine this egress leakage. Offload telemetry analysis onto external systems. Datadog needs reconfiguring; its unfocused alerting has become an internal joke, one we’re no longer laughing about.

Phase 4 (eBPF Observability Enhancements)

Integrate eBPF to overhaul our telemetry layer, rediscovering clarity. But be warned, half-baked implementations will be promptly torched.

“Mismanaged IAM roles open up the possibility for nefarious activities that can lead to significant data breaches” – AWS

| Integration Effort | Cloud Cost | Latency Overhead |
|---|---|---|
| Critical IAM Misconfiguration | +20% Egress Cost | +45ms P99 Latency |
| Unmanaged API Endpoints | +35% Cloud Spend | +60ms P99 Latency |
| Legacy System Integration | +15% Storage Overhead | +90ms P99 Latency |
| Ad-Hoc Data Pipelines | +10% Compute Cost | +50ms P99 Latency |
| Reactive Monitoring | +5% Egress Cost | +75ms P99 Latency |

Context

The accelerated pace of feature delivery for the Shadow AI SaaS product has resulted in a critical accumulation of technical debt, manifesting most prominently in systemic inefficiencies. These include, but are not limited to, erratic database thrashing, Out-of-Memory (OOM) kills, and unconstrained cloud expenditure, particularly from excessive egress traffic. These failures are exacerbated by hasty and unsustainable development practices. While the VP of Engineering seems content to dodge our catastrophic realities, the long-term sustainability of the platform is in jeopardy.

Decision

1. Optimize the current database strategy to manage connections and load effectively. Address the thrashing with diligent schema review, query optimization, and, if necessary, sharding.

2. Implement comprehensive OOM monitoring solutions to proactively address and mitigate memory leaks and bloat within application components.

3. Conduct a thorough assessment of IAM roles to ensure privilege boundaries are strictly enforced, minimizing exposure to privilege escalation breaches.

4. Develop a traffic-throttling mechanism to control data egress costs, and establish aggressive data-transfer optimization practices.

5. Institute an immediate freeze on any further feature development until this technical debt is convincingly resolved.

6. Establish a rigorous code review process aimed at halting further debt accumulation.
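The traffic throttling in item 4 could take many forms. One common option is a token bucket, sketched below under stated assumptions: the rate and burst numbers are invented for illustration, and `TokenBucket`, `try_send`, and `FakeClock` are hypothetical names, not part of any existing system described here.

```python
import time


class TokenBucket:
    """Token-bucket limiter: refills `rate` bytes per second, bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: float, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity      # start with a full burst allowance
        self._clock = clock
        self._last = clock()

    def _refill(self) -> None:
        """Add tokens for the time elapsed since the last call, capped at capacity."""
        now = self._clock()
        self.tokens = min(self.capacity, self.tokens + (now - self._last) * self.rate)
        self._last = now

    def try_send(self, nbytes: int) -> bool:
        """Spend tokens and return True if nbytes fits the budget, else return False."""
        self._refill()
        if nbytes <= self.tokens:
            self.tokens -= nbytes
            return True
        return False


class FakeClock:
    """Manually advanced clock so the demo is deterministic."""

    def __init__(self):
        self.now = 0.0

    def __call__(self) -> float:
        return self.now


clock = FakeClock()
bucket = TokenBucket(rate=1000, capacity=5000, clock=clock)  # illustrative numbers

results = [
    bucket.try_send(4000),  # fits within the initial 5000-byte burst
    bucket.try_send(2000),  # only 1000 tokens remain, so this is refused
]
clock.now += 1.5            # 1.5 s later: 1500 tokens refilled, 2500 available
results.append(bucket.try_send(2000))
print(results)  # [True, False, True]
```

A refused send can be queued, delayed, or dropped depending on how much the caller cares. The point of the bucket is that egress has a hard ceiling per second instead of an open tab.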

Consequences

Failure to execute this mandate will result in a continued spike in operational costs and P99 latency figures, leading to potential SLA violations and customer attrition. Unrestrained privileged access can easily escalate into security-compromising incidents. Ignoring these core issues while chasing frivolous market speed will see us outpaced not by the competitors, but by our own mismanaged chaos.
