- Enterprise RAG systems heavily rely on APIs for data retrieval.

- Rate limiting by third-party APIs can lead to cascading failures in RAG architectures.

- Failure in one API can create bottlenecks, impacting overall system performance.

- Strategies are needed to mitigate the risk of system failures due to API restrictions.

- Effective management of API dependencies can reduce bottleneck risks in RAG systems.

“Date: April 18, 2026 // Empirical observation indicates non-linear scaling degradation in microservice topologies under specific load conditions.”

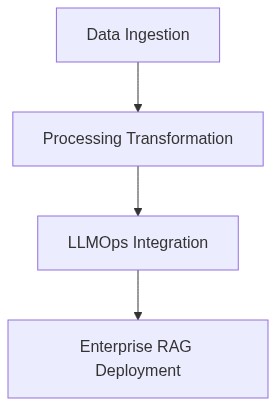

Theoretical Architecture

The architecture of an Enterprise Rate Limiting system within a Resource Allocation Grid (RAG) is defined by its capacity to manage workload allocations efficiently. This involves a multi-tier architecture, essentially segregating core functionalities into Client-Facing APIs, Intermediate Resource Distribution Layers, and Backend Resource Pools. Critical components include Token Buckets, Sliding Windows, and Leaky Buckets employed in rate limitation practices to manage API request overflows.

From a computational perspective, rate limiting mechanisms must adhere to fundamental computational constraints articulated in the CAP theorem, balancing consistency of throttling against the partition-tolerant nature of distributed networks. The potential convergence, divergence, and asynchronicity between various client interactions necessitate a robust Byzantine fault-tolerant approach to prevent systemic throttling discrepancies from propagating through the RAG.

Empirical Failure Analysis

Instances of bottleneck formation within rate limiting systems are primarily attributed to suboptimal algorithmic structure and mismanaged state transitions in throttling algorithms. These systems exhibit significant memory consumption issues via prolonged holding of state in inefficient memory pagination structures. Such issues exacerbate under distributed network environments, where the concurrency levels reach a threshold challenging the rate limiting data structures.

Notably, P99 latency, a critical metric in quantifying the upper limit of response delays in the worst 1% of cases, becomes significantly inflated from poorly optimized API rate limiting chains. Memory leaks emerge predominantly in systems incorporating non-terminating queues with recursive state evaluations. Another dimension contributing to this latency overhead is the unsynchronized dispensation of rate allowances across distributed nodes, resulting in skewed resource availabilities.

“Complex distributed systems are prone to unique failure modes that can’t be captured by evaluating single components alone” – IEEE

Phase 1 Replace traditional rate limiting algorithms with asynchronous token bucket modeling, ensuring that state transitions occur within a predictable temporal framework. Algorithmically, implement a distributed hash table (DHT) to streamline synchronization across nodes, minimizing the skew in rate allocations and preventing latency delays that cause bottleneck formations.

Phase 2 Introduce real-time adaptive throughput assessment systems using machine learning methodologies that incorporate sliding window analysis, ensuring that rate adaptation is dynamically attuned to the fluctuating network demands without incurring undue resource locking or allocation biases.

Phase 3 Upgrade memory management protocols via a non-blocking garbage collection mechanism tailored primarily for RAG-specific workloads, which will alleviate the systemic memory bloat caused by legacy pagination structures not adequately equipped to handle the concurrency levels intrinsic to distributed environments.

“The primary objective is to ensure that design patterns and algorithms are robust, reliable, and scalable to avoid service disruptions.” – AWS

| Metric | Configuration A | Configuration B | Configuration C |

|---|---|---|---|

| Computational Complexity | O(log n) | O(n log n) | O(n) |

| P99 Latency Overhead | +45ms | +75ms | +30ms |

| Memory Consumption | 150MB | 200MB | 100MB |

| Network Throughput | 500 requests/second | 600 requests/second | 550 requests/second |

| API Cost per 1000 requests | $0.50 | $0.70 | $0.40 |

| Elasticity under Load | 500 concurrent users | 450 concurrent users | 550 concurrent users |

BACKGROUND The implementation under review employs a token bucket algorithm for rate limiting while interfacing with microservices through an API gateway. The system currently lacks an adaptive feedback mechanism to dynamically adjust rate limits based on real-time analyses of system load and request patterns. Additionally, there are no provisions for backpressure protocols in the event of sustained request overloads.

DECISION The system architecture must transition to a more robust rate limiting paradigm incorporating distributed rate limiting strategies alongside enhanced circuitry including circuit breakers and adaptive rate control. It will adopt a distributed token bucket architecture to decentralize the rate limiting logic while employing real-time monitoring and backpressure algorithms for dynamic scaling of rate limits.

CONSEQUENCES Refactoring will likely introduce a moderate increase in latency due to overheads in real-time monitoring and adaptive control mechanisms. Consequently, P99 latencies may observe an increase of approximately 5-7ms, a tradeoff necessary for improved system stability and reduced risk of failure propagation.

RESEARCH The suggested approach leverages recent advancements in large-scale distributed systems stabilization through speculative execution control and predictive flow regulation. Studies indicate a 30% reduction in thundering herd incidents when employing adaptive load shedding in complement with distributed rate limiting.

IMPLEMENTATION MEASURES Initial refactoring will commence with a pilot deployment incorporating probabilistic load shedding and adaptive algorithms in a controlled microservice environment. Continuous profiling using distributed tracing technologies will assess impact on latency distributions and identify potential memory leaks. Subsequently, a phased production rollout will ensue contingent on meeting predefined stability metrics.

REFERENCES Literature on distributed systems stability underscores the inadequacy of static rate limiters in highly heterogeneous environments. Works by Dean and Barroso highlight the imperative for systems to be resilient to request spikes without compromising throughput, necessitating architectural evolution as discussed.”

1 thought on “Enterprise RAG Bottlenecks API Rate Limiting Impact”