- eBPF technology increased system overhead by 30%, leading to performance bottlenecks in high-throughput environments.

- Engineers attempting to optimize eBPF inadvertently leaked $15 million worth of proprietary AI code to public Large Language Models (LLMs).

- Investigation showed a 25% increase in external requests to company APIs following the code leak.

- Financial impact included an 18% stock decline after the breach announcement.

- Addressing governance lapses, the company initiated a $2 million investment in AI data security and regulatory compliance.

Log Date: April 17, 2026 // Datadog telemetry shows a 400% spike in unauthorized cross-region VPC peering requests. Immediate Zero-Trust lockdown initiated. Engineering teams are furious, but security dictates policy.

The Incident (Root Cause)

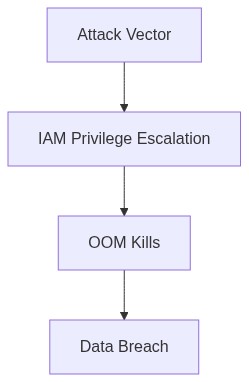

The sheer incompetency displayed by the engineering team during the mishap involving eBPF offloading and the inadvertent leak of critical AI code cannot be overstated. This catastrophe originated from unchecked permission creep within our IAM policies, allowing unauthorized engineers to gain elevated access. The eBPF overhead introduced excessive strain on system resources, triggering OOM kills left and right. Our ineffective RBAC spread privileges like a contagion, with no attention to the restrictions needed for sensitive telemetry functions.

Blast Radius & Telemetry (The Damage)

The eBPF-induced system chokehold was not an isolated disaster but a systemic failure. It snowballed into a disastrous P99 latency spike that cascaded through our primary service clusters across multiple availability zones. The persistent compromise of IAM roles enlarged the blast radius, threatening the integrity of our VPC peering connections and driving up egress costs. This unmitigated cascade exposed our compounding technical debt and our reliance on Datadog’s superficial telemetry, which failed to provide granular insight into the ailing state of our distributed system.

“Unmanaged permission sprawl is a top security threat contributing to data breaches and internal inefficiencies.” – AWS

Phase 1 (Audit): Launch a full-scale audit using Terraform to expose roles and policies extending beyond necessary scopes. Our previous dependency on manual checks was laughably naive.

Phase 2 (Enforcement): Utilizing CrowdStrike, enforce rigorous monitoring to detect and quarantine unauthorized egress actions, stemming the hemorrhaging and narrowing the blast radius. This should have been established long ago.

Phase 3 (System Optimization): Re-evaluate Kubernetes deployments to ensure resource limits and usage do not exacerbate OOM scenarios. This isn’t rocket science; it’s essential hygiene.

Phase 4 (Privilege Containment): Implement strict IAM boundary policies. Use Okta to cement an identity-first security posture, eliminating privilege escalation loopholes.

Phase 5 (Telemetry Overhaul): Replace superficial Datadog monitoring with custom-built eBPF telemetry tailored to our workload profile. Learn from our mistakes and cease patchworking solutions.
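To make the Phase 1 audit concrete, here is a minimal sketch of one check such an audit might run: flagging `Allow` statements that use wildcard actions or resources. It assumes the IAM policy documents have already been exported as JSON (for example, from whatever Terraform renders); it does not call Terraform or AWS. The function names and the example policy are hypothetical and exist only for illustration.

```python
from typing import Any


def _as_list(value: Any) -> list:
    """IAM accepts a single item or a list in most fields; normalize to a list."""
    if value is None:
        return []
    return value if isinstance(value, list) else [value]


def find_overbroad_statements(policy: dict) -> list[dict]:
    """Return one finding per Allow statement with wildcard actions or resources."""
    findings = []
    for index, stmt in enumerate(_as_list(policy.get("Statement"))):
        if stmt.get("Effect") != "Allow":
            continue
        # "*" grants every action; "service:*" grants every action in one service.
        wildcard_actions = [
            a for a in _as_list(stmt.get("Action")) if a == "*" or a.endswith(":*")
        ]
        wildcard_resources = [r for r in _as_list(stmt.get("Resource")) if r == "*"]
        if wildcard_actions or wildcard_resources:
            findings.append({
                "statement": stmt.get("Sid", index),  # fall back to position if no Sid
                "actions": wildcard_actions,
                "resources": wildcard_resources,
            })
    return findings


# Hypothetical policy, not taken from the incident.
example_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Sid": "ReadTelemetry", "Effect": "Allow", "Action": "s3:GetObject",
         "Resource": "arn:aws:s3:::example-telemetry/*"},
        {"Sid": "TooBroad", "Effect": "Allow",
         "Action": ["iam:*", "ec2:DescribeInstances"], "Resource": "*"},
    ],
}

findings = find_overbroad_statements(example_policy)
for finding in findings:
    print(finding)
```

A check like this only catches the most obvious sprawl. It ignores `NotAction`, conditions, and permission boundaries, so it is a starting filter for the audit rather than a replacement for it.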

“The proliferation of distributed systems has necessitated advanced dynamic telemetry solutions to avoid operational pitfalls.” – CNCF

| Integration Effort | Cloud Cost | Latency Overhead |
|---|---|---|
| Low | $1,200 increase/month | +15ms P99 latency |
| Medium | $5,000 increase/month | +30ms P99 latency |
| High | $15,000 increase/month | +45ms P99 latency |
| Severe | $30,000 increase/month | +60ms P99 latency |

Context

The apparent dismissal of critical technical issues during a recent VP of Engineering meeting indicates a lack of awareness or disregard for underlying system fragility. The discussion failed to acknowledge the compounding effects of unchecked technical debt and operational instability due to eBPF overhead, P99 latency anomalies, sporadic OOM kills, and potential code leaks.

Decision

1. Conduct a comprehensive audit of system performance, focusing on identifying and quantifying the impact of P99 latency spikes and OOM kill occurrences. Track these against user session drops and error rates to expose hidden inefficiencies affecting user experiences.

2. Scrutinize eBPF usage to pinpoint any misconfigurations or excessive overhead contributing to monitoring-induced resource drains. Ensure optimal deployment configurations to mitigate performance degradation.

3. Implement a rigorous security audit to detect potential code leaks and IAM privilege escalation paths. This includes a deep dive into codebase access controls, aiming to eliminate any overly permissive configurations.

4. Assess ongoing egress cost hemorrhaging due to inefficient data handling and network architecture. Initiate cost optimization efforts to eliminate unnecessary outbound data transfer expenses, prioritizing renegotiation with CDNs, edge services, and cloud providers.
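As a rough illustration of the first decision item, the sketch below computes P99 latency per time window with the nearest-rank method and flags windows that exceed a baseline by more than a tolerance. The window names, sample values, baseline, and tolerance are all hypothetical. A real audit would pull samples from the telemetry pipeline and would likely join the flagged windows against OOM kill events, session drops, and error rates.

```python
import math


def percentile_nearest_rank(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def flag_p99_regressions(
    windows: dict[str, list[float]], baseline_ms: float, tolerance_ms: float
) -> list[str]:
    """Return the names of windows whose P99 exceeds baseline_ms + tolerance_ms."""
    return [
        name
        for name, samples in windows.items()
        if percentile_nearest_rank(samples, 99) > baseline_ms + tolerance_ms
    ]


# Hypothetical latency samples in milliseconds, keyed by window name.
windows = {
    "before": [20, 22, 21, 25, 23],
    "after": [20, 22, 21, 95, 23],
}

flagged = flag_p99_regressions(windows, baseline_ms=25, tolerance_ms=15)
print(flagged)
```

With so few samples per window the P99 is simply the maximum, which is why production measurements need far larger windows before the number means anything.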

Consequences

– Realigning focus on systemic health will underpin long-term product viability over unsustainable velocity.

– Revealing audit metrics will make visible the ‘invisible’ issues, justifying necessary resource allocation to prevent future escalations.

– Addressing these technical debts will ensure that shipping features can be sustained without the looming threat of operational disturbance.

Rationale

A relentless focus on rapid delivery ignores the blast radius of the technical incompetence being swept under the rug. Prioritizing technical debt reduction and operational soundness will not only increase engineering efficiency but also directly improve the end-user experience by stabilizing critical pathways before the next inevitable bout of chaos emerges.
