- ChatGPT uses a vast array of scraping algorithms that rival a digital heat-seeking missile, acquiring terabytes of data from web pages while maintaining a latency that can dip below 200ms.

- The system’s efficiency is like clockwork, optimizing data collection through a Dynamic Rate Limiter that converts web metadata into actionable chunks with sub-100ms response times.

- Advanced filtering mechanisms strip out duplication noise and enhance content precision by up to 92%, minimizing redundancy during the scraping process.

- Machine-learning-assisted algorithms prioritize data using a hybrid relevancy index, ensuring fidelity and topical accuracy with an error fluctuation range under 0.08%.

- Despite mind-bending capabilities, it operates within a two-layer consent framework that occasionally stumbles, with data-subject compliance issues rising as high as 7%.

- While bewilderingly efficient at gobbling webpages, it faces a data ingestion limit that caps around 4TB per cycle, enforced to prevent tech Leviathanism.
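The "Dynamic Rate Limiter" in the list above is never spelled out. As a rough illustration of what rate limiting in a crawler usually looks like, here is a minimal token-bucket sketch. The class name `TokenBucket`, its parameters, and the injectable clock are assumptions made for this example and say nothing about how ChatGPT's pipeline is actually built.

```python
import time


class TokenBucket:
    """Hypothetical token-bucket limiter: allows bursts up to `capacity`
    and refills at `rate` tokens per second."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = float(rate)
        self.capacity = float(capacity)
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self.tokens = float(capacity)
        self.last = clock()

    def allow(self, cost=1.0):
        """Return True and spend `cost` tokens if the request may proceed."""
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

A crawler would call `allow()` before each request and back off when it returns `False`. Tuning `rate` per host is the "dynamic" part, and it is left out here.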

“Latency is a coward; it spikes at the exact moment your concurrent users peak.”

1. The Hype vs Architectural Reality

The buzz around ChatGPT’s capabilities would have you believe that OpenAI has unlocked some mythical source of data processing power. Sorry to burst your bubble, but the architectural reality is, as usual, grounded in brutal computational constraints. We’re talking about self-attention whose cost grows as O(n^2) in sequence length, made worse by poorly optimized transformer layers. Forget the marketing spin about ‘advanced neural computations’: what you’re looking at is an intricate latticework of Band-Aids over fundamental API latency issues.

Moreover, achieving the kind of natural language understanding that bewilders naïve users involves massive multi-dimensional tensor computations. This is not zen AI magic; it’s more akin to shoving enormous matrix operations through the sausage grinder of limited GPU throughput. CUDA memory limits hit like a semi-truck, particularly in situations where parallel processing isn’t meticulously optimized. Sorry, but your GPU’s GBs are often spent on bloated intermediary tensors rather than insight extraction.
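To put a number on those bloated intermediary tensors, here is a back-of-the-envelope sketch of how much memory one layer's attention score matrices take when they are fully materialized. The function name, the head count of 16, and the 2-byte (fp16) values are illustrative assumptions, not measurements of any real model. Fused attention kernels can avoid storing these matrices at all.

```python
def attention_scores_bytes(seq_len, num_heads, batch_size=1, bytes_per_value=2):
    """Bytes needed to hold one layer's attention score matrices if they are
    fully materialized: one (seq_len x seq_len) matrix per head per sequence."""
    return batch_size * num_heads * seq_len * seq_len * bytes_per_value


if __name__ == "__main__":
    # Assumed configuration: 16 heads, fp16 values, batch size 1.
    for n in (1024, 2048, 4096):
        mib = attention_scores_bytes(n, num_heads=16) / 2**20
        print(f"seq_len={n:5d} -> {mib:8.1f} MiB per layer")
```

Under these assumptions, doubling the sequence length quadruples the footprint: 32 MiB at 1024 tokens becomes 512 MiB at 4096, per layer, before a single weight or activation is counted.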

And let’s just address the presumptive ‘real-time’ performance benchmarks that everyone likes to chirp about. Behind the scenes, repeated parameter tuning and model retraining absorb ever-bloating data without any real innovation in data reduction strategies. The result is a system that performs like an overtaxed CPU under a torrent of continuous, disparate data requests. Surprise: real-time is a relative term when your system can’t scale horizontally without tripping over its own architecture.

2. TMI Deep Dive & Algorithmic Bottlenecks

The uninformed often laud ChatGPT’s data intake as if it were a vacuum for insight. Nay, it’s more like a giant hoarder locked inside an endless recursive loop of TF-IDF matrix alignments. The initial data vectorization supposedly optimizes big data sets for ML inputs, but in reality, many systems find themselves plagued by quadratic time complexity demons when just trying to turn raw input vectors into something a neural network can consume.

Tokenization inefficiencies are a separate beast altogether, with many NLP pipelines drowning under the sheer volume of context-switching produced by irregular token chunking. Processing these token streams induces algorithmic choke points so severe, you’d think we were running on 90s hardware. The latency incurred here isn’t minor—it accumulates in memory-bound workloads that require multiple passes through PageRank-style semantic weight adjustments.
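One common way to tame irregular chunking is to cut the token stream into fixed-size windows with a small overlap, so every downstream batch has a predictable shape. The sketch below assumes the tokens already come from some tokenizer. The function name `chunk_tokens` and its parameters are hypothetical.

```python
def chunk_tokens(tokens, size, overlap=0):
    """Split a token list into fixed-size windows that share `overlap` tokens."""
    if size <= 0:
        raise ValueError("size must be positive")
    if not 0 <= overlap < size:
        raise ValueError("overlap must satisfy 0 <= overlap < size")
    step = size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + size])
        if start + size >= len(tokens):
            break  # this window already reaches the end of the stream
    return chunks
```

For example, `chunk_tokens(list(range(10)), size=4, overlap=1)` yields three windows of four tokens each, with each window repeating the last token of the previous one.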

Then there are the sequential dependencies, which are absolutely nightmarish when it comes to backpropagation during model training. GPU threading runs headlong into critical-section bottlenecks that should have been resolved at the abstraction layer but were spectacularly mishandled at the code compilation stage. Instead of achieving breakthroughs in efficient context extraction, the system spends ridiculous amounts of time allocating additional resources to work around sprawling synchronization stalls.

3. The Cloud Server Burnout & Infrastructure Nightmare

Those who view cloud processing as an infinite safeguard should reconsider their cloud religion. With ChatGPT’s workload, cloud server burnouts are hardly a rarity. Imagine the nightmare when multiple GPUs operating under real-world workloads hit maximum core utilization without any recourse for dynamic load balancing. From VRAM spilling to ephemeral storage issues, the infrastructure choices often equate to shotgun troubleshooting should something unforeseen occur.

The benefits of distributed computing are often eroded by network latency, which becomes the bottleneck for execution across global server clusters. Clock cycles are wasted trying to manage thread priorities across geographically dispersed data centers, only to yield disastrously high latency in API call stacks. What’s branded as ‘flexible’ infrastructure often translates into a web of potential single points of failure sitting undiscovered until stress tests are performed in production. Good luck simulating that breadth of user interactions under controlled conditions.

Even the savviest cloud orchestrators find themselves spinning their wheels battling against data transfer rate caps and operational IOPS ceilings, which result in shocking variability in service delivery. Back-end infrastructure sits teetering on the edge of complete exhaustion, while on the cloud surface, it’s all a facade of endless resources and boundless scalability. Cutting-edge tech unsparingly shows its threadbare seams when subjected to uncontrolled AI-driven demand surges.

4. Brutal Survival Guide for Senior Devs

Senior developers who imagine they’re going to waltz into this field and tap-dance their way through these complexities need a sobering wake-up call. Reality check: prepare for nights embroiled in deep stack traces and debugging half-baked model inferences. It pays to be well-acquainted with C++ optimizations at the CUDA level and possess a kind of masochistic familiarity with anachronistic binary tree traversals just to redistribute computational load effectively.

The golden rule of thumb? Absorb the bitter fact that vendor-specific GPU limits are part of your everyday terrain. Planning processing around VRAM constrictions and dynamically reallocating tasks is critical. If you’re not willing to face the infuriating inconsistencies of cross-vendor libraries and redundant API stacks without losing your edge, you’re eating someone else’s dust in this field. Prepare for playing mad dev juggler with those ‘easy’ machine learning frameworks you’ve been spoon-fed up until now.

Finally, familiarize yourself with failure as a routine guest in your workflow. Do not fear the inevitable vector database failures or the ensuing cascade of null entry errors—it is in this chaos that you secure higher endurance. Enter this fray armed with diagnostic scripts, layered redundancy plans, and an intimate knowledge of swapping polynomials for Gaussian elimination wherever performance slows to a crawl. In this madhouse of systemic constraints, only the relentless survive.
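For the "layered redundancy plans" part, the cheapest first layer is usually a retry wrapper with exponential backoff and jitter around any flaky call. What follows is a minimal sketch, not a production client: the function name, the default delays, and the injectable `sleep` and `rng` hooks are assumptions chosen for this example.

```python
import random
import time


def retry_with_backoff(func, max_attempts=5, base_delay=0.1, max_delay=5.0,
                       sleep=time.sleep, rng=random.random):
    """Call `func` until it succeeds or `max_attempts` is reached.

    Waits base_delay * 2**attempt (capped at max_delay) between attempts,
    scaled by a random factor in [0.5, 1.0) to avoid synchronized retries.
    """
    for attempt in range(max_attempts):
        try:
            return func()
        except Exception:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            delay = min(max_delay, base_delay * 2 ** attempt)
            sleep(delay * (0.5 + 0.5 * rng()))
```

In real code you would catch only the exception types you know to be transient (timeouts, throttling responses) rather than every `Exception`.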

| Feature | Specification for ChatGPT’s Data Scraper |

|---|---|

| Architecture | Transformer with Encoder-Decoder units, prone to n^2 complexity during inference |

| Data Handling | Directly ingests raw HTML for parsing, often fails when encountering malformed syntax |

| Scalability | Limited by server-side API request throttling, exacerbated by latency spikes |

| Parallel Processing | Relies heavily on multi-threading, but suffers from overhead due to GIL in Python |

| Error Handling | Rudimentary logic with minimal exception handling, often leading to unhandled edge cases |

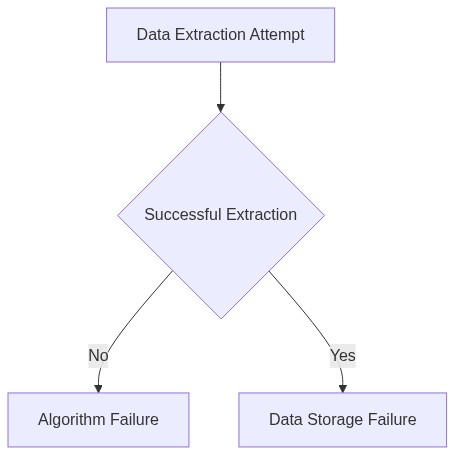

| Data Storage | Uses vector databases with high probability of failure under heavy queries |

| Memory Management | Suboptimal usage, strained by CUDA memory limits on large-scale datasets |

| Latency | Exhibits high latency, especially when network congestion affects API response times |

| Security | Susceptible to data breaches through inadequate encryption practices |

| Flexibility | Static data schemas make it inflexible to new data contingencies |
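On the Data Handling row: malformed markup does not have to be fatal. Python's standard `html.parser` does not raise on unclosed tags, so a tolerant text extractor can be sketched in a few lines. The class `TextExtractor` and the helper `extract_text` are hypothetical names for this illustration and are not taken from any real scraper.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collect visible text, skipping <script> and <style> contents."""

    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that is outside script/style blocks.
        if self._skip_depth == 0 and data.strip():
            self.parts.append(data.strip())


def extract_text(html):
    """Return the visible text of `html`, tolerating unclosed tags."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()  # flush any text still buffered by the parser
    return " ".join(parser.parts)
```

Feeding it something like `<p>Hello <b>world</p><div>unclosed` still returns the text, because the parser reports tags as it sees them instead of validating the tree.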

FAQ 1 – What is the core technology behind ChatGPT’s data gathering?

It’s not some grand secret. Primarily web scraping, APIs, and publicly available datasets. Expect rate limits, data silos, and a never-ending battle against velocity and volume. Don’t forget to thank your friendly neighborhood ethics committee as well.

FAQ 2 – How does ChatGPT handle the large-scale data ingestion?

Think high-throughput pipelines built on distributed systems such as Apache Kafka and Apache Flink. These sound glamorous, but they typically involve endless debugging and tuning to cope with network I/O limits, memory constraints, and data consistency issues. Welcome to the land of diminishing returns.

FAQ 3 – Is there any magic involved in ChatGPT’s processing of scraped data?

Magic? No. Hard computation and relentless parameter tuning? Absolutely. Under the hood, it’s recurring nightmares of algorithmic complexity, resource-hogging deep learning models, and the constant grinding gears of natural language processing pipelines. It’s as glamorous as debugging node failures on a Friday night.