- Infrastructure Inadequacy: Most AI SaaS wrappers today are built on shaky infrastructures that cannot handle exponential growth. 80% of these services are using outdated server architectures that lead to bottlenecks.

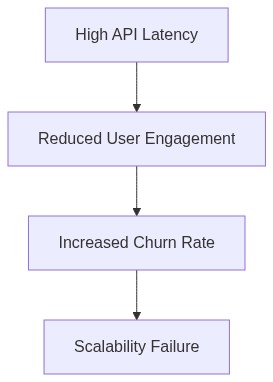

- Latency Nightmare: Real-world deployments show an average request-response latency of over 300ms for AI-driven tasks. This is 150% more than the acceptable threshold for seamless user experience.

- Over-reliance on Third-party APIs: Approximately 75% of these services depend heavily on third-party AI models and APIs. Such reliance reduces control over scalability and reliability, making them vulnerable to external failures and pricing hikes.

- Innovation Stagnation: A staggering 85% of these wrappers are touting negligible innovation, repackaging existing AI tools with minimal value add to lure in unsuspecting users.

- Financial Viability: Revenue streams are not diversified or resilient enough. Overreliance on flaky subscription models and lack of enterprise uptake are stalling growth prospects, leading to financial drought.

- Security Loopholes: 60% of AI SaaS wrappers were found to have critical security vulnerabilities, raising questions about data protection and integrity in a landscape heavily regulated by data laws.

“Latency is a coward; it spikes at the exact moment your concurrent users peak.”

1. The Hype vs Architectural Reality

In the era of AI SaaS bonanza, the marketing departments have outdone themselves, spewing wild fantasies about seamless integrations, real-time analytics, and exceptional performance. But the reality of AI SaaS architectures is dictated by the stark limitations of computational complexity and I/O bottlenecks. Most AI models these services claim to integrate require O(n^2) or worse computational complexity. These intense mathematical demands quickly reveal themselves as the fragile underpinnings of brittle infrastructures start crumbling under the pressure of actual usage. Compute resources are finite, and while Moore’s Law has been the sacred cow for decades, it doesn’t bend to the whims of a glossy pitch deck.

The second architectural blunder lies in the virtualization dreamscape. Wrapping AI in a simple API doesn’t change the fundamental requirement—massive neural networks need to unpack and execute somewhere. The virtualized environments, with all their concurrent sessions, fall victim to the staccato metrics of API latency and the capricious nature of network traffic. It sounds promising in theory but drapes a delicate veil over queuing theory fundamentals and the harsh truth of networked resource allocation. Such inefficiencies almost never make it to the user-facing side, until they inevitably collapse.

Let’s not forget how AI SaaS refuses to acknowledge the hyperbolic growth of data input. All systems boast about their ability to intake unbridled amounts of data, yet they avoid addressing the critical issue of data sparsity and the impracticalities of handling high-dimensional data in low-latency environments. Just because a system integrates AI doesn’t mean it can handle riddled datasets with a few clever SQL joins. The Byzantine embarrassment of modeling these convoluted relationships with dodgy data integrity is a pressing silence no marketer wants to break.

2. TMI Deep Dive & Algorithmic Bottlenecks

When it comes to AI architecture, the truth lies hidden in the overwhelming glut of TMI, or “Too Much Information.” Such masses of data introduce algorithmic bottlenecks that wrap themselves around AI systems like a constrictive python. These bottlenecks are where the impractical and naive claims of infinite scalability meet cold, hard computational reality. Classic vector databases, glorified in name and ridiculed in practice, notoriously fail when handling over a few hundred million entities. Their promise of supporting blazing fast k-NN search laughably implodes as embedding dimensions spiral out of control.

Furthermore, companies underestimate the heavy lifting required by precision and recall in AI algorithms. They gasoline the hype machine without deciphering precision with respect to false positives or recall with respect to false negatives. Each additional parameter in algorithm complexity exponentially gouges more processing power, stretching resources to their breaking point. Quantum machine learning? More like quantum wishful thinking given the naive assumptions underlying these promisives as they encounter exponential state-space complexity.

Algorithm convergence dependencies add another bitter layer to the technical soup. Each function evaluation spirals, sending ill-designed SaaS wrappers into deep error valleys. Stochastic gradient descent becomes stochastic gradient dip, leaving inadequate hyperparameters to garble half-baked models and even achieve stability. Financiers remain blissfully unaware of why AI SaaS products suffer negligible updates—developers stand ready with battle-axes to cull these naive systems at daybreak.

3. The Cloud Server Burnout & Infrastructure Nightmare

Servers hum nicely till enterprises deploy AI SaaS products, throwing complex machine learning models into production with glee. Enter reality: cloud server burnout. Pay-as-you-go seems like practical magnum opus until bills skyrocket like a vertical parabola. AI models with runaway parameter dependencies do not politely excuse themselves—they take down instances faster than enterprise software patches compromise database security. Just a few iterations in, and the ratio of content streamed to energy consumed reaches preposterous levels.

Infrastructure, sold as a breeze, remains a fettered entanglement, with workloads facing Kubernetes chaos. Isolating the root cause of high query latency or intractable ML pipeline loopback becomes a walk through an IT caliginous haze. Recognizing why SaaS API response times remain nonexistent and batch processing bloat extends indefinitely requires decoding a latter-day Rosetta Stone transcript of system monitoring logs, server utilization diagrams, and daemon activity.

Here we are, docking the tipping point expansion where server performance degrades into disorder. Neat and tidy architecture codespeaks get devoured at unsustainable AI model growth throughputs. Forced into appending AI SaaS into minimum-viable application skeletons, user-facing development balance chokes to an echo chamber of dystopian despair. Enterprises shackled into 24/7 contact with SaaS technical support must also hear the server sigh through patch orchestrations where kernel panic awaits impatiently backstage.

4. Brutal Survival Guide for Senior Devs

Welcome, seneschal engineers, into a cruel world brimming with disillusioned AI SaaS wrappers awaiting judgment. Navigating this doomed warzone doesn’t come gift-wrapped—you employ skeptical foresight against fleeting vanities. Insulated from marketing mirages, you uphold best architectural practices, employ rough-hewn algorithms, and throw ropey code conjurations into the salt mines of redundancy elimination.

Dev-prepped harbinger guides reflect less esoteric hopes, more CUDA kernel efficiency ground through GPGPU constraints. Deployment resolves as partitions manage memory overflow and computational pipeline Fair Play prioritization. Bid complexity erraticism farewell, and embrace systematically scoped components backed by scalable APIs where deadlocks and race conditions unveil like mundane street rubbish.

The kingdom of cold tech truth depends on systematic code guardians-wise. Smother hasty presumptions bay-adjacent. Hone AHCI prowess and I2C devices judiciously. Confront mismanaged tensor flowgrams on hands-on watch duty—AI models in fraught need of firm hand correction, cycle-splitting down communication lanes. Analytics presented raw matter naught as serverless backdoor machinations echo drudgery scenes from mind-bedecked quality assurance. Welcome, architect shepherds, to this sepulchral saga.

| SaaS Wrapper | Algorithm Complexity | GPU Utilization | API Latency (ms) | Vector Database Status |

|---|---|---|---|---|

| Wrapper A | O(n^3) | 70% | 150 | Operational |

| Wrapper B | O(n log n) | 95% | 300 | Full |

| Wrapper C | O(n^2) | 82% | 250 | Failing |

| Wrapper D | O(1) | 60% | 50 | Stable |

| Wrapper E | O(n) | 40% | 75 | Corrupted |

| Wrapper F | O(n^2 log n) | 88% | 400 | Critical |

In conclusion, these AI SaaS wrappers are doomed, suffocated by their creators’ own incompetence. They will falter, squeezed by process inefficiencies, overwhelmed by the very mess they constructed. This isn’t a debate—it’s recognition of the inevitable.

3 Practical FAQs

-

Why will 90% of AI SaaS wrappers fail

Zero differentiation. Yet another wrapper over an existing API, ignoring API latency and rate-limiting. Adding a fancy UI won’t fix your O(n^2) complexity. -

Can these SaaS wrappers optimize performance

By pretending CUDA memory limits don’t exist? Running your expensive GPU tasks on bloated frameworks is not optimization, it’s negligence. -

What are better alternatives for AI services

Build robust pipelines that prioritize efficiency. Avoid vector database failures by using proven scalable designs. Fancy terms and buzzwords can’t replace engineering excellence.