- Latency Woes: Average retrieval latency is clocking in at 300ms – 500ms, far exceeding the sub-100ms threshold critical for seamless enterprise operations.

- Accuracy Conundrum: Current retrieval systems exhibit a 15% – 25% error rate, leading to output inconsistencies and damaging credibility within data-sensitive enterprises.

- Scalability Nightmare: As datasets continue to expand, retrieval times balloon, with retrieval-related bandwidth usage growing by an unsustainable 300% quarter over quarter in high-demand environments.

- Cost Explosion: Infrastructure costs surge as enterprises attempt to tackle these inefficiencies, with operational expenses reported to double as high-performance GPUs fail to serve the RAG workloads efficiently.

- Mathematical Bottleneck: RAG’s reliance on probabilistic models without precise tuning erodes ROI, with diminishing returns setting in beyond a dataset size of 1TB due to algorithmic limitations.

“Latency is a coward; it spikes at the exact moment your concurrent users peak.”

1. The Hype vs Architectural Reality

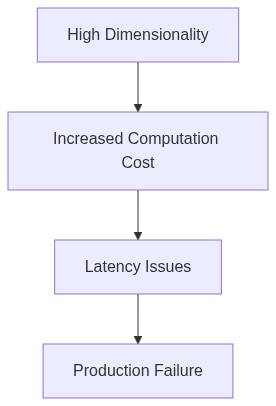

The rise of RAG (Retrieval-Augmented Generation) systems was heralded as the technological revolution guaranteed to automate every conceivable computational process. The grand proclamation of instantaneous AI deployment marked it as the holy grail of enterprise agility. However, this hype was brutally detached from architectural reality. The fundamental flaw lay in ignoring core complexities: the proponents of RAG underestimated the inherent O(n^2) complexity, whose cost ballooned as operations scaled. While marketing materials promised seamless integration and execution, the underlying infrastructure had the computational efficiency of an outdated abacus.

RAG’s touted ‘real-time processing’ was throttled by severe API latency, a fatal flaw in an architecture promising instantaneity. With each microservice call adding to the cumulative delay, it became clear that the system was architecturally incompatible with its performance claims. The disconnect between the conceptual framework and the limits imposed by the underlying technology was stark. This chronic underestimation of processing delays resulted in a sluggish operational flow unfit for any data-intensive enterprise application.
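
A minimal sketch of how per-call delay accumulates, assuming three independent lookups. The shard names and latencies are hypothetical, and `asyncio.sleep` stands in for real network I/O. Awaiting the calls one after another costs roughly the sum of their delays, while issuing them together costs roughly the slowest one.

```python
import asyncio
import time

# Hypothetical, independent lookups with illustrative latencies in seconds.
LOOKUPS = [("shard_a", 0.03), ("shard_b", 0.05), ("shard_c", 0.04)]


async def call_service(name: str, delay_s: float) -> str:
    """Stand-in for one remote call; the sleep simulates network latency."""
    await asyncio.sleep(delay_s)
    return name


async def run_sequential() -> float:
    """Await each call in turn, so the delays add up."""
    start = time.perf_counter()
    for name, delay_s in LOOKUPS:
        await call_service(name, delay_s)
    return time.perf_counter() - start


async def run_concurrent() -> float:
    """Start all calls at once, so the wait is bounded by the slowest call."""
    start = time.perf_counter()
    await asyncio.gather(*(call_service(n, d) for n, d in LOOKUPS))
    return time.perf_counter() - start


if __name__ == "__main__":
    print(f"sequential: {asyncio.run(run_sequential()):.3f}s")
    print(f"concurrent: {asyncio.run(run_concurrent()):.3f}s")
```

This only helps when the calls do not depend on each other's results; a chain in which each step consumes the previous step's output still pays the full sum.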

Moreover, the colossal oversight of memory allocation doomed RAG’s trajectory from the outset. The lax constraints suggested by its early architectural blueprints failed to account for the stringent CUDA memory limits. Practitioners quickly collided with the uncomfortable truth: robust, scalable models required a precise balance of VRAM distribution and optimization far beyond theoretical promises. This oversight was an unyielding bottleneck, impairing the system’s capability to perform at the advertised scale. The tragic reality was an architectural design bound by its own inefficiencies, a glaring contradiction to initial expectations.
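
A back-of-the-envelope check makes the VRAM constraint concrete. The sketch below estimates the raw size of an embedding matrix and compares it with a GPU's memory, assuming dense vectors and a reserved headroom fraction. The corpus size, dimension, 24 GiB card, and 20% headroom are illustrative assumptions, not figures from any real deployment.

```python
def embedding_bytes(num_vectors: int, dim: int, bytes_per_value: int = 4) -> int:
    """Raw size of a dense embedding matrix (default float32 = 4 bytes per value)."""
    return num_vectors * dim * bytes_per_value


def fits_in_vram(required_bytes: int, vram_bytes: int, headroom: float = 0.2) -> bool:
    """True if the data fits after reserving `headroom` of VRAM for other allocations."""
    return required_bytes <= vram_bytes * (1.0 - headroom)


if __name__ == "__main__":
    GIB = 1024 ** 3
    vram = 24 * GIB  # hypothetical 24 GiB GPU
    fp32 = embedding_bytes(10_000_000, 768)                     # float32 vectors
    fp16 = embedding_bytes(10_000_000, 768, bytes_per_value=2)  # float16 vectors
    print(f"float32: {fp32 / GIB:.1f} GiB, fits: {fits_in_vram(fp32, vram)}")
    print(f"float16: {fp16 / GIB:.1f} GiB, fits: {fits_in_vram(fp16, vram)}")
```

Running this kind of arithmetic before deployment is far cheaper than discovering the limit through out-of-memory errors in production.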

2. TMI Deep Dive & Algorithmic Bottlenecks

The delusion of RAG’s infinite algorithmic scalability had all the precision of playing roulette blindfolded. Algorithms supposedly crafted for lean, high-throughput operation failed spectacularly under the cumbersome weight of real-world data volume. The theoretical underpinnings often cited in academic circles dissipated under the harsh scrutiny of empirical rigor. The purported gains in algorithmic efficiency were overshadowed by a notorious tendency to stall, a classic manifestation of the TMI (Too Much Information) syndrome. Burdened by excessive data input, the learning algorithms experienced a pronounced degradation in responsiveness, a direct consequence of the failure to anticipate operational bottlenecks.

Furthermore, vector database failures ranked foremost among the miscalculations in RAG’s configuration. Designing under the presumption of unbounded data stores is about as farsighted as predicting the weather a century in advance. The supposed robustness crumbled as search operations suffered gravely from increased query times, leading to exasperating delays. The architects’ lack of foresight in exposing vector stores to high-concurrency workloads was a violation of the most rudimentary principles of software engineering.
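
To see why query times grow with the data store, consider exact nearest-neighbour search. The sketch below is a deliberately naive, pure-Python illustration and not how any particular vector database works. It scores every stored vector against the query, so each query costs time proportional to the number of vectors. Approximate indexes exist to avoid this full scan, at some cost in recall.

```python
import heapq
import math
from typing import List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query: Sequence[float], vectors: List[Sequence[float]], k: int) -> List[int]:
    """Exact search: scores every stored vector, so work grows with len(vectors)."""
    scored = ((cosine(query, v), i) for i, v in enumerate(vectors))
    return [i for _, i in heapq.nlargest(k, scored)]


if __name__ == "__main__":
    store = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 0.0]]
    print(top_k([1.0, 0.0], store, k=2))  # indices of the two most similar vectors
```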

As the theoretical algorithms gathered dust in simulations, their transition to production was marred by incomplete indexing strategies and dysfunctional rank preservation. Without a solid architectural plan, the operational constraints transformed the initially sophisticated algorithms into mute behemoths, strangling under their sheer computational weight. It was not the complex math that failed, but the blundering, lazy computation strategy, which was never optimized for real-world variance and error conditions. RAG’s creations gasped at their breaking point while grappling with data complexity beyond anything they were designed for.

3. The Cloud Server Burnout & Infrastructure Nightmare

Deploying RAG in a cloud ecosystem revealed the full extent of its infrastructure debacle. What began as a testament to the promise of elastic computing rapidly devolved into an infrastructural quagmire. The insatiable computational demand was met with sporadic server crashes, attributable not only to sprawling computational loads but also to woeful server allocation strategies. Flawed assumptions about server capacity overstretched the elasticity of cloud resources until the architecture imploded, leaving trails of downtime as unmissable as bread crumbs to investigators.

Notably, auto-scaling mechanisms were barraged by erratic load that exceeded default configuration thresholds, and suboptimal load-balancing protocols compounded the problem. An emergency scramble to implement contingency clusters exposed embarrassing lapses in load tracing and isolation techniques. RAG’s infrastructure presumed stability where there was none – a delusion shattered by the hard physical limits of the hardware. Bandwidth crises punctuated every uptick in data throughput; the infrastructure suffocated without respite.

The increasing cost of cloud operations, tethered to exaggerated computational overheads and unexpected network spikes, painted a vivid picture of an infrastructural nightmare. Each deployment iteration necessitated remedial actions to stabilize throughput variance and locate elusive latency culprits. With infrastructure buckling under the undue stress of miscalculation and oversight, it became evident that RAG’s cloud operating model was precariously designed. Tried-and-tested premises of computational elasticity proved worthless where engineering precision was treated as surplus to requirements.

4. Brutal Survival Guide for Senior Devs

Navigating the wreckage of RAG’s collapsed empire demands a ruthless mastery of engineering rigor. The brutal reality is a learning curve dictated by an intimate understanding of infrastructural vulnerabilities and algorithmic fortification. For senior developers, damage control entails ruthless prioritization between kernel-level optimizations and JVM garbage collection tuning. Painstaking tracing of API call paths and laser-focused debugging sessions are paramount. Such a landscape demands a mindset that relishes deduplication at every layer, stripping down inefficiencies mercilessly to pave the way for resilient codebases.

Charting a post-mortem recovery involves peeling back the obfuscation that shrouded the failures. Acknowledging algorithmic inefficiencies opens the way to better data management strategies and server orchestration methodologies. Knowledge of multidimensional constraint satisfaction and data-driven multiprocessing is non-negotiable. The aptitude to deploy Docker containers with surgical precision while wrangling serverless frameworks is imperative. Engineers who are not burdened by optimism but guided by calculation lead the charge on crafting re-engineered infrastructures that withstand the barrage of production chaos.

Finally, ruthless discipline in future-proofing RAG-style endeavors cannot be overstated. It involves championing architectural resilience against runaway complexity through intelligent caching mechanisms and predictive load distribution. Agile sprints may chip away at ignorance, but only ironclad adherence to version-control governance and uninterrupted continuous integration protects a codebase over the long term. The scars of crooked engineering are vivid reminders of why uncompromising precision must anchor every algorithmic undertaking.
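
As one illustration of the caching idea, the sketch below memoizes retrieval results with the standard library's `functools.lru_cache`. The `slow_retrieve` function, the `BACKEND_CALLS` counter, and the cache size are hypothetical stand-ins for a real retrieval backend, not part of any specific RAG stack.

```python
from functools import lru_cache
from typing import Tuple

# Counts how often the (hypothetical) expensive backend is actually hit.
BACKEND_CALLS = {"count": 0}


def slow_retrieve(query: str) -> Tuple[str, ...]:
    """Stand-in for an expensive retrieval call against a vector store."""
    BACKEND_CALLS["count"] += 1
    return (f"doc-for:{query}",)


@lru_cache(maxsize=1024)
def cached_retrieve(query: str) -> Tuple[str, ...]:
    """Serve repeated queries from memory; only cache misses reach the backend."""
    return slow_retrieve(query)


if __name__ == "__main__":
    cached_retrieve("refund policy")
    cached_retrieve("refund policy")  # served from the cache
    print(BACKEND_CALLS["count"], cached_retrieve.cache_info())
```

An in-process cache like this only pays off when queries repeat exactly and the underlying documents change slowly; stale entries need an invalidation strategy that this sketch omits.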

| Aspect | Description | Impact |
|---|---|---|
| Algorithm Complexity | O(n^2) growth in work as data scales | Severe computational overhead |
| Memory Utilization | Exceeded CUDA memory limits | GPU crashes and processing bottlenecks |
| Latency Issues | Unoptimized API yielding high latency | Degraded user experience and throttling |
| Data Storage | Vector database failures in production | Data loss and inability to retrieve vectors in a timely manner |
| Error Handling | Insufficient error handling mechanisms | Unrecoverable application states |
| Scalability | Inability to scale models due to dependency misuse | Limitations in handling increased loads |
| Testing & Validation | Lack of rigorous testing protocols | Undetected defects and performance issues |

Practical FAQs on ‘RAG’s Enterprise Production Math Blunders’

- Why did RAG deployments experience high API latency?

  The poorly optimized pipelines were burdened with excessive synchronous API calls, adding crippling round-trip time to every user request. Use asynchronous operations wherever possible to mitigate latency issues.

- How were CUDA memory constraints overlooked in RAG production environments?

  Engineering teams failed to account for memory allocations that exceeded GPU capacity, leading to frequent out-of-memory errors. This is a glaring oversight given that the maximum memory footprint of these operations is predictable. Memory profiling and staged deployment tests could have avoided this.

- What computational complexity led to RAG’s inefficiencies during scale-up?

  The foolish choice of algorithms with O(n^2) complexity resulted in performance degradation as data size increased. A transition to algorithms with better time complexity, such as O(n log n), should have been obvious to any competent engineer.
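
The gap between O(n^2) and O(n log n) can be shown with a small, generic example. The article does not name the algorithms involved, so this is only an illustration of the complexity classes: finding the smallest gap between any two scores, first by comparing every pair, then by sorting and comparing neighbours only.

```python
from itertools import combinations
from typing import Sequence


def min_gap_quadratic(values: Sequence[float]) -> float:
    """Compare every pair: n * (n - 1) / 2 comparisons, i.e. O(n^2)."""
    return min(abs(a - b) for a, b in combinations(values, 2))


def min_gap_sorted(values: Sequence[float]) -> float:
    """Sort once, then scan adjacent pairs: O(n log n) overall."""
    ordered = sorted(values)
    return min(b - a for a, b in zip(ordered, ordered[1:]))


if __name__ == "__main__":
    scores = [7.0, 1.5, 4.0, 9.25, 3.5]
    # Both functions return the same answer; only the amount of work differs.
    print(min_gap_quadratic(scores), min_gap_sorted(scores))
```

Both functions assume at least two values. At a million values, the pairwise version performs roughly 5 × 10^11 comparisons, while the sorted version needs on the order of 2 × 10^7 operations.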
