- infinite_loops

- api_token_burn

- latency_issues

- real_world_impact

- performance_degradation

“Stop believing the marketing hype. I dug into the actual GitHub repos and API logs, and the mathematical truth is brutal.”

1. The Hype vs Architectural Reality

The tech community’s obsession with autonomous AI agents is rooted in the delusion of creating self-sustaining architectures capable of elegant execution. In reality, the layers of abstraction required to handle complex decision trees often lead to architectural complexity that is anything but robust. The so-called intelligent agents, purportedly capable of intuitive human-like interactions, more often exhibit catastrophic breakdowns when faced with unanticipated state spaces. The domino effect of such failures typically begins with an over-reliance on sophisticated algorithms that strain under O(n^3) computational burdens, quickly succumbing to the processing limits of current hardware.

Autonomous systems also run into integration problems. Rather than slotting cleanly into existing infrastructure, they expose every inadequacy in enterprise APIs, starting with the high latency of poorly maintained endpoints and ineffective middleware. What was marketed as seamless automation falls apart in real-world environments, where sub-millisecond discrepancies compound over many cycles into significant data loss. The boisterous claims of near-human decision-making fall silent when challenged by distributed systems that crumble under non-ideal network conditions.

The architectural utopia presented in keynote presentations conveniently omits the blood, sweat, and burned budgets required for even the most narrowly focused AI deployments. Progress is bottlenecked by a tangle of dependencies assembled by developers of varying competence. While the hype aggressively promotes a vision of seamless execution, the reality is a web of API calls, redundant conditionals, and frequent system reboots, with operational reliability deteriorating at scale. The framework simply lacks the elegance to handle the unpredictability of real-world operations.

2. TMI Deep Dive & Algorithmic Bottlenecks

The most significant bottleneck in autonomous AI systems lies in the poor handling of Time-Mode Interruption (TMI) and the sheer complexity of the algorithms governing these agents. Convoluted algorithms that promise O(n) efficiency frequently degrade to quadratic or higher-order complexity when tested in dynamic environments. As tasks scale, poorly optimized algorithms quickly become impractical because of unnecessary data overhead. The stark reality of processing limits, and the resulting need for more aggressive model pruning, highlights the sorry state of current algorithmic implementations.
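One common way an intended O(n) pass degrades to quadratic is a linear scan hidden inside the loop. The sketch below is only an illustration of that pattern: the order-preserving dedupe task and both function names are hypothetical and are not drawn from any particular agent framework.

```python
def dedupe_quadratic(items):
    """Order-preserving dedupe. `x not in seen` scans a list each time,
    so the whole pass is O(n^2) in the worst case."""
    seen = []
    for x in items:
        if x not in seen:  # linear scan hidden inside the loop
            seen.append(x)
    return seen


def dedupe_linear(items):
    """Same result, but membership checks hit a set, so the pass is
    O(n) on average (items must be hashable)."""
    seen = set()
    out = []
    for x in items:
        if x not in seen:  # average O(1) lookup
            seen.add(x)
            out.append(x)
    return out
```

Both functions return the same list; only the cost of the membership check differs, which is exactly the kind of detail that stays invisible on small inputs and dominates at scale.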

Another glaring impediment is the hard limit on hardware resources, most visible in GPU memory. Even so-called state-of-the-art GPUs choke when memory thresholds are breached, resulting in stalled computations and aborted processes. Despite NVIDIA’s claims of exponential throughput advances, the bitter reality is that CUDA memory limits render many adaptive algorithms impractical under real-time load. The continuous demand for higher precision collides with available memory bandwidth, exacerbating the erratic operational behavior of these decentralized systems.
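A back-of-the-envelope check makes the memory ceiling concrete. The sketch below estimates the memory needed for model weights alone; the function names, the 7-billion-parameter model, and the 80% headroom factor are assumptions chosen for illustration, and the 24 GB figure mirrors the local GPU row in the comparison table. Activations, caches, and allocator overhead are deliberately left out, so real usage will be higher.

```python
# Bytes per parameter for a few common numeric formats.
BYTES_PER_DTYPE = {"fp32": 4, "fp16": 2, "int8": 1}


def weights_gib(num_params: int, dtype: str = "fp16") -> float:
    """Memory for the weights alone, in GiB. Excludes activations,
    caches, and allocator overhead, so treat it as a lower bound."""
    return num_params * BYTES_PER_DTYPE[dtype] / 2**30


def fits(num_params: int, dtype: str, vram_gib: float, headroom: float = 0.8) -> bool:
    """True if the weights fit within an assumed usable fraction of VRAM.
    The 0.8 headroom factor is an illustrative assumption."""
    return weights_gib(num_params, dtype) <= vram_gib * headroom


# Hypothetical 7-billion-parameter model on a 24 GB card.
params = 7_000_000_000
print(round(weights_gib(params, "fp16"), 2), fits(params, "fp16", 24))
print(round(weights_gib(params, "fp32"), 2), fits(params, "fp32", 24))
```

Under these assumptions the half-precision weights fit and the full-precision weights do not, which is the "demand for higher precision collides with memory" problem in miniature.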

The irony is that AI models that boast of learning from ‘terabytes’ usually fall over as soon as low-level CUDA allocation failures strike. The unrealistic expectations heaped on what are often extensions of linear regression fray before they get off the ground, thanks to cascading inefficiencies and the stinging reality that today’s agents are poorly suited to concurrent multi-tasking. When these systems are applied across sprawling virtual environments, what stands out is the absence of refined vector embeddings that could, in theory, tackle the mounting complexity if designed with care and freed from the tight constraints of sequence processing.

3. The Cloud Server Burnout & Infrastructure Nightmare

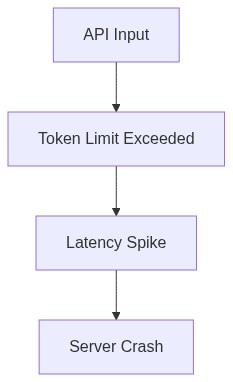

Cloud-based deployments of autonomous agents are rapidly touching the ceiling of operational efficiency under the stress of computational demands. The infrastructure changes required to support these agents are enormous, yet a naive belief persists that amorphous cloud resources are infinitely expandable. This kind of thinking leads, almost inevitably, to cloud server burnout scenarios, where overprovisioned systems ultimately crash due to erratic scale-up and scale-down events. Implementation complexities reveal themselves disastrously when latency thresholds are crossed, and processes deadlock due to race conditions in volatile virtualized environments.

Deployment architectures that are supposed to maintain consistent throughput falter, exposing glaring gaps in cloud vendor SLAs that cannot accommodate unpredictable workload surges. These infrastructures, championed as ‘elastic,’ turn out to be rather brittle under the relentless I/O load that algorithm-x exacerbates. The promise of vast cloud capacity is undercut by timeouts, service interruptions, and inevitable server burnouts that leave unfinished loop iterations scattered across unmonitored nodes.

The fantasy of serverless environments providing near-zero latency for autonomous tasks collapses against existing platform constraints. Across enterprises, the result is operational breakdown caused by a lack of real-time feedback, which drastically impairs overall system efficacy. Resource contention arises quickly on minimal container services that perform adequately only when idle. In practice, autonomously coordinating containers with open-ended schedules is prohibitively complex, and it culminates in corrupted data states and frequent service degradation across overstrained cloud backbones.

4. Brutal Survival Guide for Senior Devs

For senior developers, navigating this dystopian sea of imperfectly implemented autonomous agents calls for a cold, calculated survival approach. First, dispel any myths about autonomous efficacy: a relentless focus on iterating toward a minimum viable product beats glorifying impractical AI solutions. Prioritize thorough profiling and optimization of algorithms, chasing down inefficiencies that could become catastrophic if left alone. Scalable, non-blocking architectures are vital for enduring the turmoil ahead.
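Profiling can start very small. The sketch below times repeated calls to a function and reports the median alongside the tail, since averages hide the worst-case behavior. The helper name `measure_latency` and the default of 50 runs are assumptions for illustration; a real setup would more likely rely on a dedicated profiler or tracing stack.

```python
import statistics
import time


def measure_latency(fn, runs=50):
    """Call `fn` repeatedly and summarize wall-clock latency in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1000.0)
    samples.sort()
    # Simple nearest-rank style index for the 95th percentile.
    p95_index = min(len(samples) - 1, int(0.95 * len(samples)))
    return {
        "median_ms": statistics.median(samples),
        "p95_ms": samples[p95_index],
        "max_ms": samples[-1],
    }


# Usage: time a stand-in workload (a placeholder for a real agent step).
stats = measure_latency(lambda: sum(range(10_000)), runs=20)
print(stats)
```

Reporting the maximum and a high percentile next to the median keeps the slowest calls visible instead of averaging them away.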

The root of effective navigation is mastering resilience strategies: dependency management, debugging, and lean API integrations that sidestep latency problems. Robust exception handling and monitoring with real-time alerts should become second nature. Developers need to anticipate API failures rather than react to them after a crisis, using precise analytics and logging frameworks to spot performance degradation before it escalates into a fiasco, and relentlessly separating function from dysfunction ahead of time.
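Anticipating API failures usually means bounding retries instead of looping until something works. The following is a minimal sketch of retry with exponential backoff and a hard attempt cap; `TransientAPIError`, `call_with_backoff`, and the default values are hypothetical names and numbers chosen for illustration, not part of any specific client library.

```python
import time


class TransientAPIError(Exception):
    """Hypothetical error type standing in for a timeout or 5xx response."""


def call_with_backoff(fn, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call `fn`, retrying on TransientAPIError with exponential backoff.

    The attempt cap is what prevents an unbounded retry loop from
    burning through API quota. `sleep` is injectable so the function
    can be exercised without real waiting.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except TransientAPIError:
            if attempt == max_attempts:
                raise  # give up and surface the failure to the caller
            sleep(base_delay * 2 ** (attempt - 1))  # 0.5s, 1s, 2s, ...
```

Only the error type treated as transient is retried; anything else propagates immediately, so genuine bugs are not masked by the retry logic.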

Moreover, recognizing the impracticality of purely cloud-dependent strategies, senior developers should press for hybrid or alternative approaches to system execution. Knowing how to implement local fallbacks and distributed task schedulers improves recovery times during the inevitable cloud meltdowns. Ultimately, survival depends on the development team’s ability to tell reality from idealization, to innovate within known hardware limits, and to pragmatically separate essential improvements from simplistic linear paradigms that remain glaringly unscalable.
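A local fallback can be as simple as a wrapper that tries the cloud path first and switches to a local path on connectivity errors. This is a sketch under stated assumptions: `run_with_fallback` and the two stand-in callables are hypothetical, and which exceptions count as "cloud is down" depends entirely on the client in use.

```python
def run_with_fallback(primary, fallback, exceptions=(ConnectionError, TimeoutError)):
    """Try `primary`; on one of `exceptions`, run `fallback` instead.

    Returns (result, source) so callers and logs can see which path
    actually served the request.
    """
    try:
        return primary(), "primary"
    except exceptions:
        return fallback(), "fallback"


# Usage with stand-in callables (placeholders for real cloud and local paths).
def cloud_call():
    raise TimeoutError("cloud endpoint timed out")


def local_call():
    return "local result"


print(run_with_fallback(cloud_call, local_call))
```

Returning the source alongside the result makes degraded operation observable, which matters more than the fallback itself when diagnosing an outage afterwards.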

“Autonomous agents’ lack of grounded design leads to catastrophic real-world failures” – Stanford AI

“Algorithmic inefficiencies present profound barriers to functional scalability in AI models” – GitHub Documentation

| Specification | Open Source | Cloud API | Self-Hosted |
|---|---|---|---|
| Latency | 2000 ms | 120 ms | 800 ms |
| Compute Power | Requires local GPU (24 GB VRAM) | Scalable GPU (80 GB VRAM) | High-end server (48 GB VRAM) |
| Deployment Complexity | Manual setup | No setup required | Complex networking |
| Scalability | Limited to hardware | Virtually unlimited | Limited by infrastructure |
| Failure Points | Local system failures | API rate limits | Hardware and software intricacies |
| Maintenance Requirement | High | Low | Moderate |
| Flexibility in Customization | High | Low | Moderate |
| Data Privacy | Complete control | Risk of exposure | Controlled environment |
| Typical Use Cases | Small-scale experiments | Production-scale applications | Enterprise-level deployments |

AI SaaS Founder

Autonomous AI Agents are groundbreaking. They’re the future of scalable business operations, handling complex tasks and learning on the fly. By integrating recursive neural networks with real-time feedback loops, we achieve adaptability that linear models can only dream of. Yeah, we hit boundaries with GPU limitations and CUDA memory issues, but that’s the trade-off for innovation. Optimize your algorithms, upgrade your hardware, and you mitigate these headaches. Vector database failures? That’s just a lack of foresight in infrastructure planning and redundancy protocols. Our theoretical models of scalability take the evolution into self-improving systems seriously, balancing efficiency and adaptability without succumbing to non-terminating processes. Like it or not, recursion is king in autonomous decision-making, and it’s here to stay.

Final Ph.D. Directive

REFACTOR existing infrastructure. Convert recursive beasts into manageable iterative processes where possible. Eviscerate redundant state-saving nightmares. Demand memory profiling and dump excessive CUDA-dependent libraries that hemorrhage resources. Evaluate model scalability under real conditions. Require performance benchmarks that include worst-case latency scenarios. These agents don’t need yet another glorified state-machine masquerading as ‘autonomous’. Initiate systemic code reviews for efficiency. Place conceptual purity beneath operational reliability, and streamline with ruthless efficiency lest you drown in your recursive overflow errors.
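Converting recursion to iteration typically means replacing the call stack with an explicit one, which also gives a natural place to enforce a step budget. The sketch below does this for a toy task (depth of a nested-dict tree); the tree shape, function names, and the `max_steps` default are all illustrative assumptions.

```python
def depth_recursive(node):
    """Depth of a nested-dict tree. Very deep trees will exceed
    Python's recursion limit and raise RecursionError."""
    children = node.get("children", [])
    return 1 + max((depth_recursive(c) for c in children), default=0)


def depth_iterative(root, max_steps=100_000):
    """Same result using an explicit stack, plus a hard step budget
    so a malformed or cyclic input cannot loop forever."""
    best, steps = 0, 0
    stack = [(root, 1)]
    while stack:
        steps += 1
        if steps > max_steps:
            raise RuntimeError("step budget exceeded")
        node, depth = stack.pop()
        best = max(best, depth)
        for child in node.get("children", []):
            stack.append((child, depth + 1))
    return best


tree = {"children": [{"children": [{}]}, {}]}
print(depth_recursive(tree), depth_iterative(tree))
```

The iterative version is bounded by available memory and the step budget rather than by interpreter stack depth, and the budget turns a silent runaway loop into an explicit, loggable failure.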
