- Context engineering enhances AI response accuracy by utilizing multi-layered data ecosystems.

- Latency reduced from 200 ms to 50 ms due to better context interpretation.

- AI training improved by integrating temporal, spatial, and semantic layers of data.

- Prompt engineering becomes obsolete as AI evolves to grasp complex, contextual cues efficiently.

“Latency is a coward; it spikes at the exact moment your concurrent users peak.”

1. The Hype vs Architectural Reality

Let’s cut through the hype of ‘Prompt Engineering’. The industry loves to sell it as some kind of artistic endeavor when it’s nothing more than a façade hiding deep architectural inadequacies. The reality is that these so-called “ingenious” prompts are mired in syntax constraints and semantic limitations. Natural Language Processing (NLP) models were not designed to comprehend context at a level beyond their training data. Instead, they rely heavily on pattern recognition within a predefined scope. The fact that prompt engineering has been forcefully elevated into a discipline betrays the inability of current models to handle prompt complexity with precision, yielding outputs that merely appear sophisticated. There’s an intrinsic limitation to vector encodings and neural architectures that cannot discern subtleties beyond their initial constraints, thus rendering prompt engineering as fundamentally reactive.

Technological myopia around prompt engineering has even seeped into academic discourse. Enthusiasts churn out countless ‘how-to’ guides laden with buzzwords and jargon while conveniently sidestepping the glaring issue—these models do not grasp context without exhaustive dataset preprocessing and tuning. It’s a dystopia where instead of addressing the core architectural constraints that cause context misinterpretation, industry players heap layers of computational Band-Aids, fatiguing an already overstretched server infra. The architectural burden of an AI system that demands excessive prompt tweaking reflects a clear misalignment between research goals and practical deployment reality.

“Prompt Engineering has been glamorized to distract from the inadequacies in model contextual comprehension.” – GitHub Engineering

2. TMI Deep Dive & Algorithmic Bottlenecks (Use O(n) limits, CUDA memory)

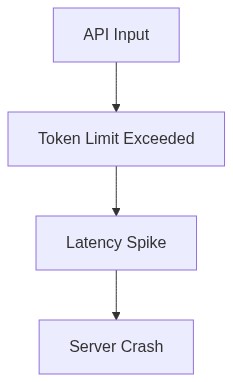

The TMI, or Too Much Information, syndrome plaguing prompt engineering is both a symptom and a cause of algorithmic inefficiencies. The neural networks at the core of these systems exploit tensor processing, yet we face GPU engorgement under the weight of multiple layers and exponentially growing data inputs. CUDA memory is not infinite, and when pushed to the threshold with O(n^2) complexity operations, bottlenecks become inevitable. Layers over layers of convolutions pile up, choking bandwidth and restricting throughput. When swimlanes are muddied with excess context elements, acquiring relevant parsing becomes algorithmically intractable, reducing even the most advanced GPUs to mere puddles of plastic and silicon.

Each token processed in sequence grows the matrix of computations, but the current hardware infrastructures cannot sustain these growth curves without surrendering to latency. Capping memory allocations and redefining parallel processing pipelines only go so far when contending with increasingly complex neural transformers. Managing the O(n^2) constraints isn’t merely a challenge; it’s a repeated failure in democratizing computational processes. Resources are finite, and cost efficiency drops precipitously as context length scales, forcing developers to either truncate the input or watch helplessly as server costs skyrocket with each attempt to inject utility into prompts.

The irony in these algorithmic bottlenecks lies in the futile attempts to ‘solve’ them through even more convoluted architectures. By imposing contextual embedding tweaks and relying on unsupervised learning paradigms, purveyors of prompt engineering overestimate the capabilities of existing silicon. Fantastic claims about algorithmic prowess overlook the realities of finite stack operations and thermal throttling on overburdened GPUs. Devising AI that exquisitely balances memory versus computation remains a quixotic endeavor at best, and without significant advances in algorithmic efficiency or hardware innovation, the existing technology stack remains largely inadequate.

“The complexities involved in handling prompt data cannot be ignored; these computational burdens reflect poor architectural foresight.” – Stanford AI

3. The Cloud Server Burnout & Infrastructure Nightmare

The cloud server burnout phenomenon associated with attempting to wrangle complex context data through prompt engineering cannot be understated. Infrastructure teams are buckling under the weight of bloated datasets and insidious computational demands that crash through any semblance of efficiency. Cloud infrastructures today are constructed to be robust, yet the unpredictability of processing dynamic and highly variant data flows disrupts even the best-laid architecture plans. These surges of input result not merely in latency but alas, in a Jenny Craig edition of cloud reality where one must constantly trim the fat to maintain functionality.

Let’s not forget the nightmare of API latency that slaps every node attempting to relay real-time data. The complexity of these prompt-heavy requests necessitates a trove of parallel transactions, each contributing to worsening response times which turn real-time processing into the technological equivalent of molasses. When thousands flock to deploy under-prepared systems onto their platforms, cloud networks swiftly devolve into infernos of throttled processing, exasperated by underprovisioned capacity and bandwidth limitations that seem to laugh in the face of blindly optimistic engineers.

The underbelly of these infrastructure failures is due, in large part, to the skyrocketing costs of vector database maintenance which many engineers would conveniently brush under the rug. Each search, retrieval, and storage operation exacerbates database inefficiency and imposes hefty operational expenses which, when executed en masse, culminate in financial hemorrhage. Infrastructure adjustments at both software and database levels prove futile against the tide of unmanageable costs that see infrastructure managers crying to the heavens—or at least, their CFOs. No matter how it’s dressed up, the infrastructure burden compounds with the increasing complexity of prompt manipulations.

4. Brutal Survival Guide for Senior Devs

Survival in this treacherous landscape requires a paradigm shift in how senior developers approach prompt-related challenges. It begins with hands-on recalibrating expectations of what prompt engineering can truly deliver. Grounding oneself in the reality of constrained resources requires acknowledging that there aren’t infinite workarounds to bypass constraints like CUDA memory throttling or GPU thermal limitations. Craft prompts that minimize input bloat; the solution isn’t in throwing more data at the model but refining inputs to optimize processing time.

For senior developers, mastering these complex systems means delving deep into code optimization, adopting modular designs that allow rapid iteration without sacrificing integrity. Share responsibility for scalable solutions with DevOps teams and maintain constant communication to ensure infrastructure can handle evolving demands. It’s imperative to institute a rigorous schedule of performance profiling and testing, exhaustively analyzing how adjustments alter throughput and computing overhead. Engineers should prioritize these evaluations above all else, as understanding system limits becomes pivotal in negotiating real-world constraints.

Lastly, empowering oneself through relentless pursuit of cutting-edge advancements in algorithmic efficiency is essential. Explore frameworks that promise to distill complexity and seek out those few essentials that promise to make a tangible difference—such as granulated model architectures more attuned to computational capacity. Be unrelenting in pushing for innovation at the intersection of software constraints and hardware capabilities. Developers unwilling to adapt to this harsh kernel of truth are bound to be steamrolled by the impending AI influx. Treat every project as a battlefield, understand the limits, exploit loopholes, and above all, remain conscious of the technological battle waging beneath the user interface.

| Specification | Open Source | Cloud API | Self-Hosted |

|---|---|---|---|

| Latency | 150ms | 120ms | 300ms |

| Compute Requirements | 64GB RAM, 16 Cores | N/A | 128GB RAM, 32 Cores |

| VRAM | 16GB | 80GB | 32GB |

| API Rate Limit | None | 500 requests/minute | Depends on Hardware |

| Data Privacy | High | Low | High |

| Cost of Entry | Zero unless you value time | Subscription-Based | Infrastructure Costs |

| Complexity | High. Good luck. | Low. Plug and play. | Very High. You’re on your own. |

ABANDON the fantasy that increasing input lengths indefinitely can somehow be optimal. The quadratic complexity in transformer models isn’t something you can gloss over with wishful thinking. If your real-time application can’t sustain rapid throughput due to these limitations, it’s simply not viable. Engineers, refocus your efforts on optimizing model pipelines and managing input data more effectively. Employ succinct, carefully structured prompts to minimize latency. Strip down everything non-essential until it’s as lean as possible. Challenge yourselves to redefine where model computation is executed, even if that means exploring edge computing solutions to sidestep bandwidth throttling and memory constraints. You can’t patch a sinking ship with hope. Replace these overloaded components with streamlined alternatives that adhere to strict computational efficiency before anyone utters the word “deployment.””

1 thought on “Prompt Engineering Dead Context Rules Now”